User Guide

Welcome to the QMonitor User Guide. This documentation will help you monitor and manage your SQL Server instances.

This guide provides all the information you need to use QMonitor effectively. You will learn how to set up monitoring, register SQL Server instances, and use QMonitor features to track performance and health.

What You’ll Find Here

- Overview: Learn what QMonitor is and how it helps you monitor SQL Server

- Concepts: Understand key terms and how QMonitor works

- Getting Started: Set up your first monitored instance

- Tasks: Step-by-step instructions for common tasks

Getting Help

If you need assistance, check the Getting Started guide to begin monitoring your SQL Server instances.

If you want to file a Support Request, log in to QMonitor and go to the Support page.

1 - Overview

Learn what QMonitor is and how it can help you.

What is QMonitor?

QMonitor is a monitoring solution for SQL Server. It collects performance metrics and health data from your SQL Server instances and displays them in easy-to-read dashboards.

QMonitor helps you:

- Track SQL Server performance over time

- Identify problems before they affect users

- Analyze query performance and resource usage

- Monitor SQL Server Agent jobs

- Review blocking, deadlocks, and errors

Why Use QMonitor?

Good for:

- Monitoring multiple SQL Server instances from one place

- Tracking performance trends and capacity planning

- Getting alerts when problems occur

- Analyzing slow queries and high resource usage

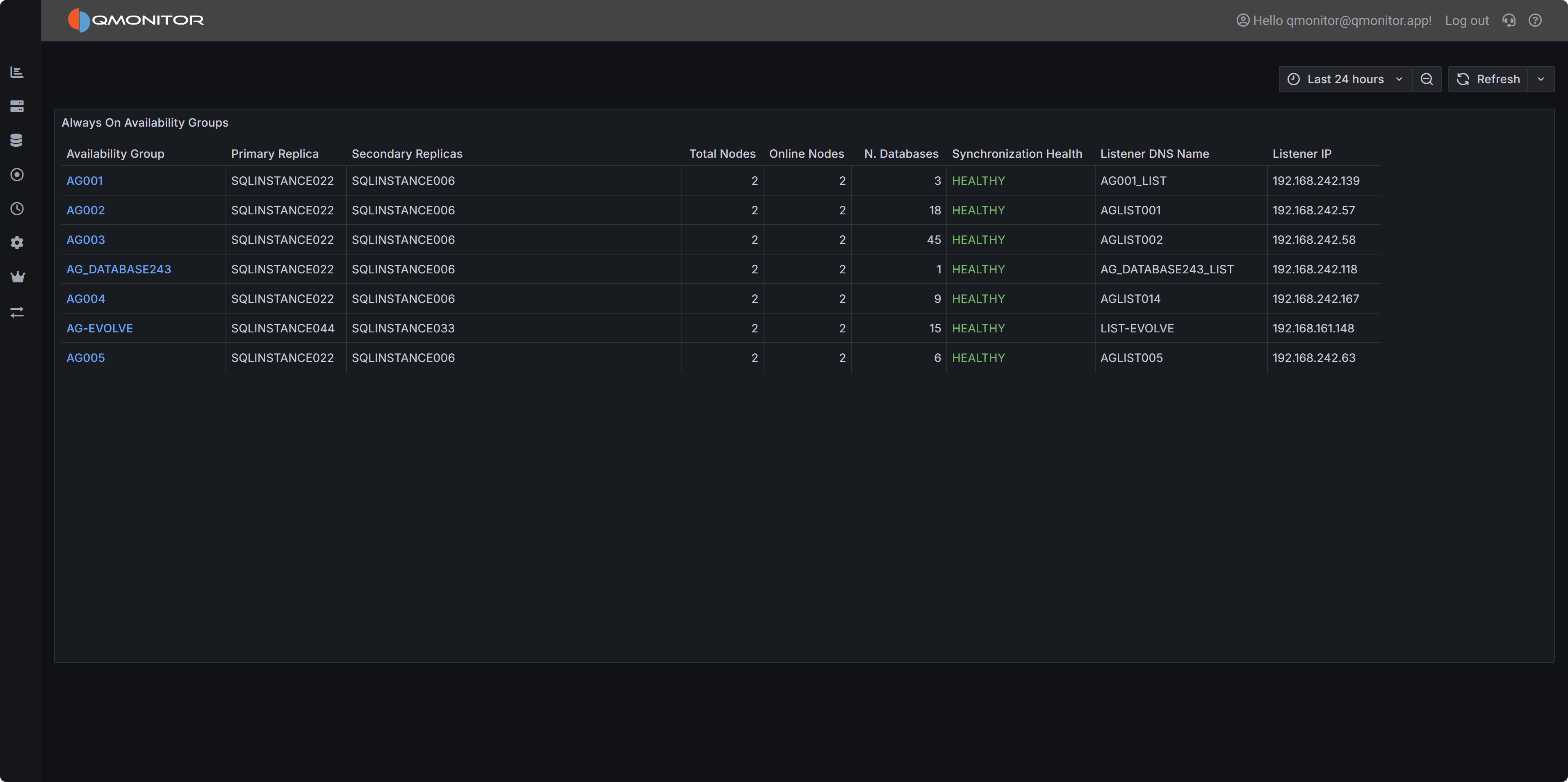

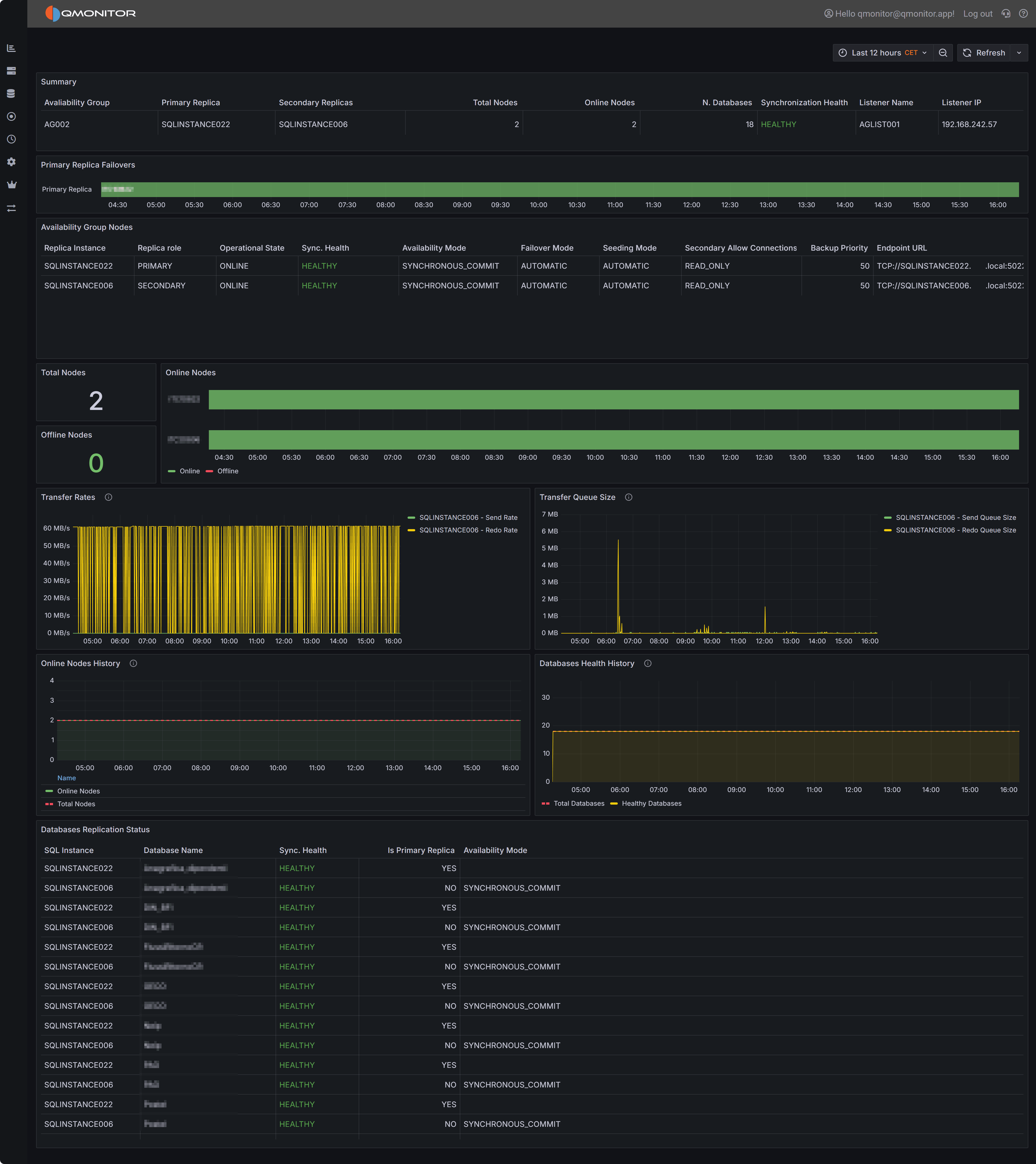

- Monitoring Always On availability groups

- Tracking the outcome and duration of SQL Server Agent jobs

- Documenting instances and databases in your environment

Coming soon:

- Custom dashboards and visualizations

- Oracle and PostgreSQL monitoring

- AI assisted query optimization

- AI assisted incident analysis

Where Should I Go Next?

Ready to get started? Here are your next steps:

2 - Concepts

Learn the key terms and concepts you need to use QMonitor effectively.

This page explains the main concepts in QMonitor. Understanding these terms will help you set up and use QMonitor successfully.

Organization

An organization is a container for monitored SQL Server instances and the users who manage them.

graph TB

OrgA["<br>Organization A<br><br>"]

Owner["Owner User<br>👤<br>(Full Access)"]

User1["Regular User<br>👤<br>(View Only)"]

User2["Regular User<br>👤<br>(View Only)"]

spacer1[" "]:::spacer

spacer2[" "]:::spacer

Agent1["Agent<br>Data Center 1"]

Agent2["Agent<br>Data Center 2"]

Instance1["SQL Server<br>Instance 1"]

Instance2["SQL Server<br>Instance 2"]

Instance3["SQL Server<br>Instance 3"]

Instance4["SQL Server<br>Instance 4"]

OrgA --> Owner

OrgA --> User1

OrgA --> User2

OrgA ~~~ spacer1

spacer1 ~~~ spacer2

OrgA --> Agent1

OrgA --> Agent2

Agent1 --> Instance1

Agent1 --> Instance2

Agent2 --> Instance3

Agent2 --> Instance4

style OrgA fill:#4A90E2,stroke:#2E5C8A,stroke-width:3px,color:#fff

style Owner fill:#52C41A,stroke:#389E0D,stroke-width:2px,color:#fff

style User1 fill:#1890FF,stroke:#096DD9,stroke-width:2px,color:#fff

style User2 fill:#1890FF,stroke:#096DD9,stroke-width:2px,color:#fff

style Agent1 fill:#FA8C16,stroke:#D46B08,stroke-width:2px,color:#fff

style Agent2 fill:#FA8C16,stroke:#D46B08,stroke-width:2px,color:#fff

style Instance1 fill:#722ED1,stroke:#531DAB,stroke-width:2px,color:#fff

style Instance2 fill:#722ED1,stroke:#531DAB,stroke-width:2px,color:#fff

style Instance3 fill:#722ED1,stroke:#531DAB,stroke-width:2px,color:#fff

style Instance4 fill:#722ED1,stroke:#531DAB,stroke-width:2px,color:#fff

classDef spacer fill:#fff,stroke:#fff- When you first sign up for QMonitor, you are not part of any organization

- You can create a new organization or join an existing one

- The user who creates an organization becomes its owner

- Owners can invite users and manage all organization settings

- Regular users can only view data (they cannot change settings)

- Users can belong to multiple organizations with different roles

- All users in an organization can access data from all SQL Server instances registered in that organization

- If you have different teams working on different sets of instances, create separate organizations for each team

User

A User is a registered account identified by an email address. Users can join multiple organizations and have different roles in each organization.

graph LR

User["👤<br><br>User<br>user@example.com<br><br>"]

OrgA["<br>Organization A<br><br>"]

OrgB["<br>Organization B<br><br>"]

OrgC["<br>Organization C<br><br>"]

RoleA["Role: Owner<br>(Full Access)"]

RoleB["Role: Regular User<br>(View Only)"]

RoleC["Role: Regular User<br>(View Only)"]

User --> OrgA

User --> OrgB

User --> OrgC

OrgA --> RoleA

OrgB --> RoleB

OrgC --> RoleC

style User fill:#FA541C,stroke:#D4380D,stroke-width:3px,color:#fff

style OrgA fill:#4A90E2,stroke:#2E5C8A,stroke-width:3px,color:#fff

style OrgB fill:#4A90E2,stroke:#2E5C8A,stroke-width:3px,color:#fff

style OrgC fill:#4A90E2,stroke:#2E5C8A,stroke-width:3px,color:#fff

style RoleA fill:#52C41A,stroke:#389E0D,stroke-width:2px,color:#fff

style RoleB fill:#1890FF,stroke:#096DD9,stroke-width:2px,color:#fff

style RoleC fill:#1890FF,stroke:#096DD9,stroke-width:2px,color:#fffInstance

An Instance represents a SQL Server instance registered in an organization. QMonitor connects to the Instance using the connection string you provide. The connection string includes the authentication method. See the Authentication section for supported methods.

Agent

An Agent is a service that collects metrics from SQL Server instances.

- The Agent is installed as a service on a computer in your network

- The Agent connects to SQL Server instances assigned to it

- If you have multiple data centers, create separate Agents for each location

- Each Agent monitors the instances in its data center

This structure keeps monitoring traffic local and improves reliability.

Monitoring Concepts

QMonitor continuously collects and displays performance data from your SQL Server instances. Understanding these monitoring concepts will help you interpret the data and respond to problems effectively.

Dashboard

A Dashboard is a customizable view that displays metrics from one or more SQL Server instances in real-time.

- Each organization has a set of standard Dashboards

- Dashboards contain widgets that visualize different metrics

- Dashboards update automatically as new data arrives

- All users in an organization can view Dashboards

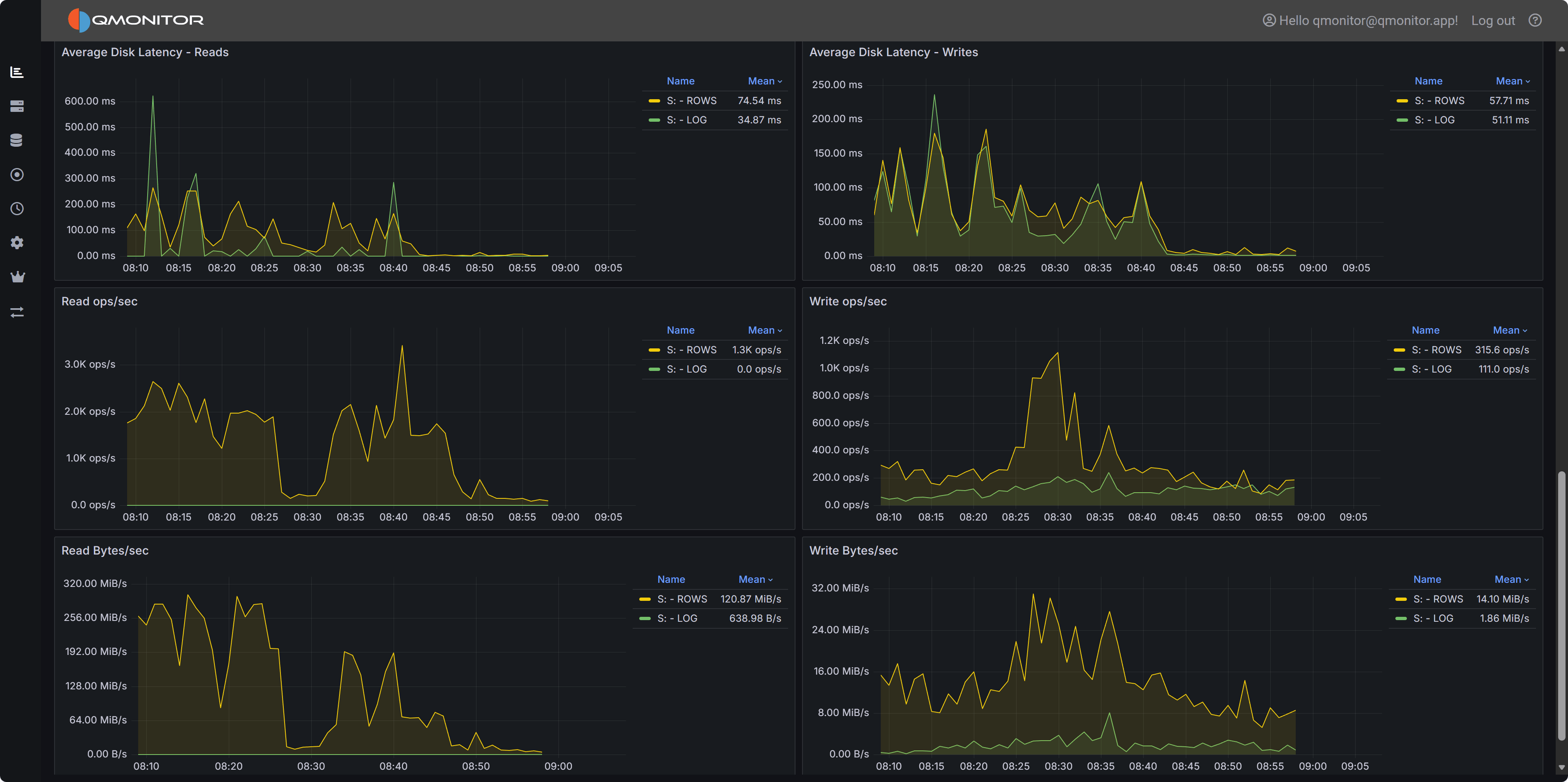

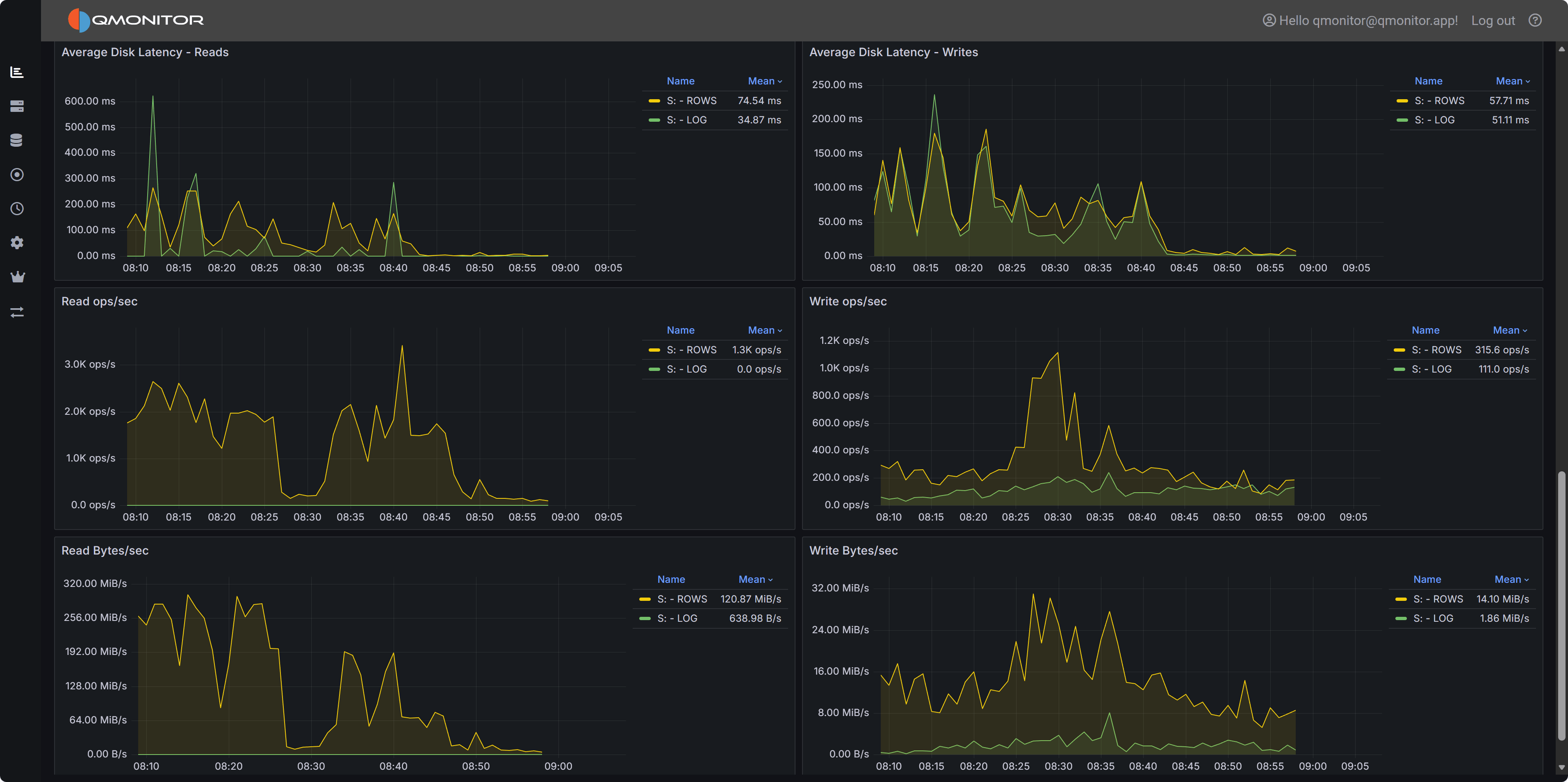

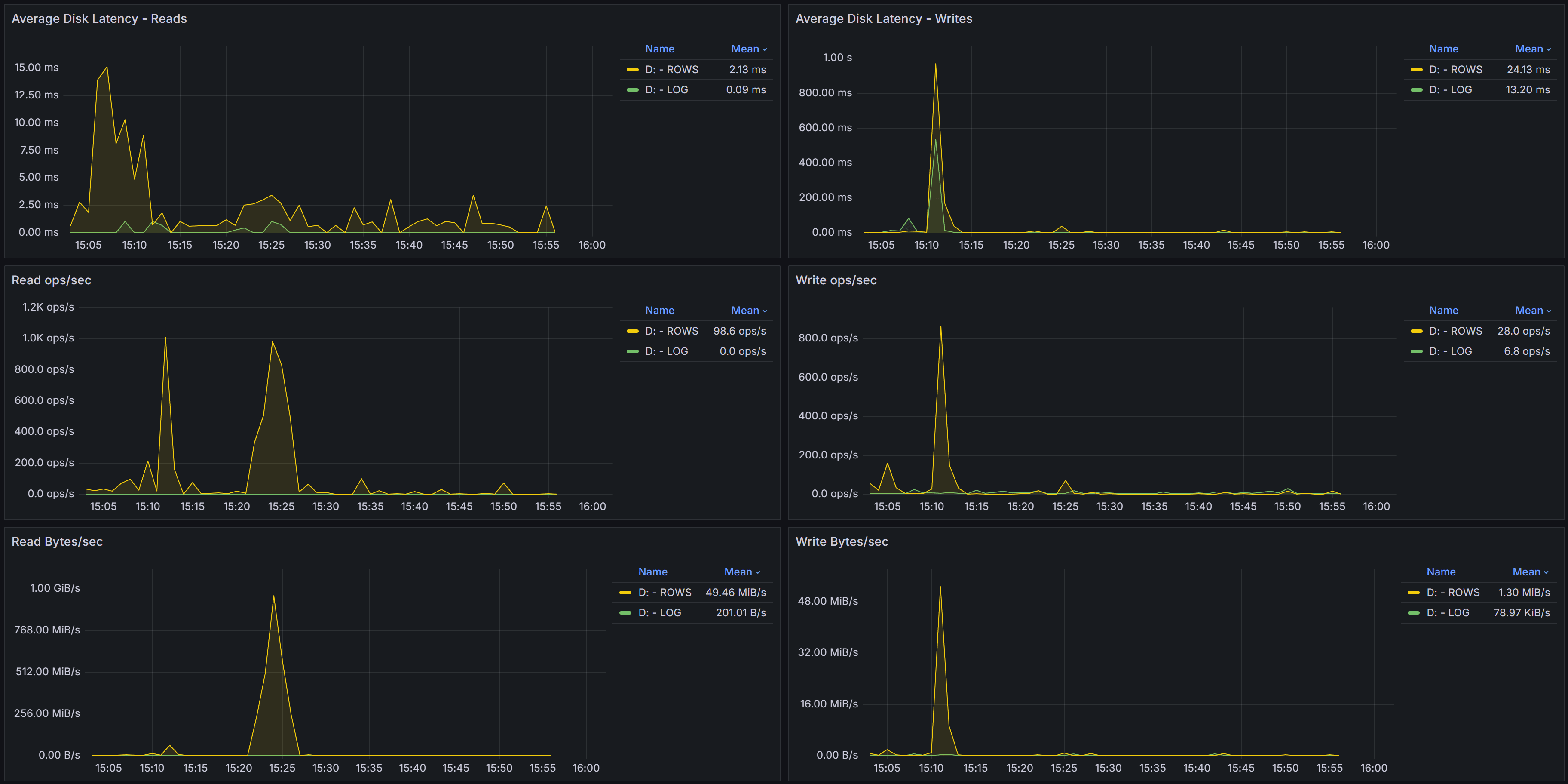

Here is an example of a Dashboard showing disk metrics for a particular instance:

Metric

A Metric is a specific measurement collected from a SQL Server instance at regular intervals.

Examples of metrics:

- CPU usage percentage

- Memory consumption

- Database size

- Active connections

- Query execution time

- Disk I/O operations

Each metric has:

- Current value - The most recent measurement

- Historical data - Past values stored for trend analysis

- Thresholds - Optional warning and critical levels

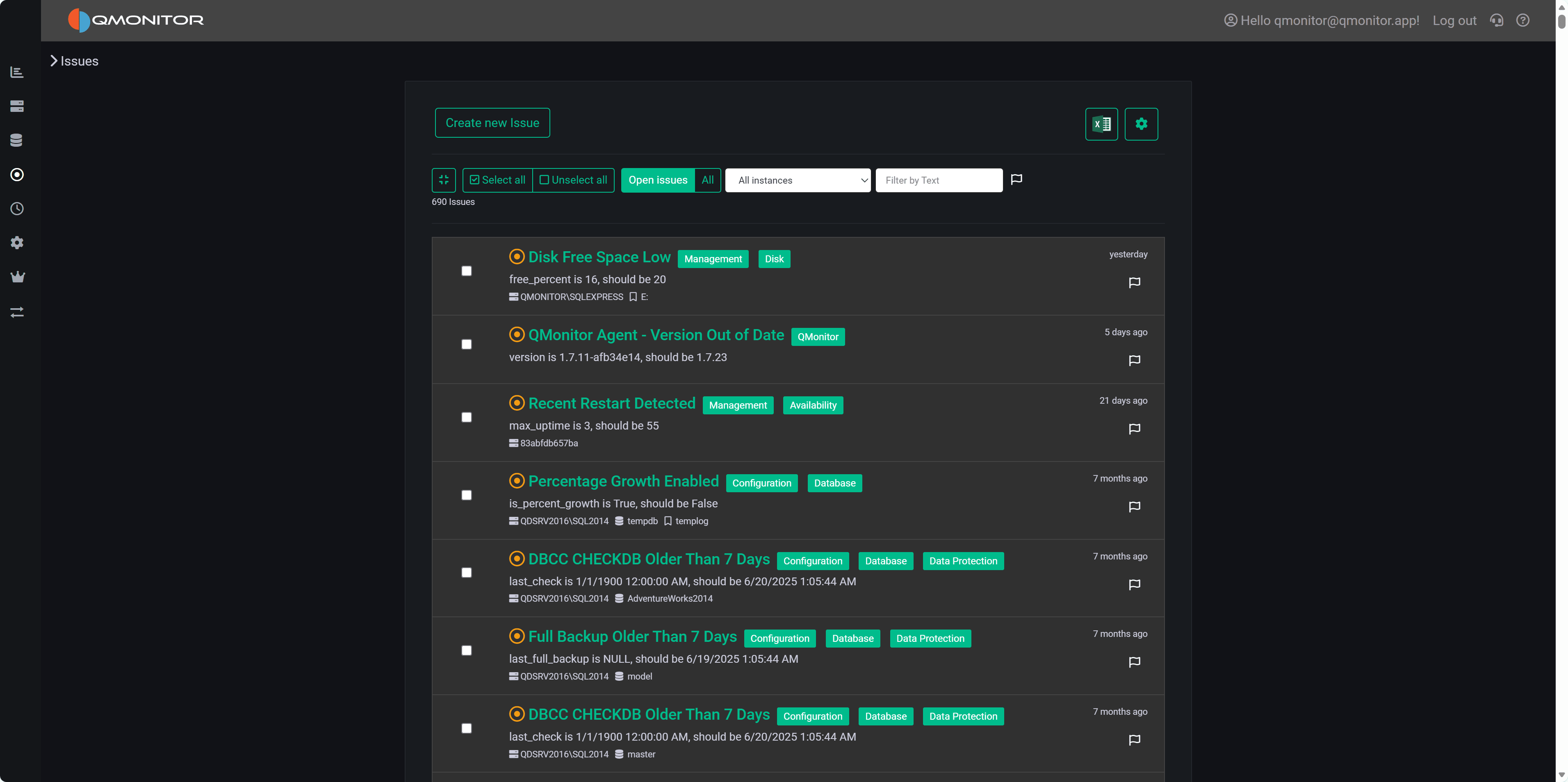

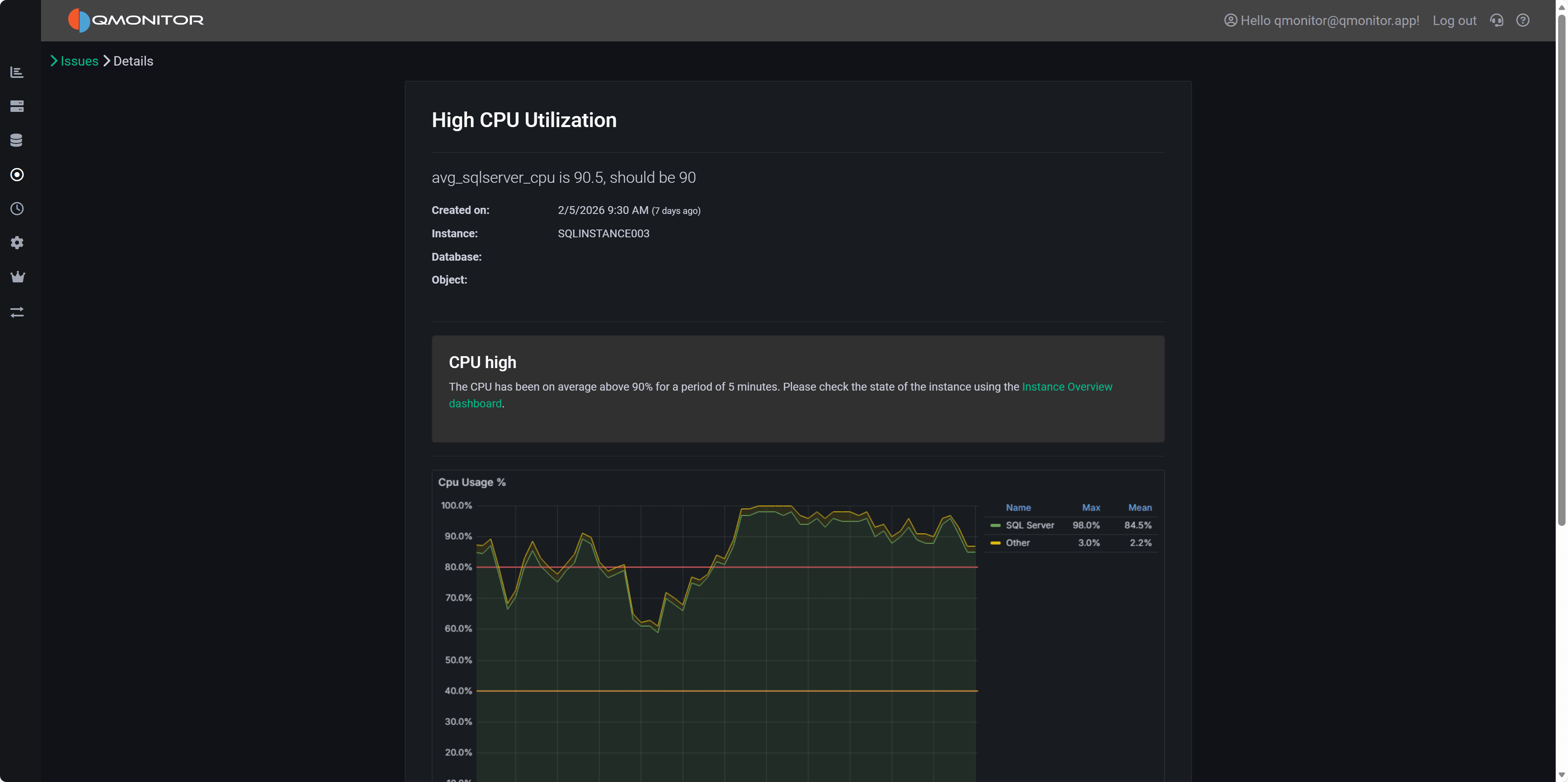

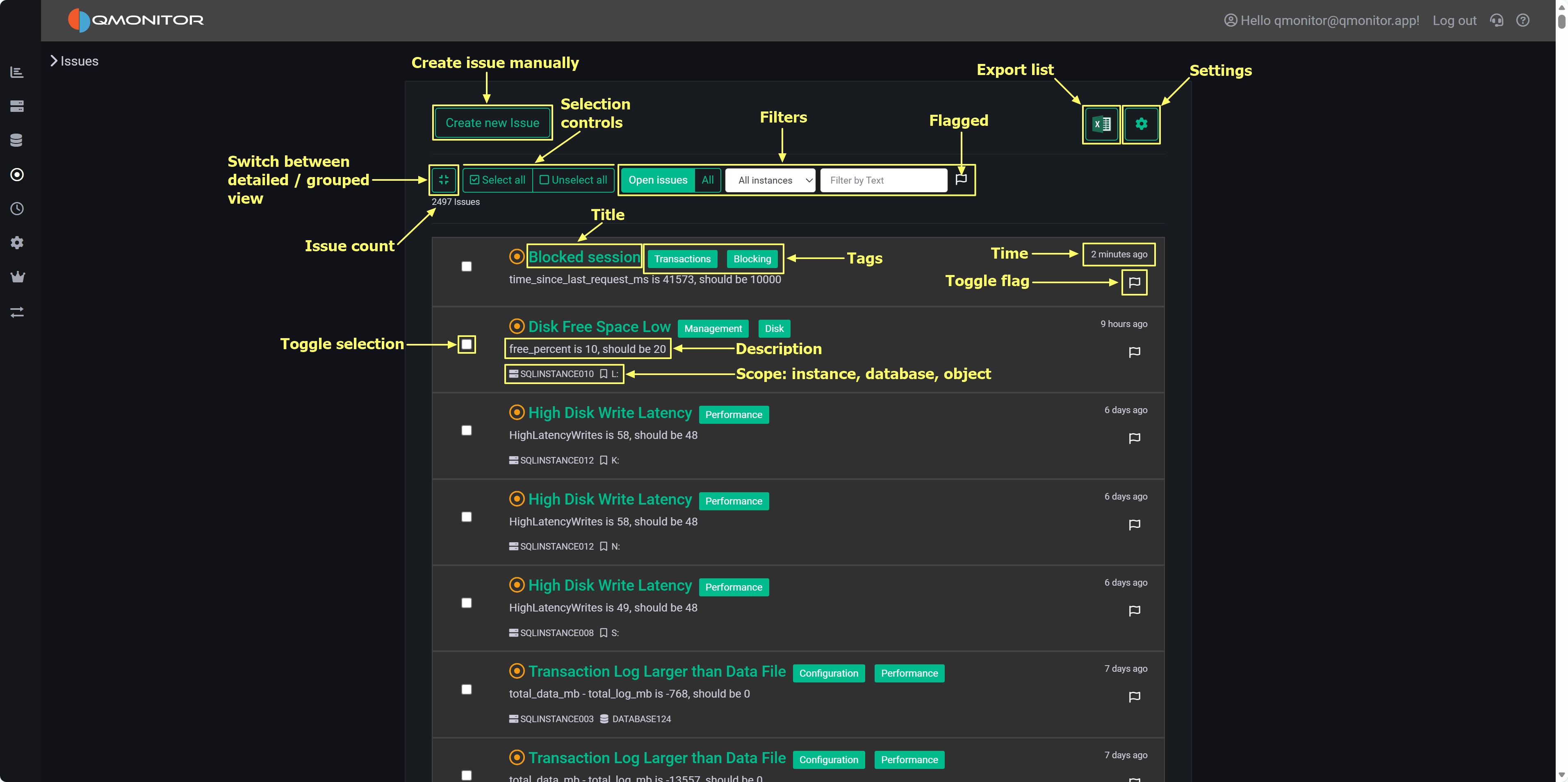

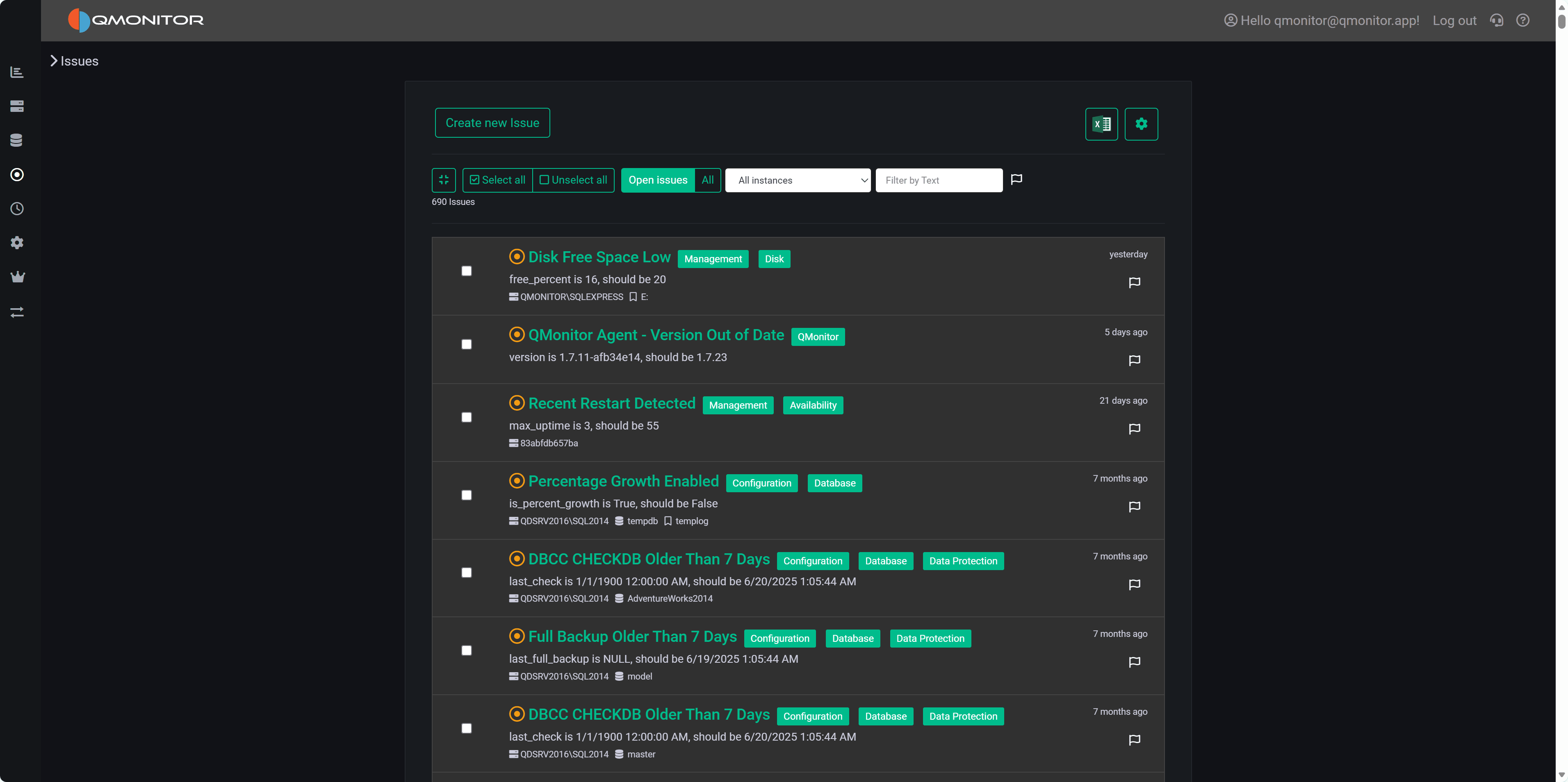

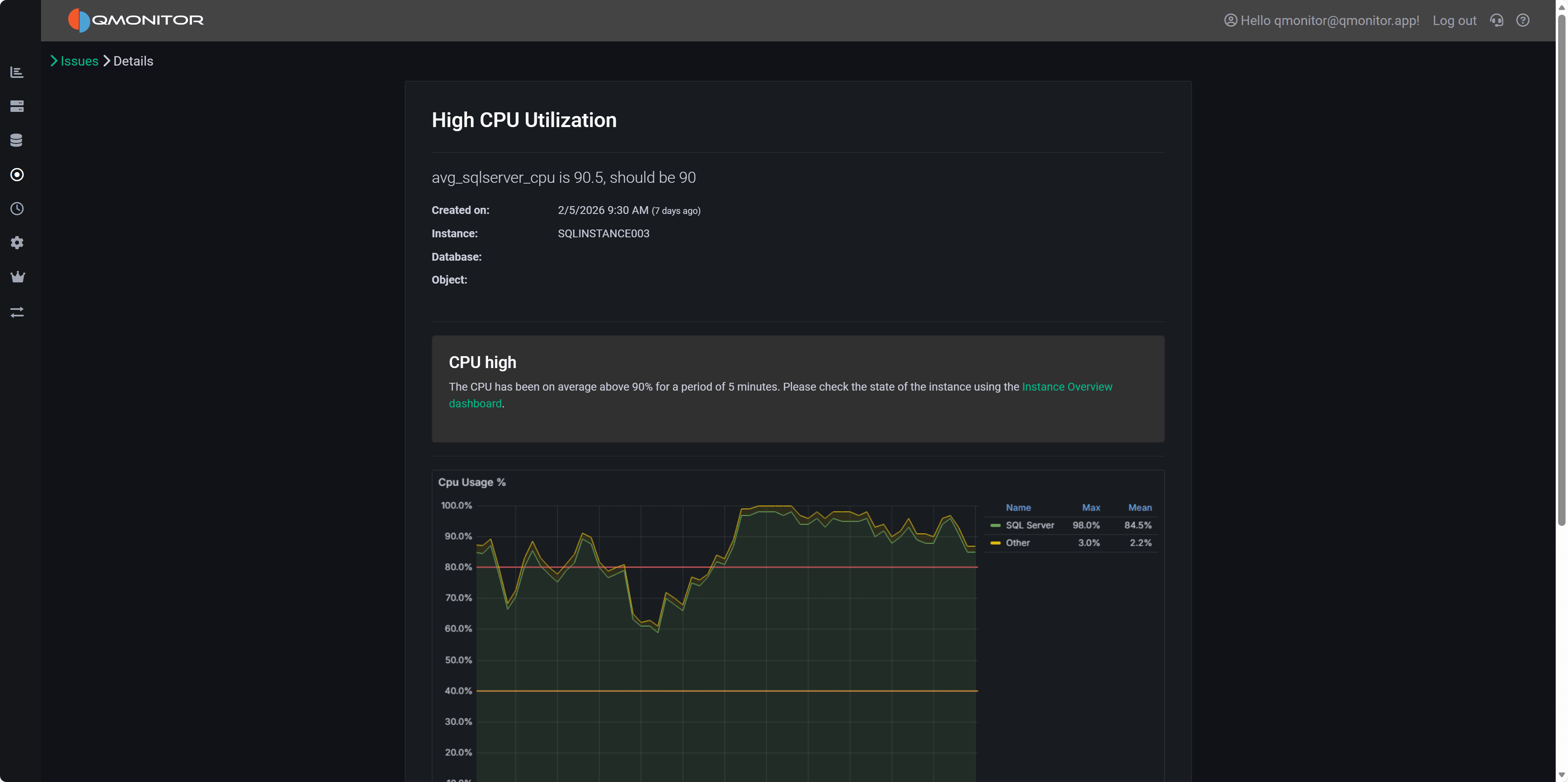

Issue

An Issue is an alert generated when a metric crosses a defined threshold or when QMonitor detects an abnormal condition.

Issue lifecycle:

- Open - The condition that causes the Issue is detected

- Active - The Issue remains active while the condition persists

- Closed - The condition returns to normal

Notifications:

- QMonitor can send notifications when Issues are triggered

- Notification methods include email, Teams, Slack, Telegram, or other integrations (see the Notifications section)

- Only organization Owners can configure Issue thresholds and notification settings

Here is an example of an Issue triggered by high CPU usage:

3 - Getting Started

Set up your first SQL Server instance in QMonitor

This guide will walk you through the essential steps to start monitoring your first SQL Server instance with QMonitor.

Before You Begin

What you’ll need:

- A SQL Server instance you want to monitor (SQL Server 2008 or later)

- Access to create a login on the SQL Server instance

- A modern web browser

Time to complete: About 15 minutes

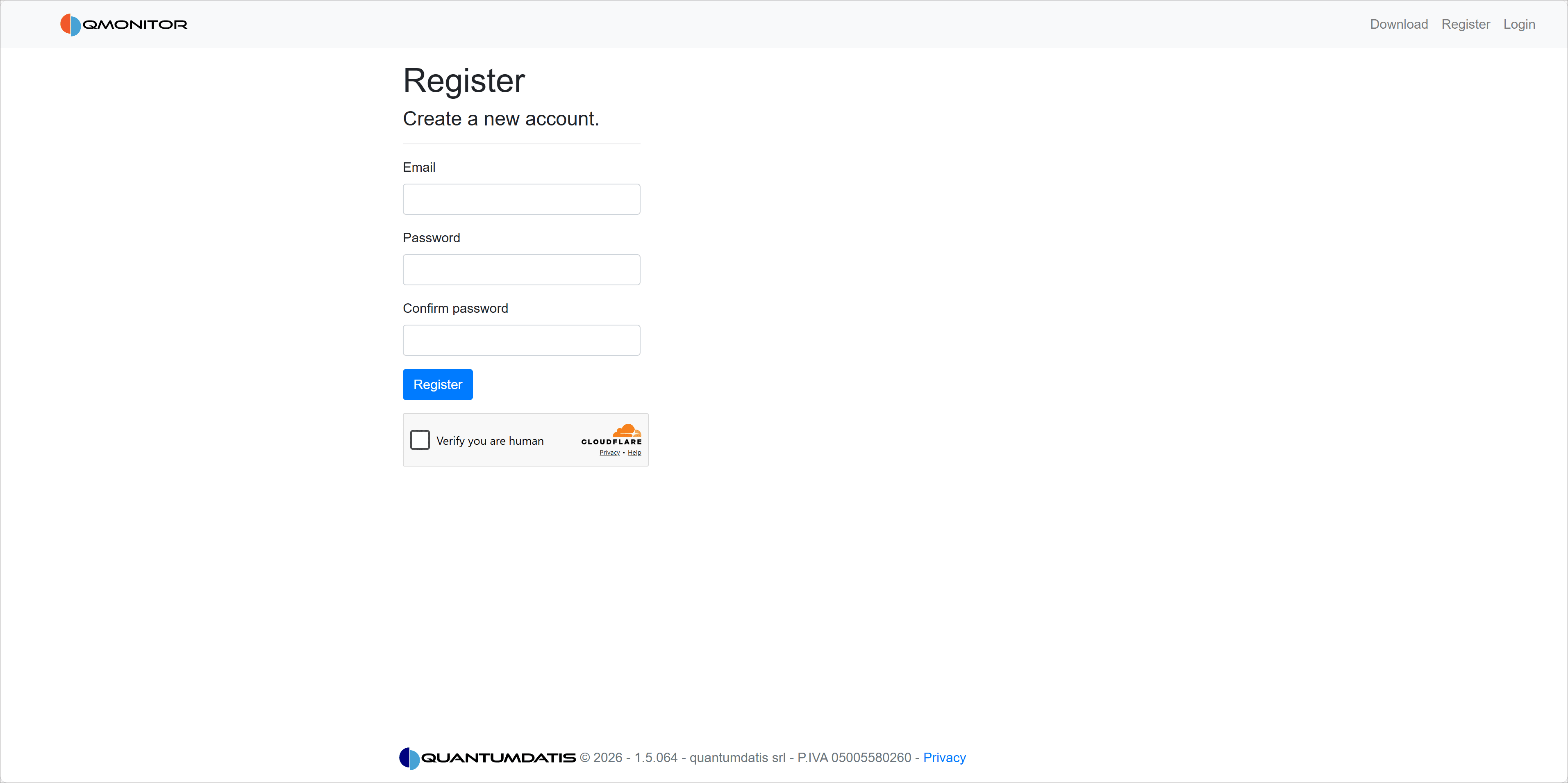

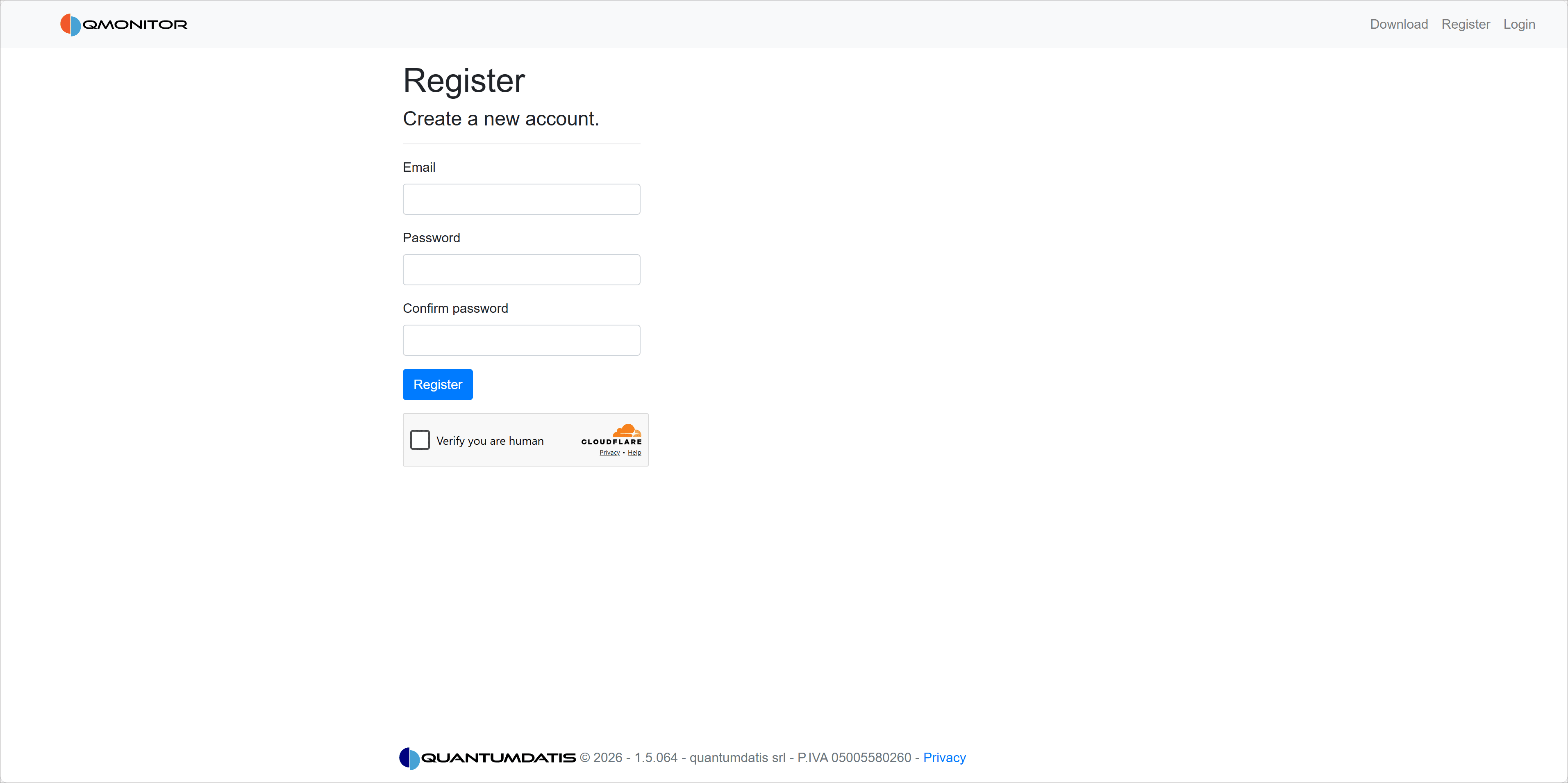

Step 1: Create Your Account

First, you need to register for a QMonitor account:

- Go to https://portal.qmonitor.app/Identity/Account/Register

- Enter your email and create a password (minimum 20 characters)

- Complete the captcha verification

- Check your email for the confirmation link

Once you verify your email, you can log in to QMonitor.

Need more details? See the Sign Up guide for step-by-step instructions with screenshots.

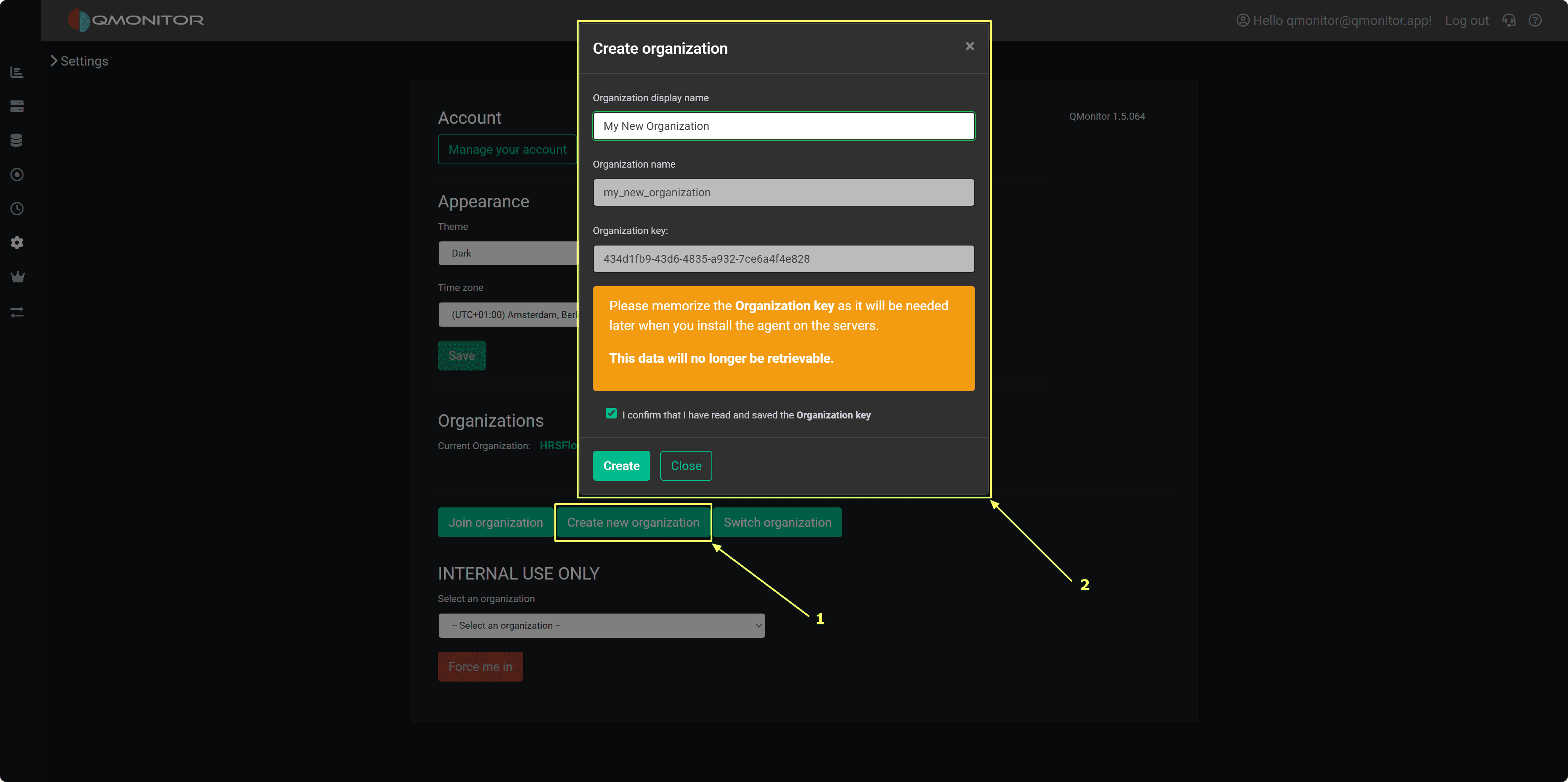

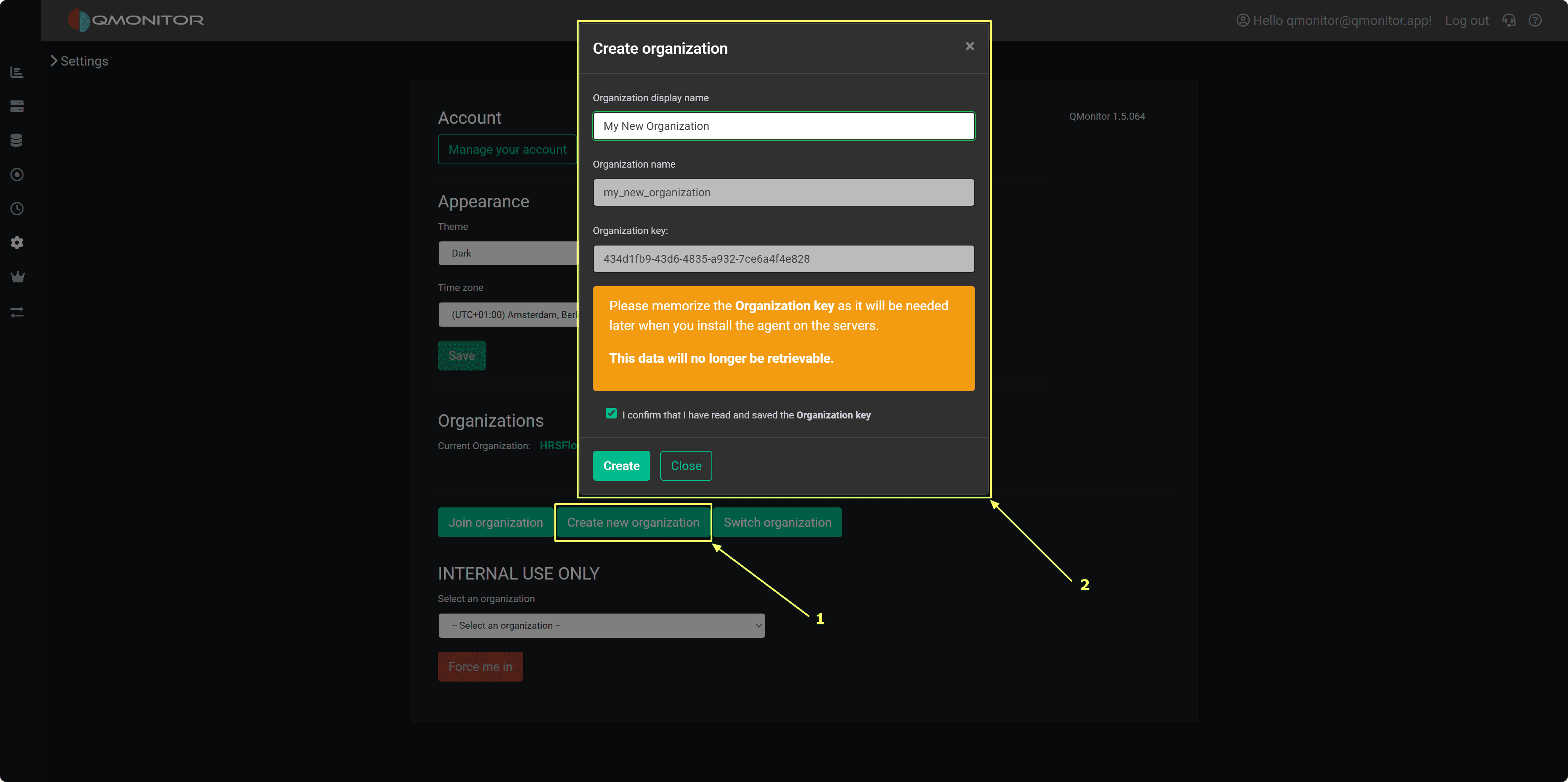

Step 2: Set Up Your Organization

After your first login, QMonitor will guide you through creating your organization:

- Enter your organization name

- Note down your organization name and ID (you’ll need these for agent configuration)

- Configure basic settings

- Invite team members (optional)

Your organization is the workspace where all your monitored instances and team members will be organized.

Need help? Check out Set Up Organization for detailed instructions.

Step 3: Install the QMonitor Agent

The QMonitor agent collects metrics from your SQL Server instances and sends them to the QMonitor platform:

- Download the agent installer from the Downloads page

- Run the installer on a server with access to your SQL Server instances

- Configure the agent with your organization’’s connection details

- Start the agent service

The agent will start and display as “ok” on the Instances page.

Agent deployment options: You can install the agent on the same server as SQL Server or on a separate monitoring server. See the Agent documentation for installation options.

Step 4: Prepare Your SQL Server Instance

Before adding an instance to QMonitor, you need to configure it with the required permissions:

- Download the setup script from the Instances page in QMonitor

- Open the script in SQL Server Management Studio

- Configure these parameters at the top of the script:

- @LoginName: The login name for the QMonitor agent

- @Password: Password for SQL Server authentication (leave empty for Windows authentication)

- @Sysadmin: Set to ‘‘Y’’ for full access, or ‘‘N’’ for minimum required permissions

- Execute the script on your SQL Server instance

The script will:

- Create the QMonitor login (if it doesn’’t exist)

- Grant necessary permissions

- Create the Extended Events session for monitoring

- Set up required database objects

What permissions does QMonitor need? The setup script can grant either sysadmin role membership (easiest) or minimum required permissions for specific DMVs and system tables. See Set Up Instance for details.

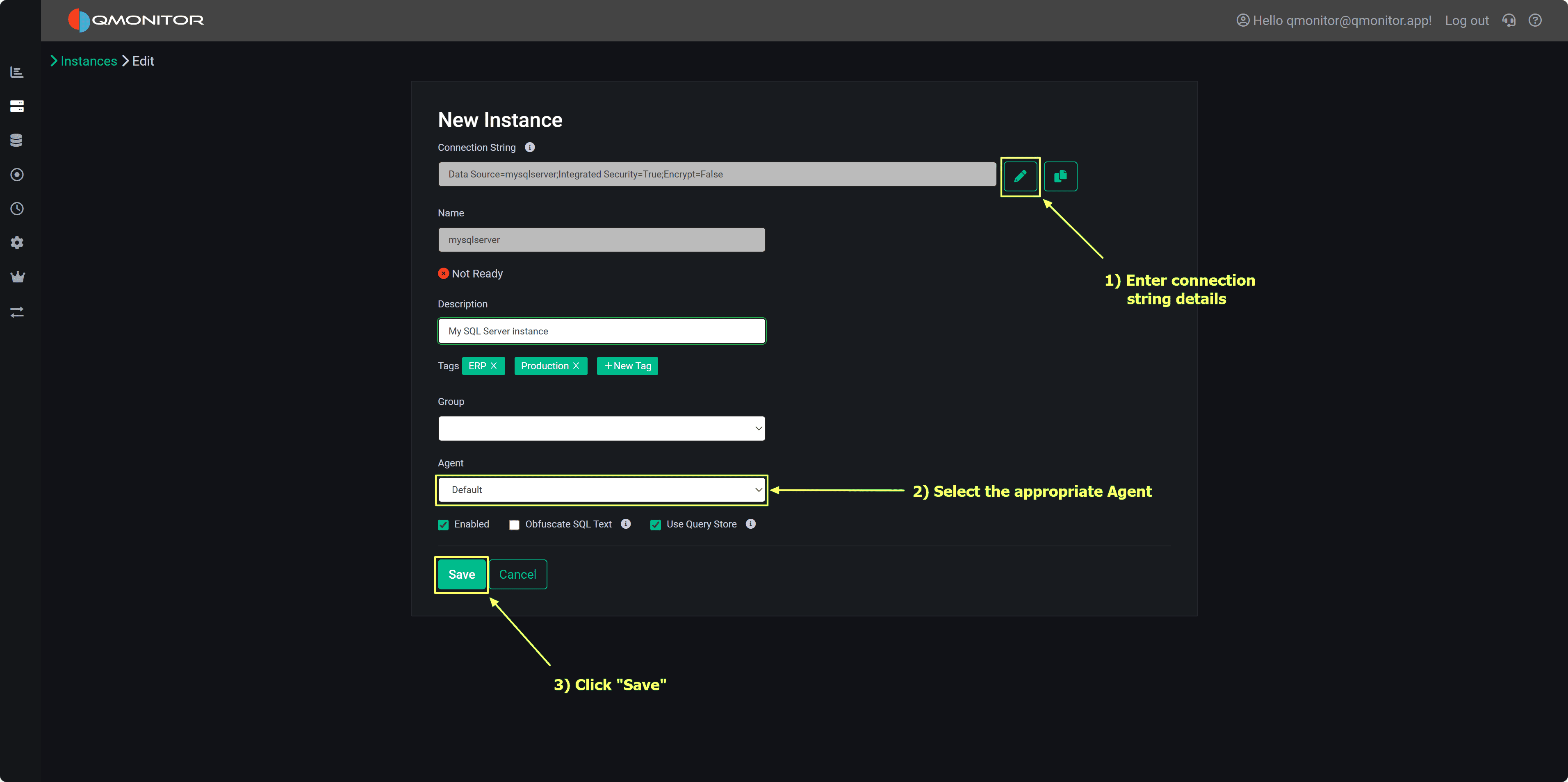

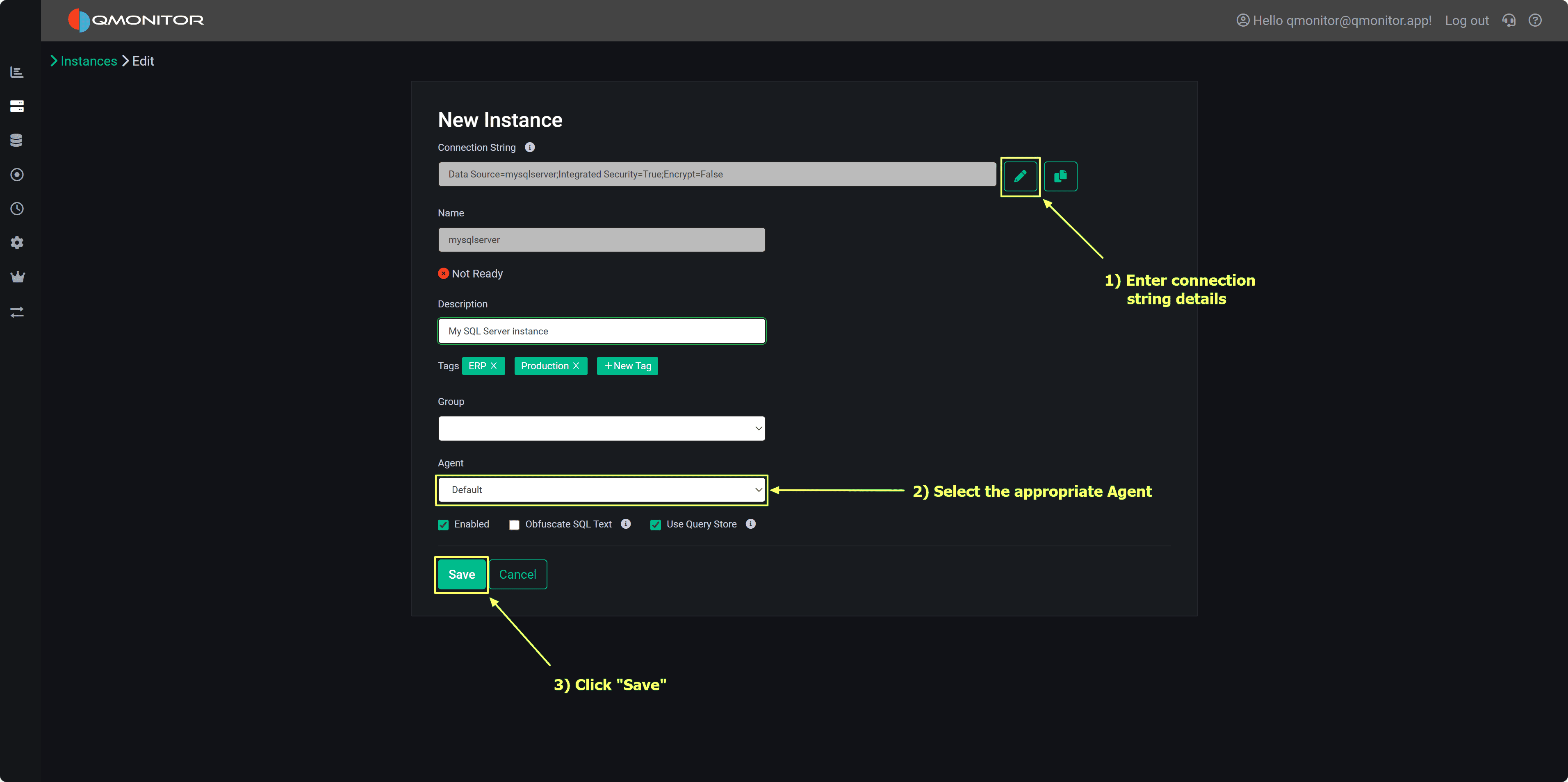

Step 5: Add Your Instance to QMonitor

Now you’’re ready to register your SQL Server instance:

- In QMonitor, navigate to Instances

- Click New Instance

- Enter the connection details:

- Instance name or address

- Authentication method (Windows or SQL Server)

- Login credentials (the ones you configured in Step 3)

- Click Save to verify

QMonitor will verify that all permissions are correctly configured before completing the registration.

Troubleshooting connection issues? See Register Instance for common problems and solutions.

Step 6: View Your First Metrics

After a few minutes, you’’ll start seeing data in your dashboards:

- Navigate to the Dashboard page

- Select your instance from the dropdown

- Explore the available metrics:

- CPU and memory usage

- Wait statistics

- Query performance

- Blocking and deadlocks

- SQL Server Agent jobs

What’’s Next?

Now that you have your first instance monitored, you can:

Need Help?

If you run into any issues:

4 - Features

Detailed descriptions of QMonitor’s features and capabilities.

This section provides detailed descriptions of QMonitor’s features and capabilities.

Each feature is designed to help you effectively monitor and manage your SQL Server instances.

Overview

QMonitor provides a comprehensive set of features for monitoring, analyzing, and maintaining

SQL Server instances across your entire estate, whether on-premises, in the cloud, or in hybrid environments.

Core Features

Monitoring & Dashboards

Visual monitoring through pre-built Grafana dashboards that provide real-time and historical insights

into your SQL Server health and performance.

- Performance Dashboards: Track CPU, memory, disk I/O, and other critical metrics

- Query Stats: Analyze workload characteristics and identify expensive queries

- Storage Analysis: Monitor database size, file growth, and space utilization

- Custom Views: Filter and focus on specific time ranges, instances, or databases

Explore Dashboards

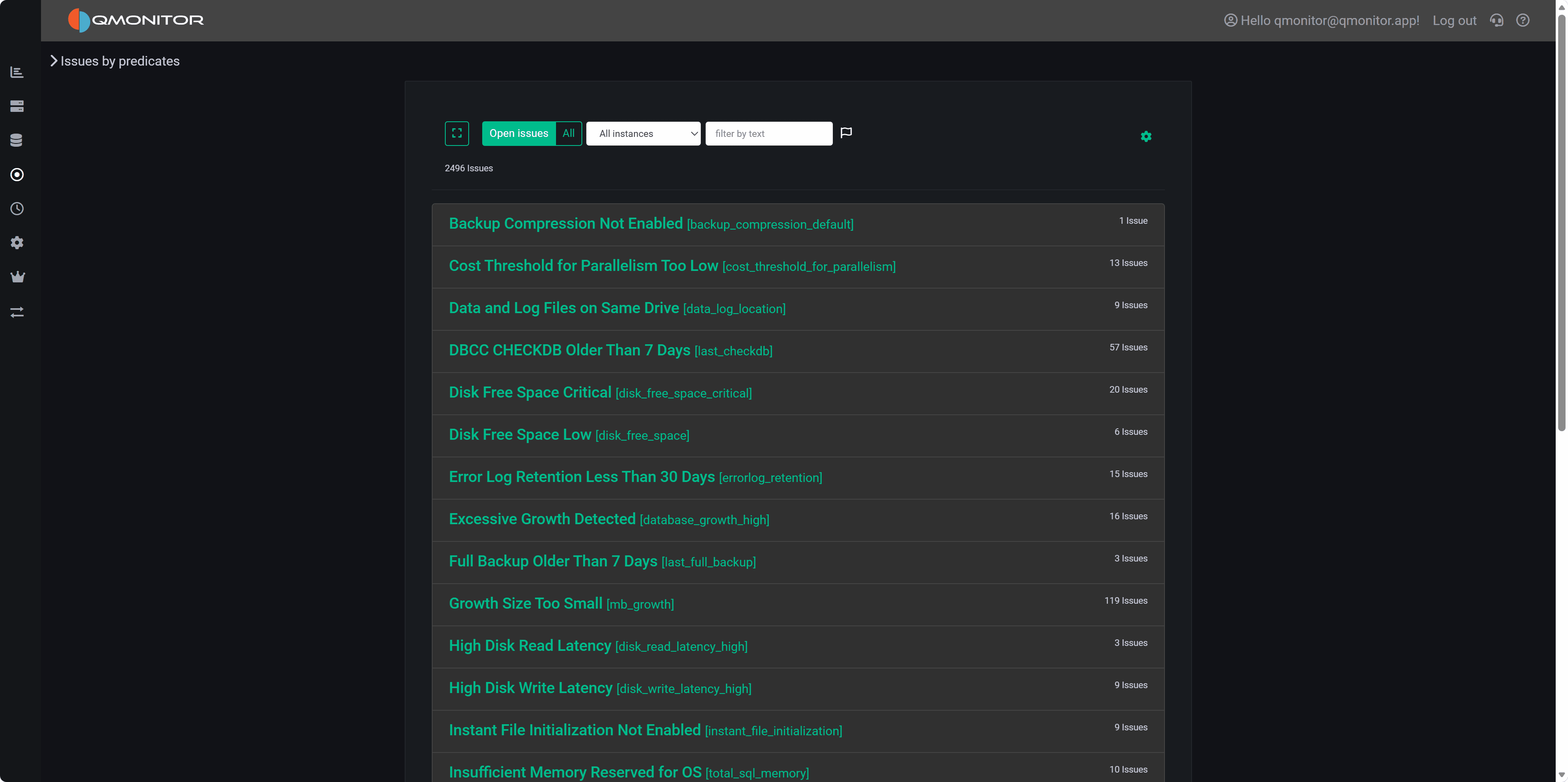

Issues & Alerts

Proactive monitoring that notifies you when your instances need attention.

- Issue Tracking: Centralized view of all detected problems

- Alert Configuration: Customize thresholds and notification rules

- Severity Levels: Prioritize critical, warning, and informational issues

- Historical Records: Track issue resolution and patterns over time

Explore Issues

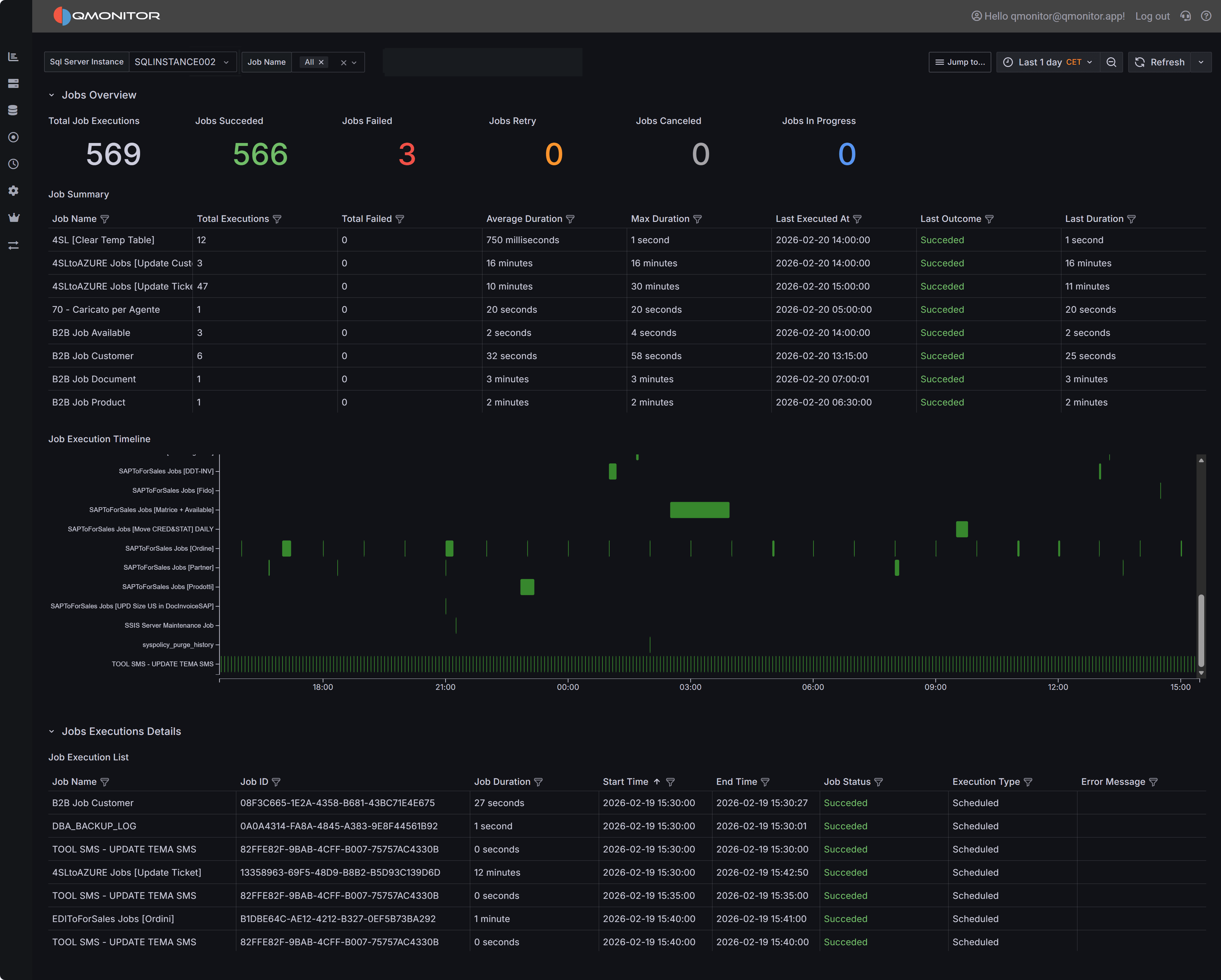

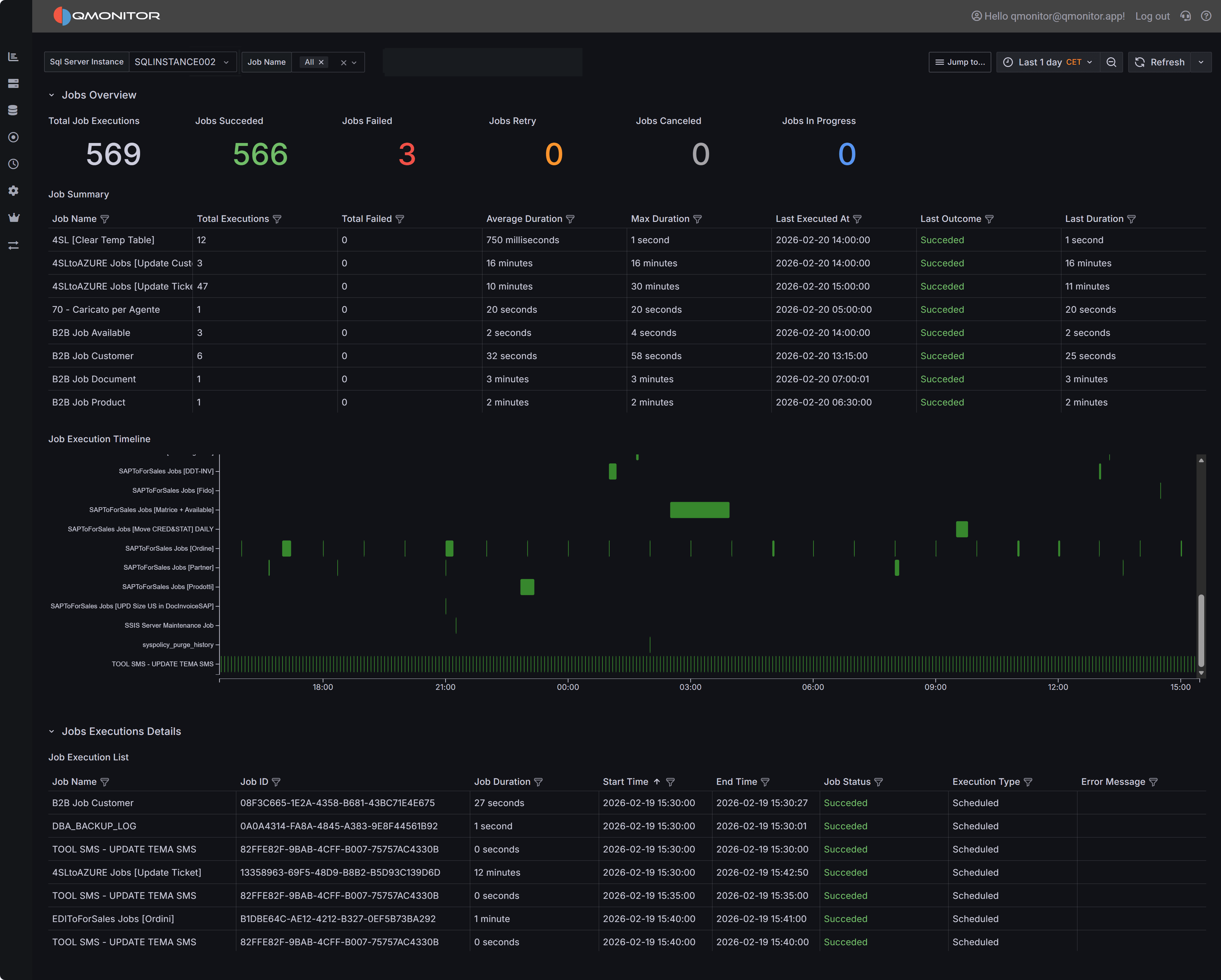

Jobs & Maintenance

Automated maintenance tasks to keep your SQL Server instances healthy and compliant.

- Backup Scheduling: Configure and monitor backup jobs

- Integrity Checks: Schedule DBCC checks and consistency validation

- Index Maintenance: Automate index rebuild and reorganization

- Job History: Track execution status and troubleshoot failures

Jobs

Inventory Management

Centralized repository of information about your SQL Server estate.

- Instance Catalog: Maintain details about all monitored servers

- Database Tracking: Document ownership, purpose, and metadata

- Configuration Management: Store connection strings, credentials, and settings

- Documentation: Link to runbooks, contacts, and operational notes

Inventory

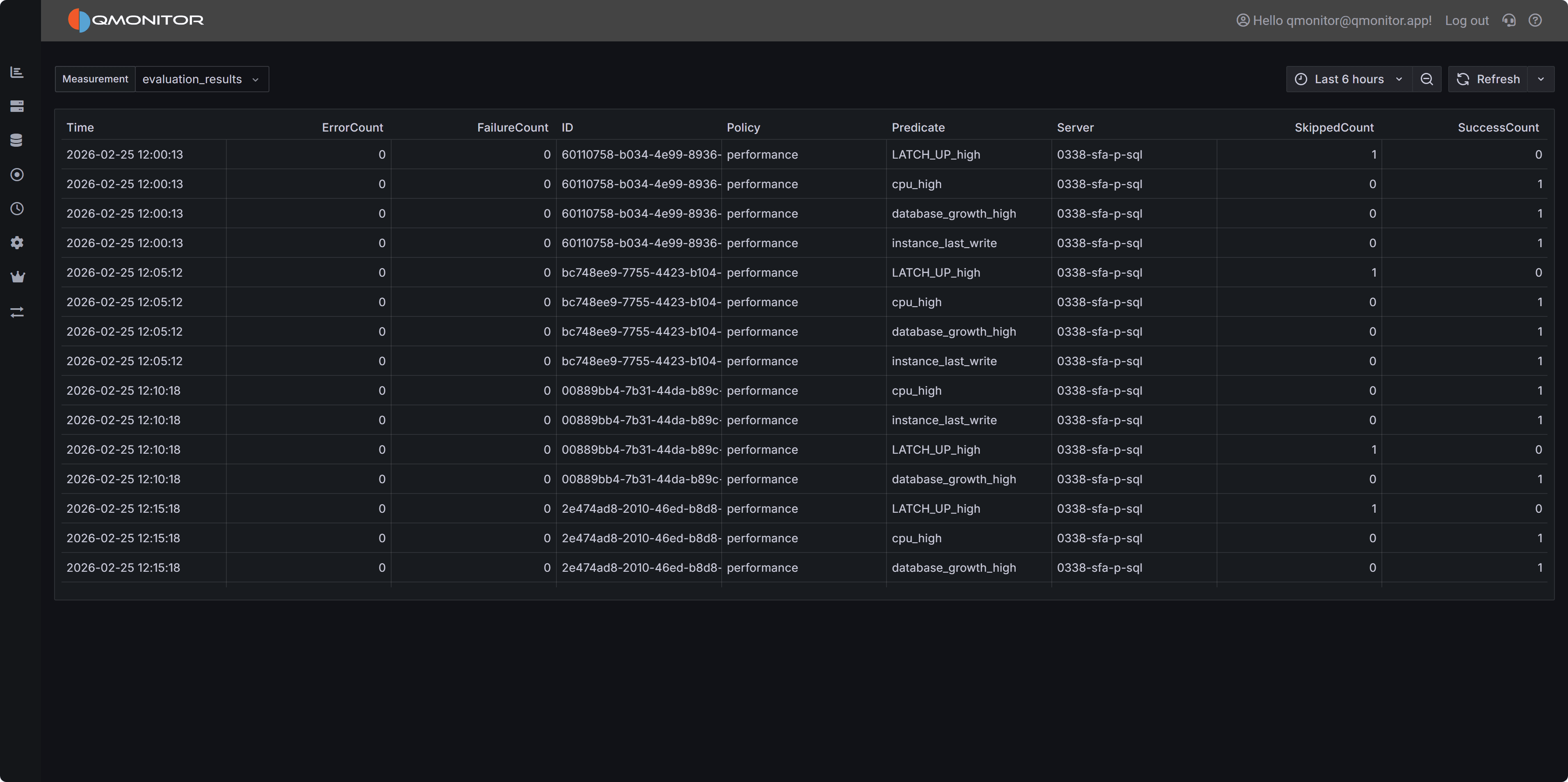

Compliance Checks

Validate your SQL Server instances against industry best practices and organizational standards.

- Best Practice Rules: Automated checks based on Microsoft and community guidelines

- Custom Policies: Define organization-specific compliance requirements

- Audit Reports: Generate compliance reports for review and remediation

- Remediation Guidance: Get recommendations for resolving non-compliant settings

Settings & Configuration

Centralized configuration for all aspects of QMonitor.

- Instance Registration: Add and configure SQL Server instances for monitoring

- Data Collection: Control what metrics and events are captured

- User Management: Define roles and permissions

- Integration Settings: Configure external systems and notifications

Getting Started

New to QMonitor? Start with these resources:

- Getting Started Guide - Set up your first monitored instance

- Dashboards Overview - Learn to navigate and interpret dashboards

- Common Tasks - Follow step-by-step guides for common operations

Architecture

QMonitor is a 100% cloud-based solution with the following characteristics:

- No On-Premises Infrastructure: No servers or databases to install and maintain

- Lightweight Agent: Minimal footprint on monitored SQL Server instances

- Secure Communication: Encrypted data transmission and storage

- Multi-Tenant: Isolates customer data with role-based access control

- Scalable: Monitors from a single instance to hundreds across your enterprise

Support

Need help? Check out the Troubleshooting section or

contact support from the Support page.

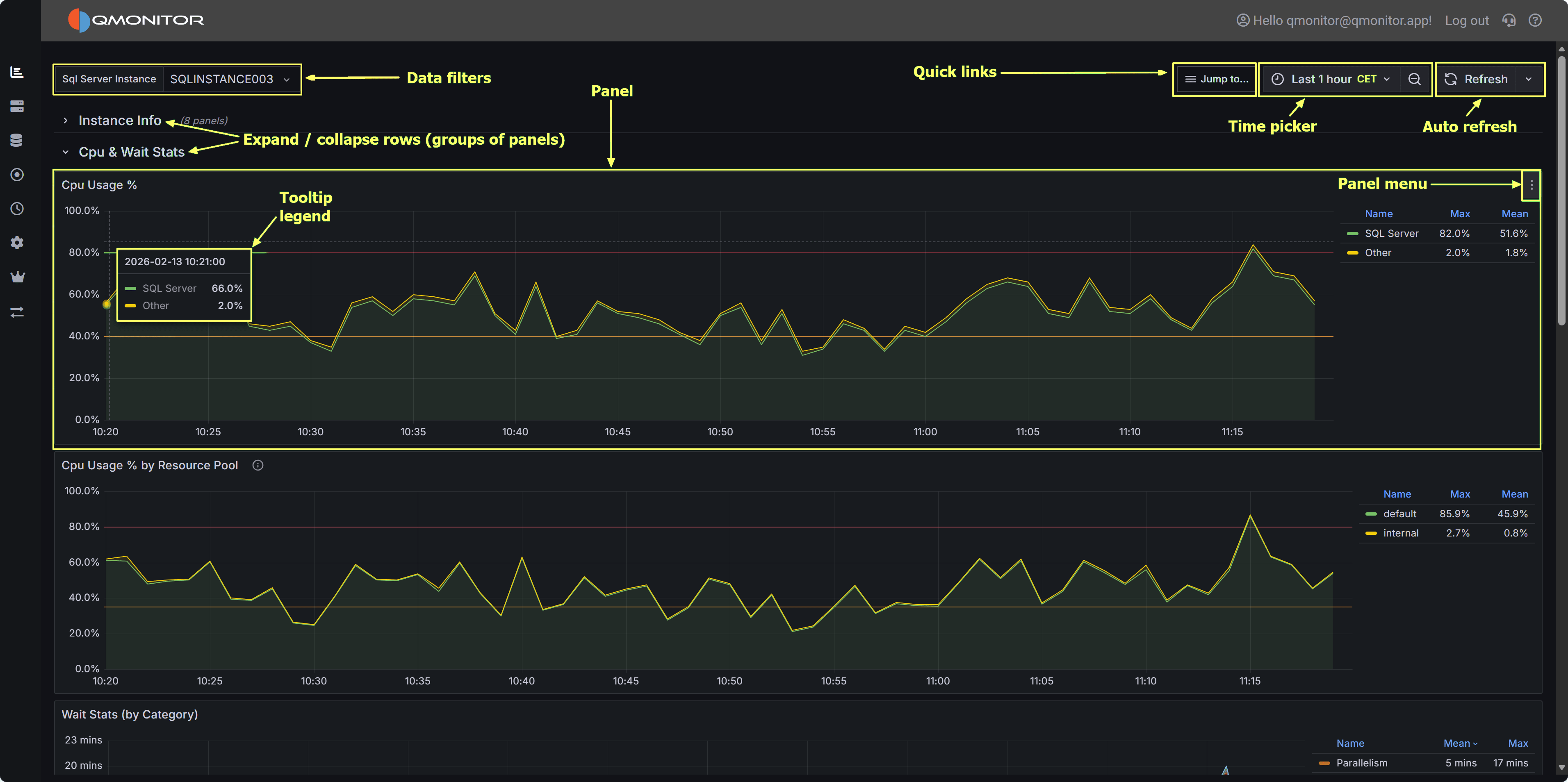

4.1 - Dashboards

Use dashboards to monitor instance health metrics

Using the taskbar on the left, you can click on the topmost button to open a list of the available dashboards,

that you can use to monitor your SQL Server instances.

QMonitor uses Grafana dashboards: Grafana is a powerful data analytics platform that provides

advanced dashboarding capabilities and represents a de-facto standard for monitoring and observability applications.

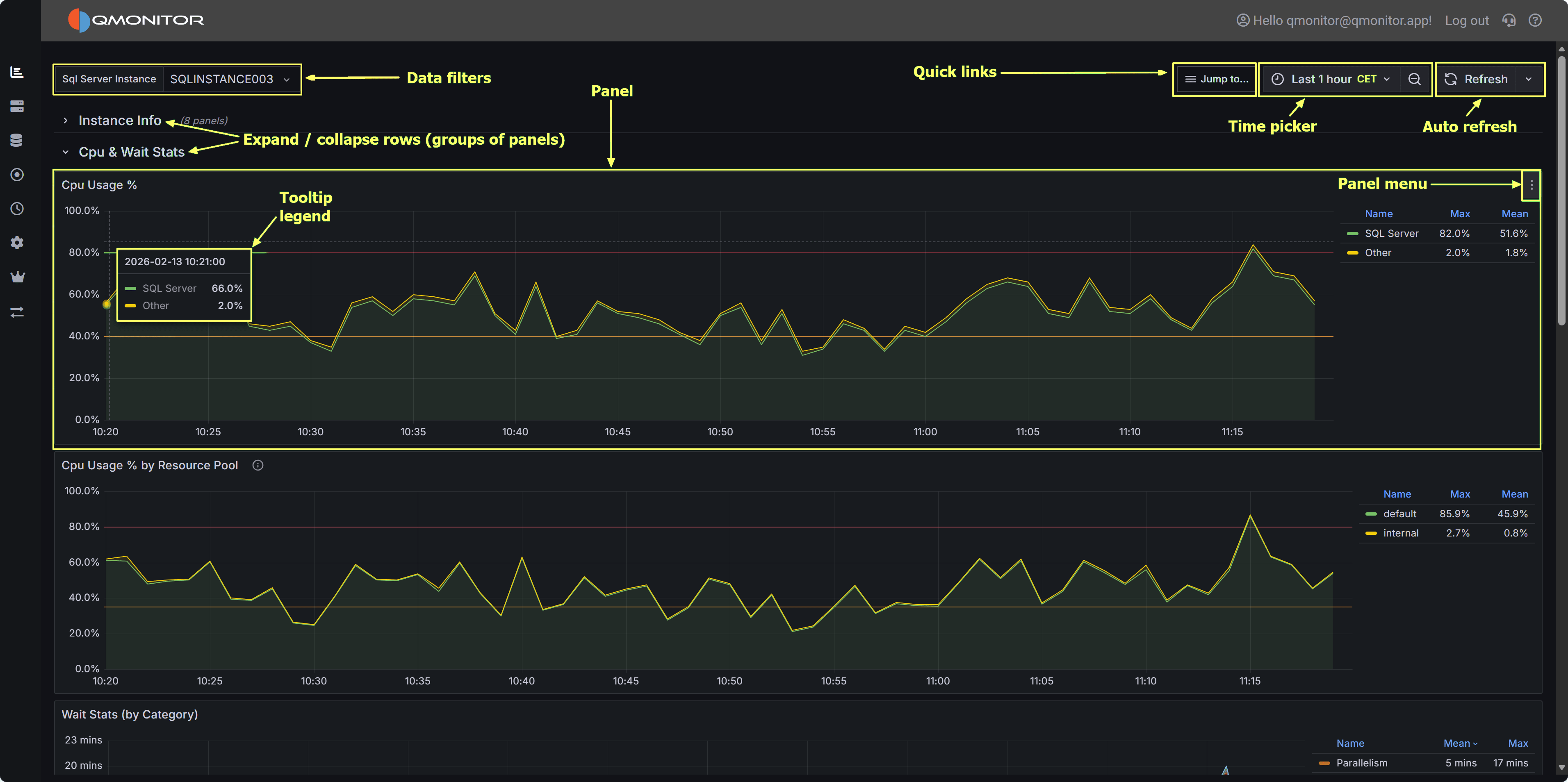

Navigating Dashboards

QMonitor dashboard showing multiple metrics panels with time picker and filters

QMonitor dashboard showing multiple metrics panels with time picker and filters

Time Range Selection

All the data in the dashboards can be filtered using the time picker on the top right corner: it offers predefined

quick time ranges, like “Last 5 minutes”, “Last 1 hour”, “Last 7 days” and so on. These are usually the easiest way

to select the time range.

If you want, you can also use absolute time ranges, that you can select with the calendar on the left side of the time picker popup.

You can use the calendar buttons on the From and To fields to pick a date or you can enter the time range manually.

Panel Interactions

- Zoom: Click and drag on any graph to zoom into a specific time range

- Tooltips: Hover over data points to see exact values and timestamps

- Full Screen: Click the panel menu (⋮) and select “View” to expand a panel to full screen (press Escape to exit)

- Panel Menu: Click the three dots (⋮) in the top-right corner of any panel for additional options

Legend Controls

- Isolate a series: Click a legend item to show only that metric

- Toggle visibility: Ctrl+click to show/hide multiple series

- Sort: Some legends allow sorting by current value or name

Refresh and Auto-Update

- Use the refresh button (🔄) in the top-right to manually reload data

- Dashboards auto-refresh at intervals (typically every 30 seconds or 1 minute)

- The refresh interval is shown next to the refresh button

Instance and Database Filters

At the top of most dashboards, you’ll find dropdown filters to narrow your view:

- Instance: Select one or more SQL Server instances

- Database: Filter by specific databases (where applicable)

- Click “All” to select all options, or choose individual items

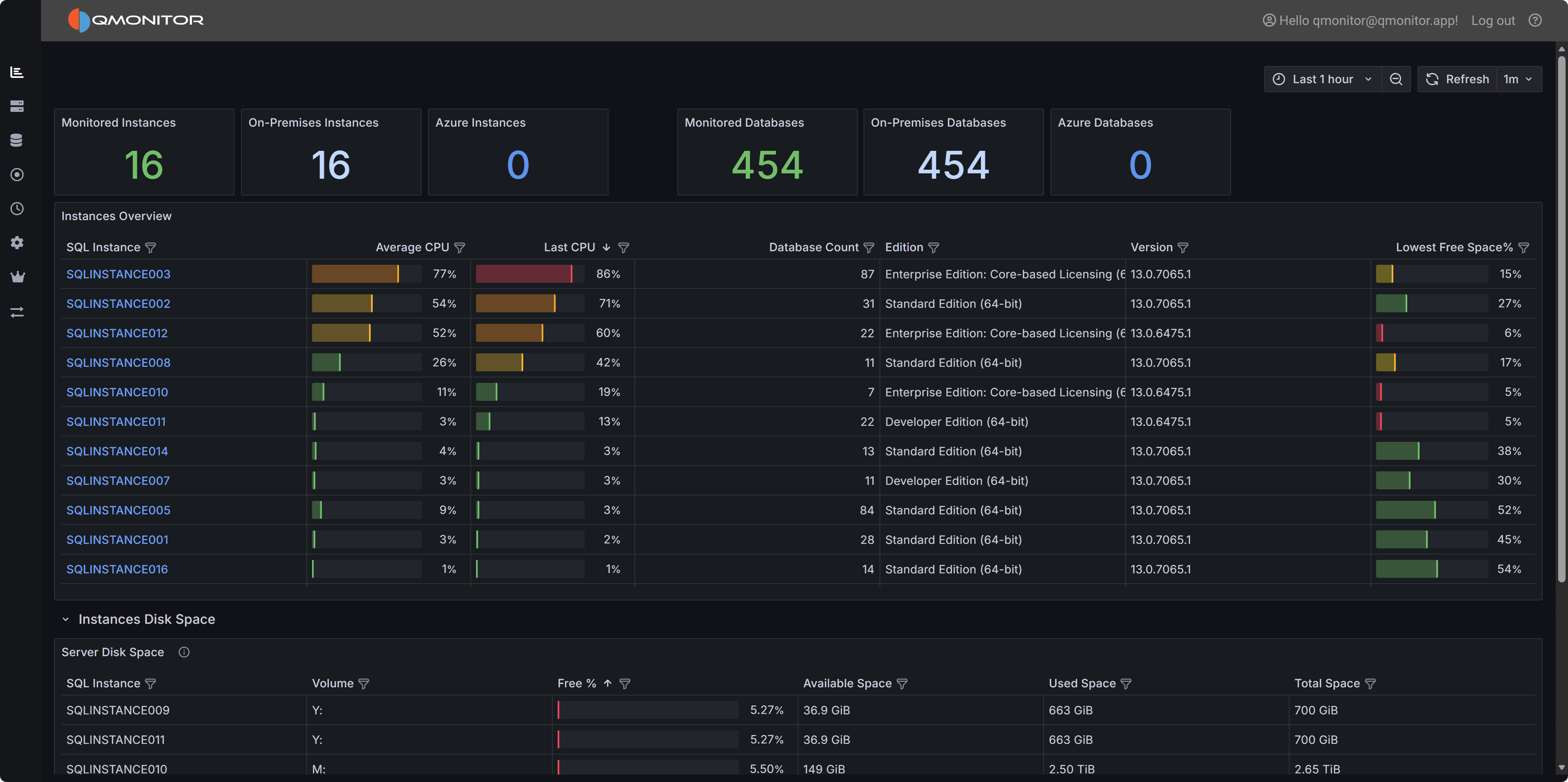

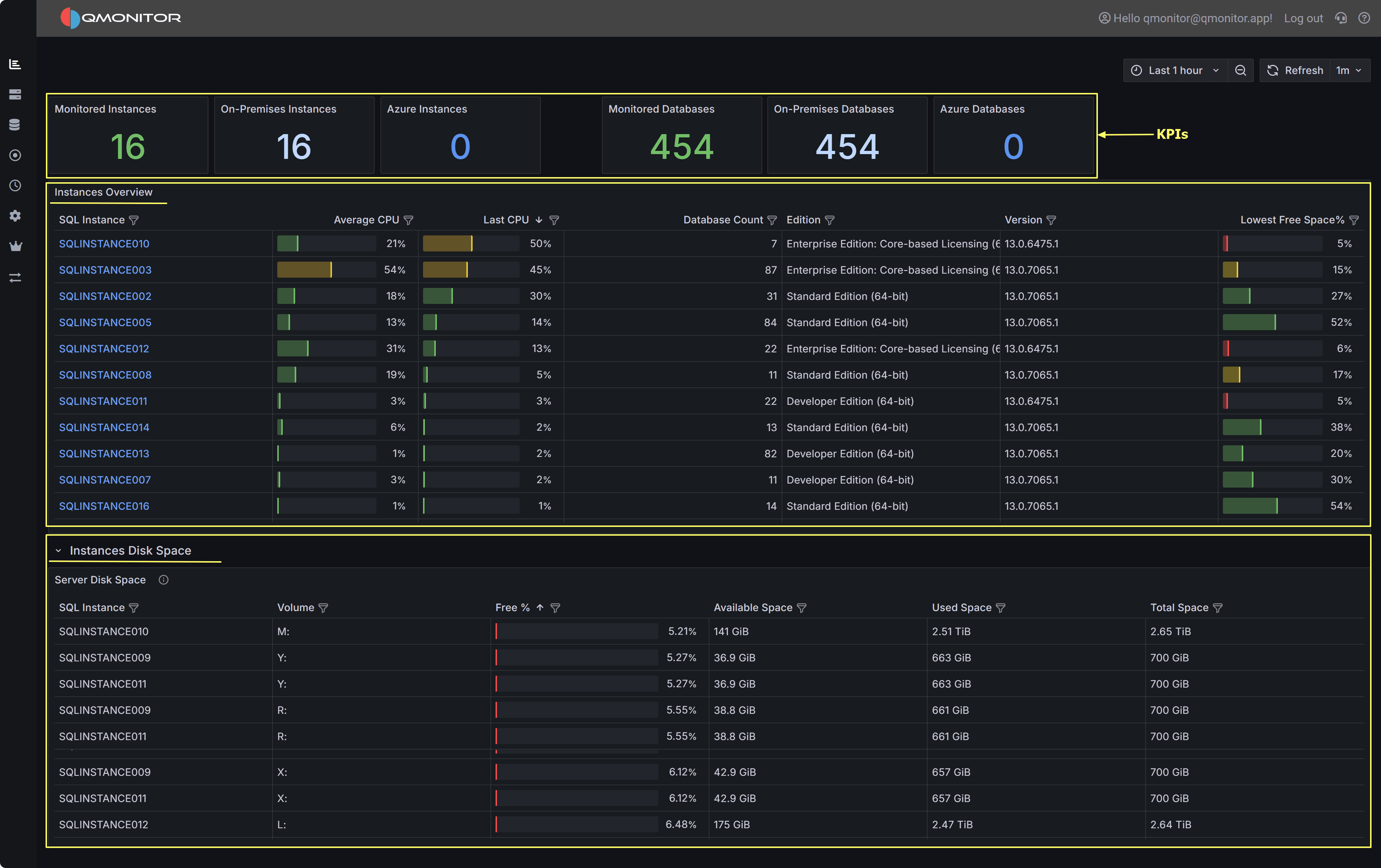

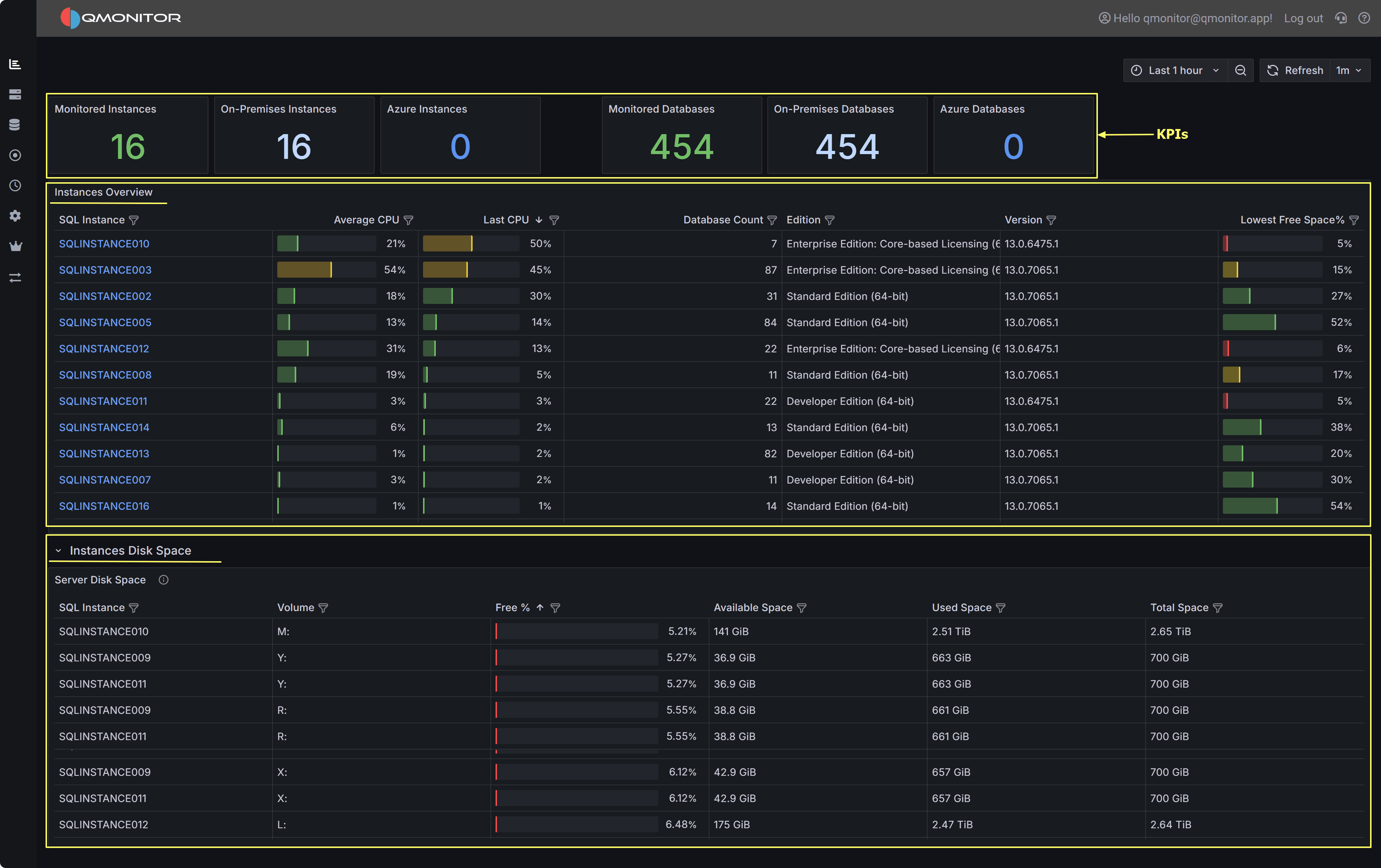

4.1.1 - Global Overview

An overall view of your SQL Server estate

The Global Overview dashboard is your entry point to the SQL Server infrastructure: it provides an at-a-glance view of all the instances,

along with useful performance metrics.

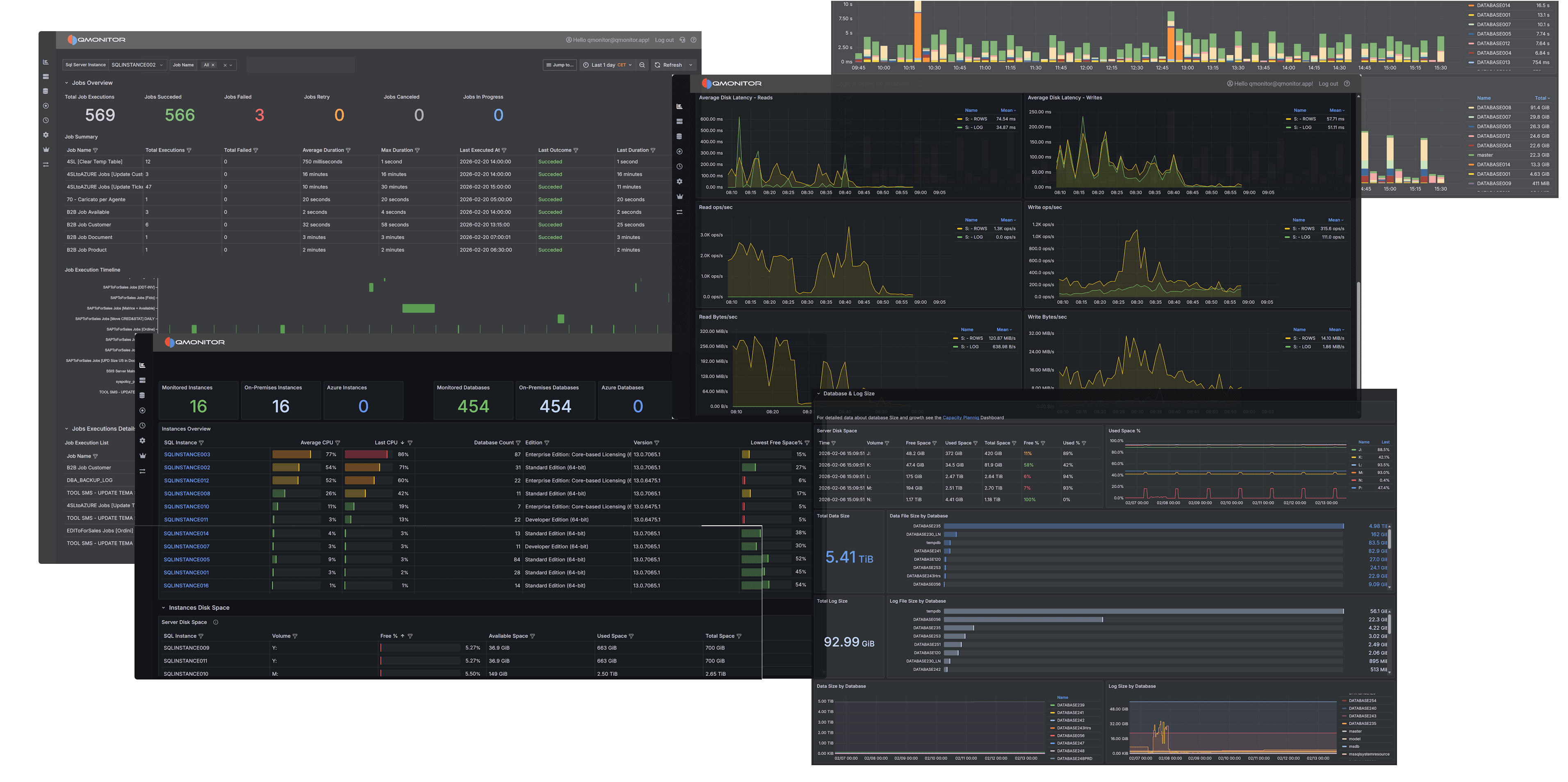

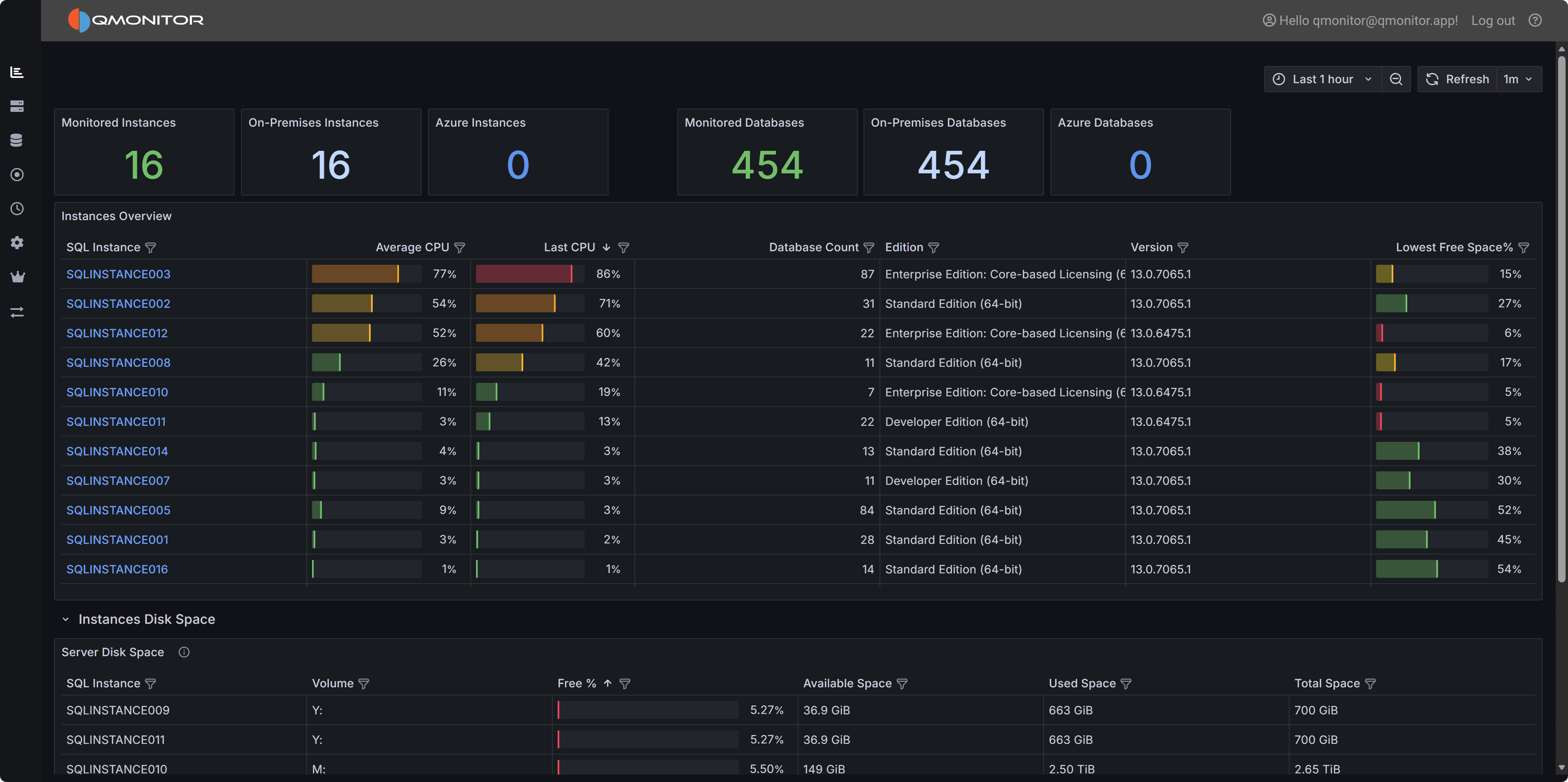

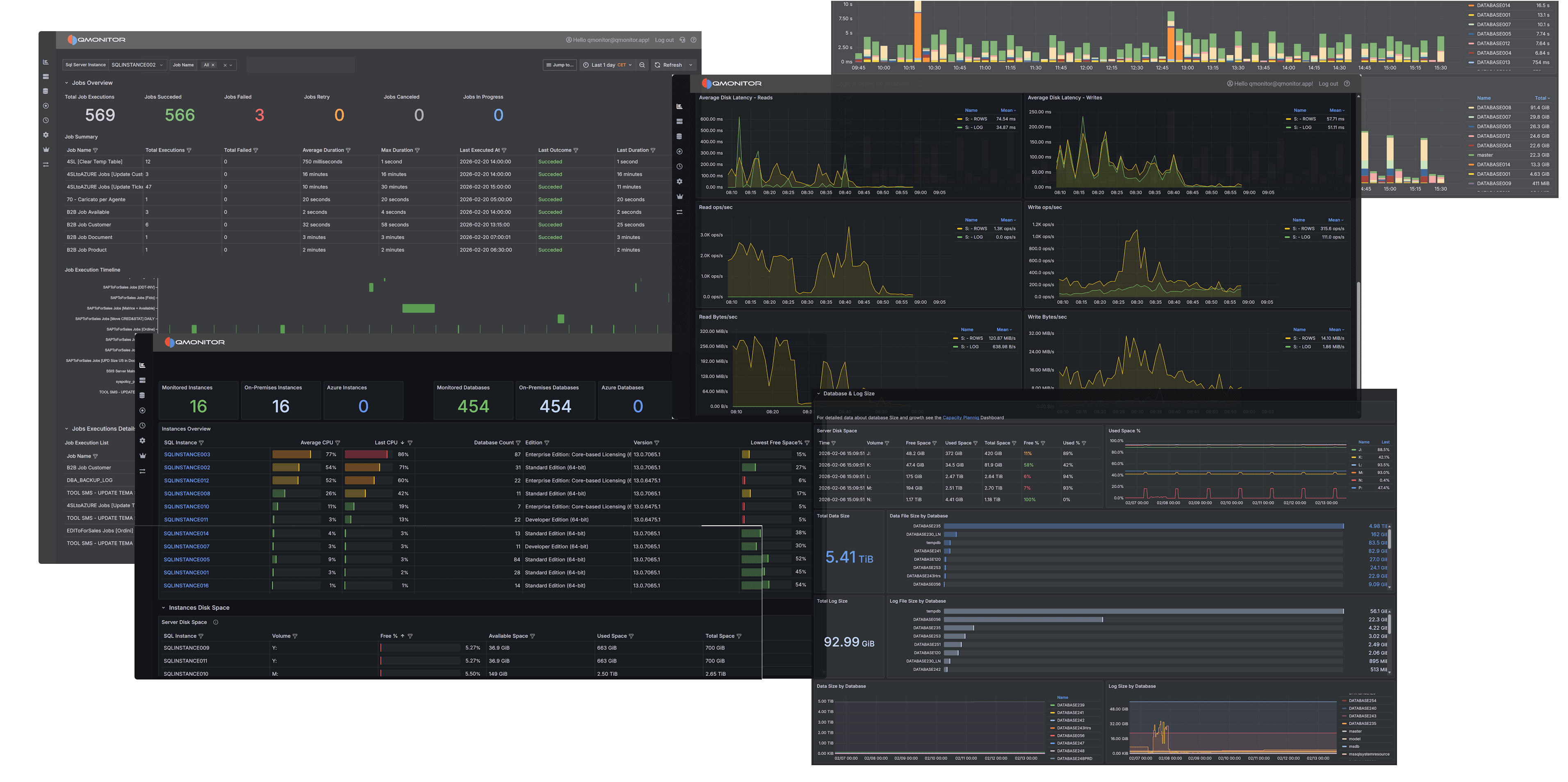

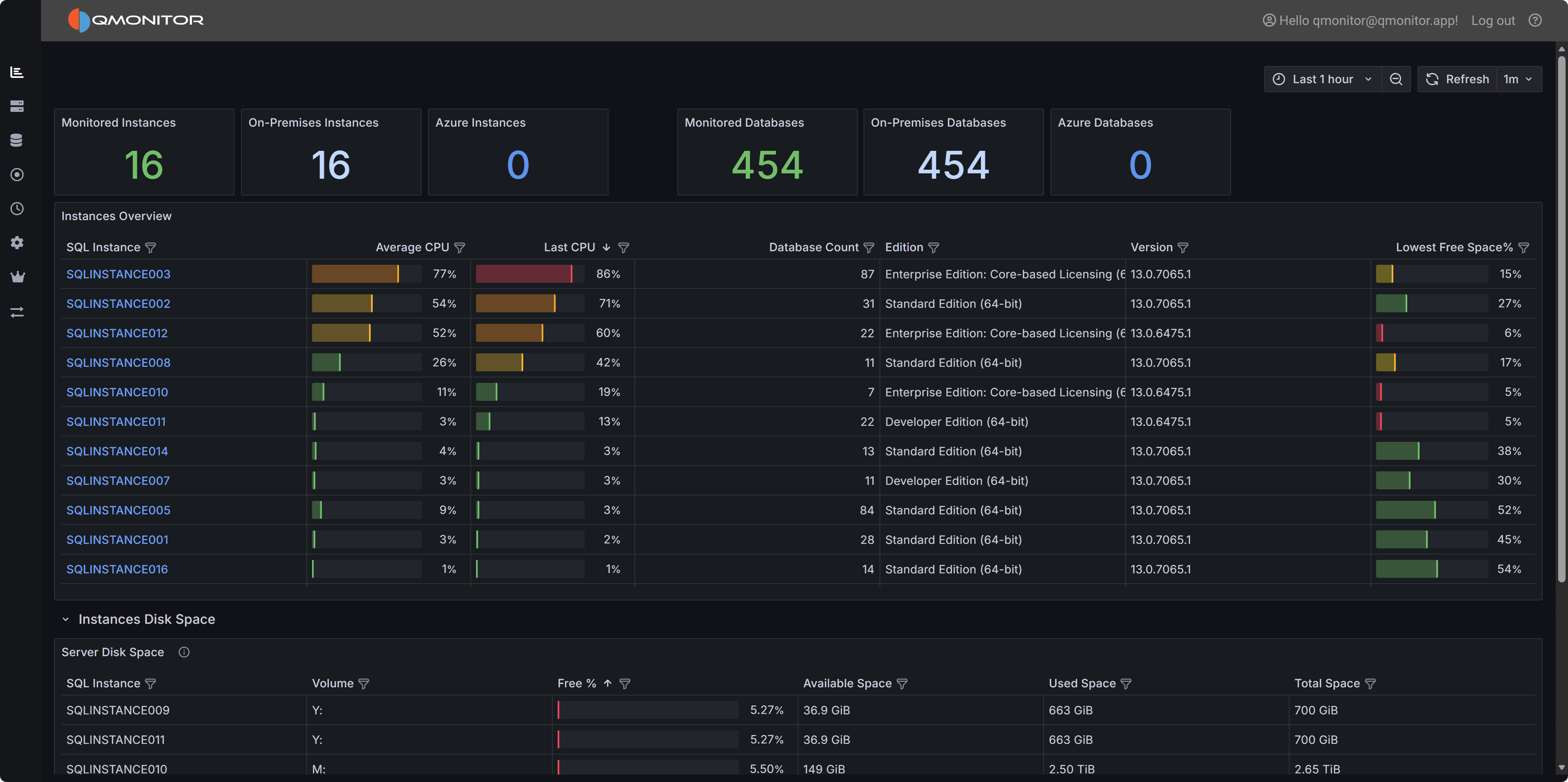

Global Overview dashboard showing instance KPIs, instances table, and disk space details

Global Overview dashboard showing instance KPIs, instances table, and disk space details

Dashboard Sections

Instance and Database Counts

At the top left of the dashboard, you have KPIs for the total number of monitored instances, divided between on-premises and Azure instances.

At the top right you have the same KPI for the total number of monitored databases, again divided between on-premises and Azure.

Instances Overview

The middle of the dashboard contains the Instances Overview table, with the following information:

- SQL Instance: The name of the instance. For on-premises SQL Servers, this corresponds to the name returned by @@SERVERNAME, except that

the backslash is replaced by a colon in named instances (you have SERVER:INSTANCE instead of SERVER\INSTANCE).

For Azure SQL Managed Instances and Azure SQL Databases, the name is the network name of the logical instance.

Click on the instance name to open the Instance Overview dashboard for that instance. - Database: for Azure SQL Databases, the name of the database

- Elastic Pool: for Azure SQL Databases, the name of the elastic pool if in use, <No Pool> otherwise.

- Database Count: the number of databases in the instance

- Edition: the edition of SQL Server (Enterprise, Standard, Developer, Express). For Azure SQL Databases it is “Azure SQL Database”.

For Azure SQL Managed Instances, it can be GeneralPurpose or BusinessCritical.

- Version: The version of SQL Server. For Azure SQL Database it contains the service tier (Basic, Standard, Premium…)

- Last CPU: the last value captured for CPU usage in the selected time interval

- Average CPU: the average CPU usage in the time interval

- Lowest disk space %: the percent of free space left in the disk that has the least space available. For Azure SQL Databases and

Azure SQL Managed Instances the percentage is calculated on the maximum space available for the current tier.

Instances Disk Space

At the bottom of the dashboard, you have the detail of the disk space available on all instances. The table contains the following information:

- SQL Instance: the name of the instance, Azure SQL Database or Azure SQL Managed Instance.

- Database: for Azure SQL Databases, the name of the database

- Elastic Pool: for Azure SQL Databases, the name of the elastic pool if in use, <No Pool> otherwise.

- Volume: drive letter or mount point of the volume

- Free %: Percentage of free space in the volume

- Available Space: Available space in the volume. The unit measure is included in the value.

- Used Space: Used space in the volume

- Total Space: Size of the Volume (Used space + Available space)

4.1.2 - Instance Overview

Detailed information about the performance of a SQL Server instance

This dashboard is one of the main sources of information to control the health and performance of a SQL Server instance.

It contains the main performance metrics that describe the behavior of the instance over time.

Access this dashboard by clicking on an instance name from the Global Overview dashboard

or by selecting it from the Instances dropdown at the top of any dashboard. Use the time picker to analyze

historical performance or monitor real-time metrics. Each section can be expanded or collapsed to focus on

specific areas of interest.

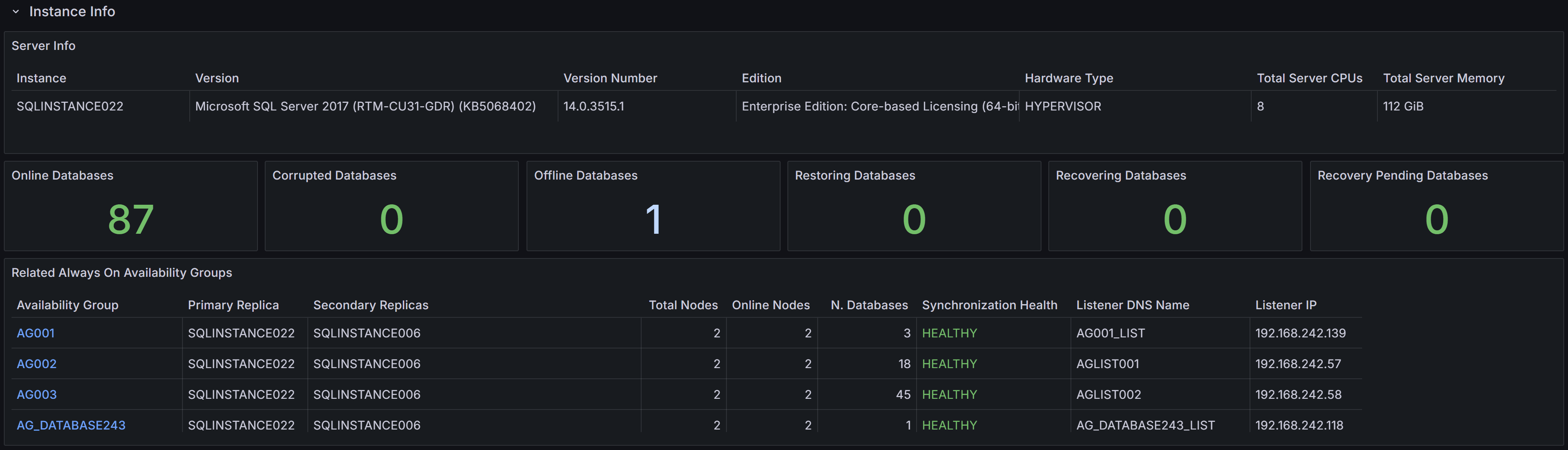

Dashboard Sections

The dashboard is divided into multiple sections, each one focused on a specific aspect of the instance performance.

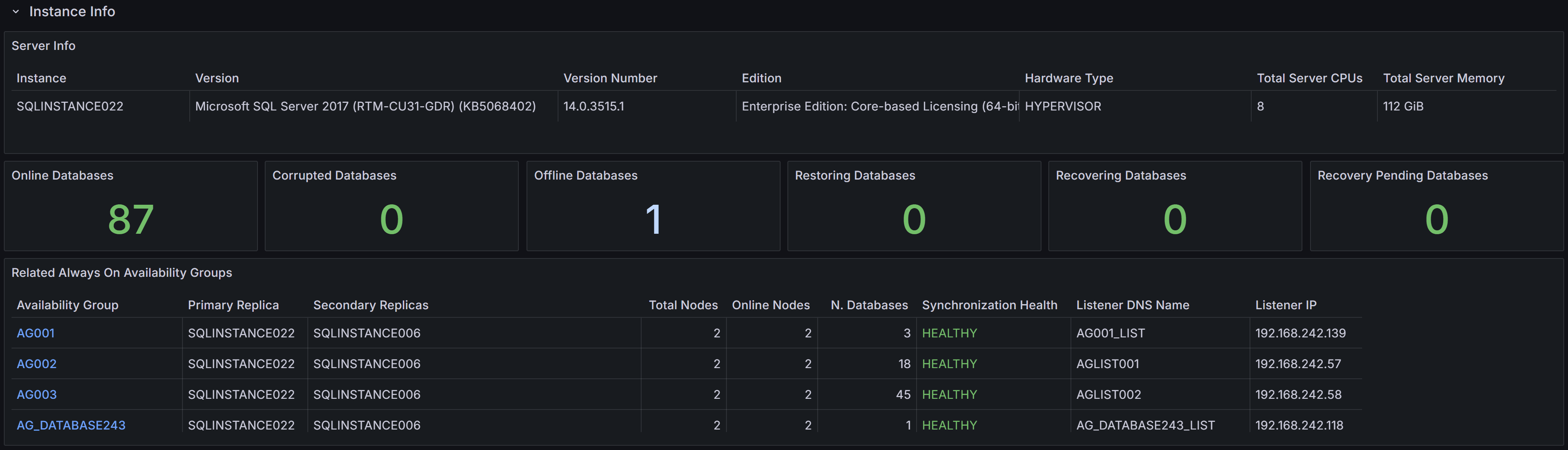

Instance Info

Instance properties, database states, and Always On Availability Groups summary

Instance properties, database states, and Always On Availability Groups summary

At the top you can find the Instance Info section, where the properties of the instance are displayed. You have information

about the name, version, edition of the instance, along with hardware resources available (Total Server CPUs and Total Server Memory).

You also have KPIs for the number of databases, with the counts for different states (online, corrupt, offline, restoring, recovering and recovery pending).

At the bottom of the section, you have a summary of the state of any configured Always On Availability Groups.

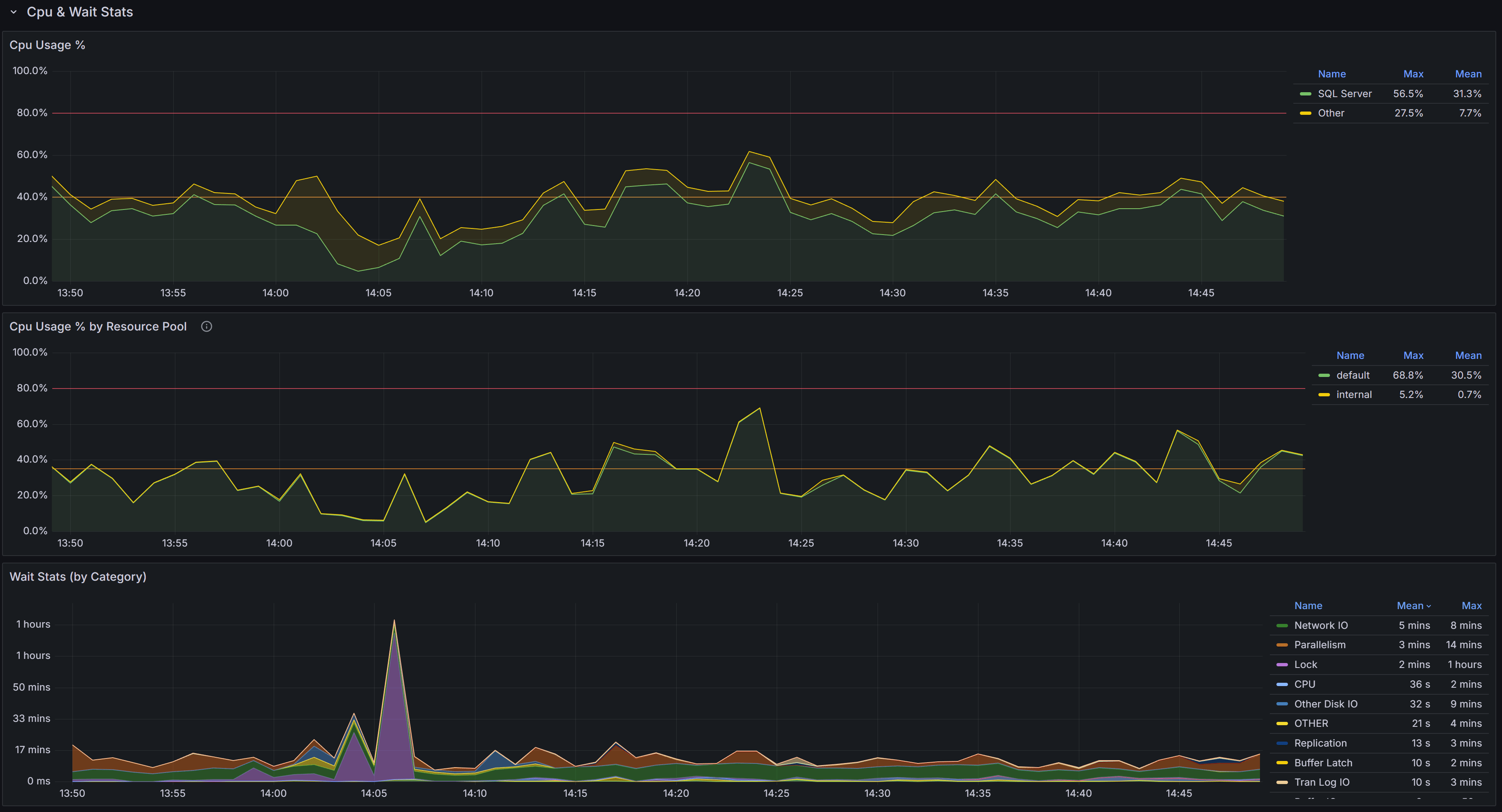

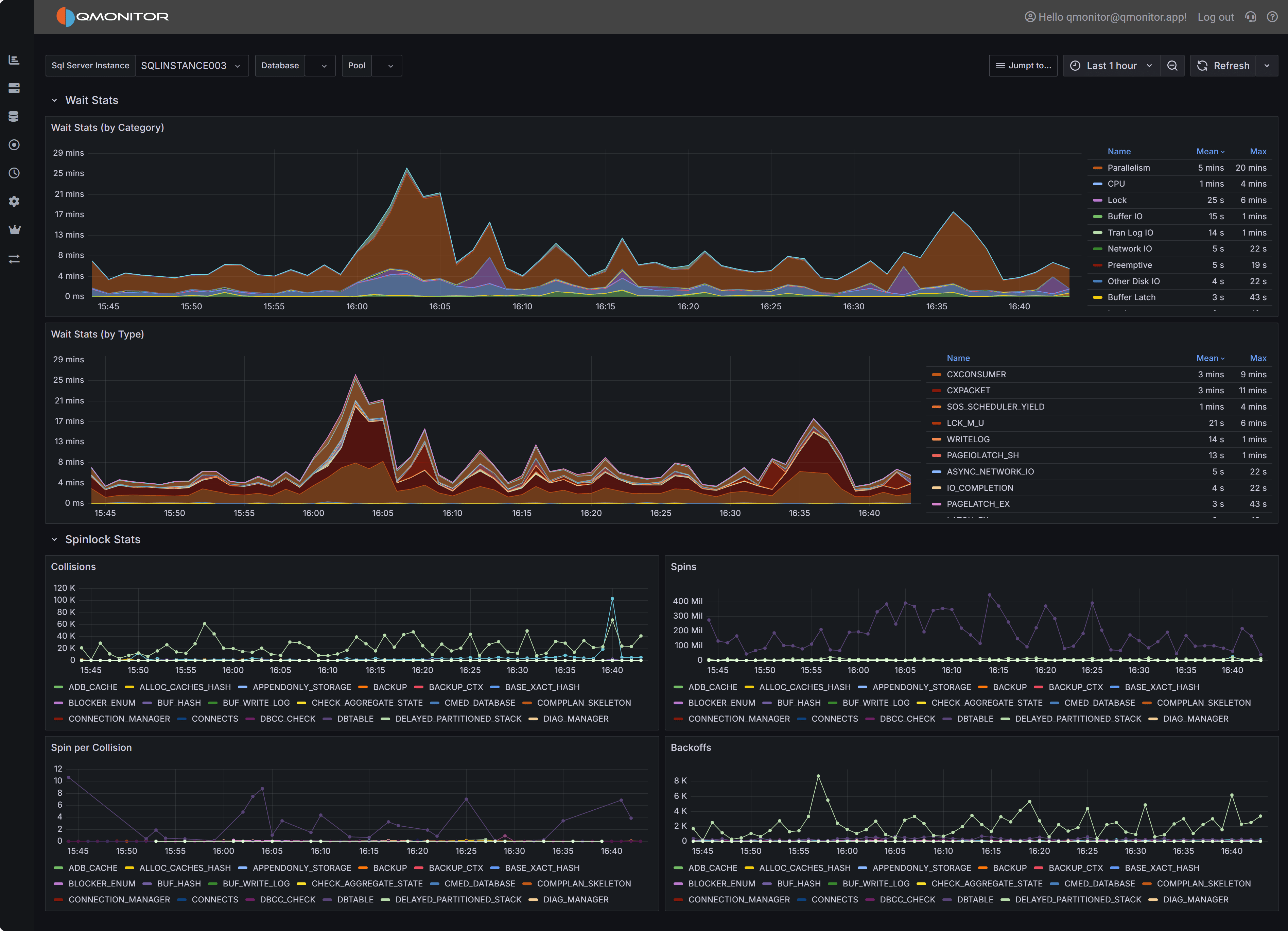

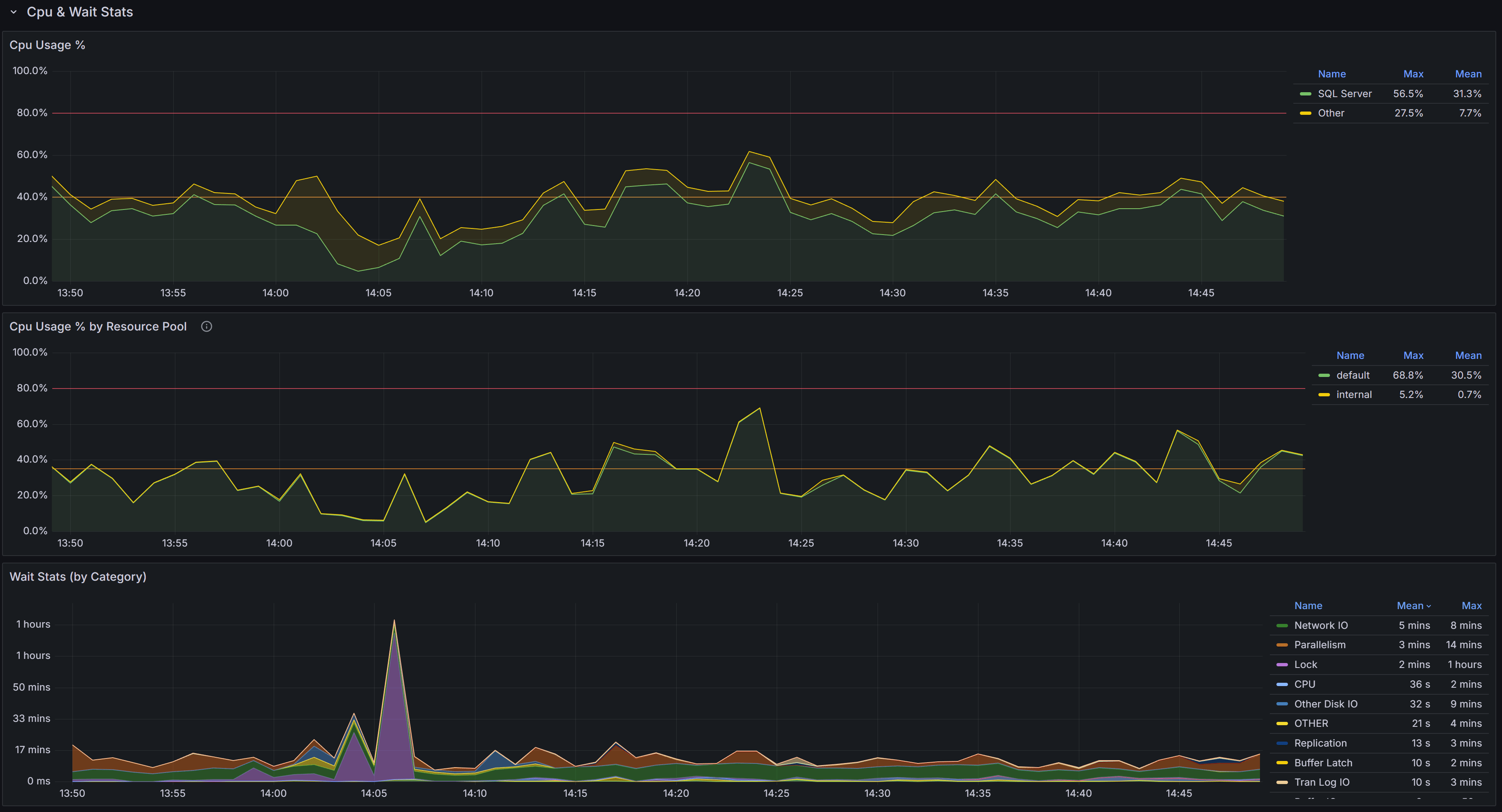

Cpu & Wait Stats

Cpu, Cpu by Resource Pool and Wait Stats By Category

Cpu, Cpu by Resource Pool and Wait Stats By Category

At the top of this section you have the chart that represents the percent CPU usage for the SQL Server process

and for other processes on the same machine.

The second chart represents the percent CPU usage by resource pool. This chart will help you understand which parts of the workload are

consuming the most CPU, according to the resource pool that you defined on the instance. If you are on an Azure SQL Managed Instance or

on an Azure SQL Database, you will see the predefined resource pools available from Azure, while on an Enterprise or Developer edition

you will see the user defined resource pools. For a Standard Edition, this chart will only show the internal pool.

The Wait Stats (by Category) chart represents the average wait time (per second) by wait category. The individual wait classes are not

shown on this chart, which only represents wait categories: in order to inspect the wait classes, go to the

Geek Stats dashboard.

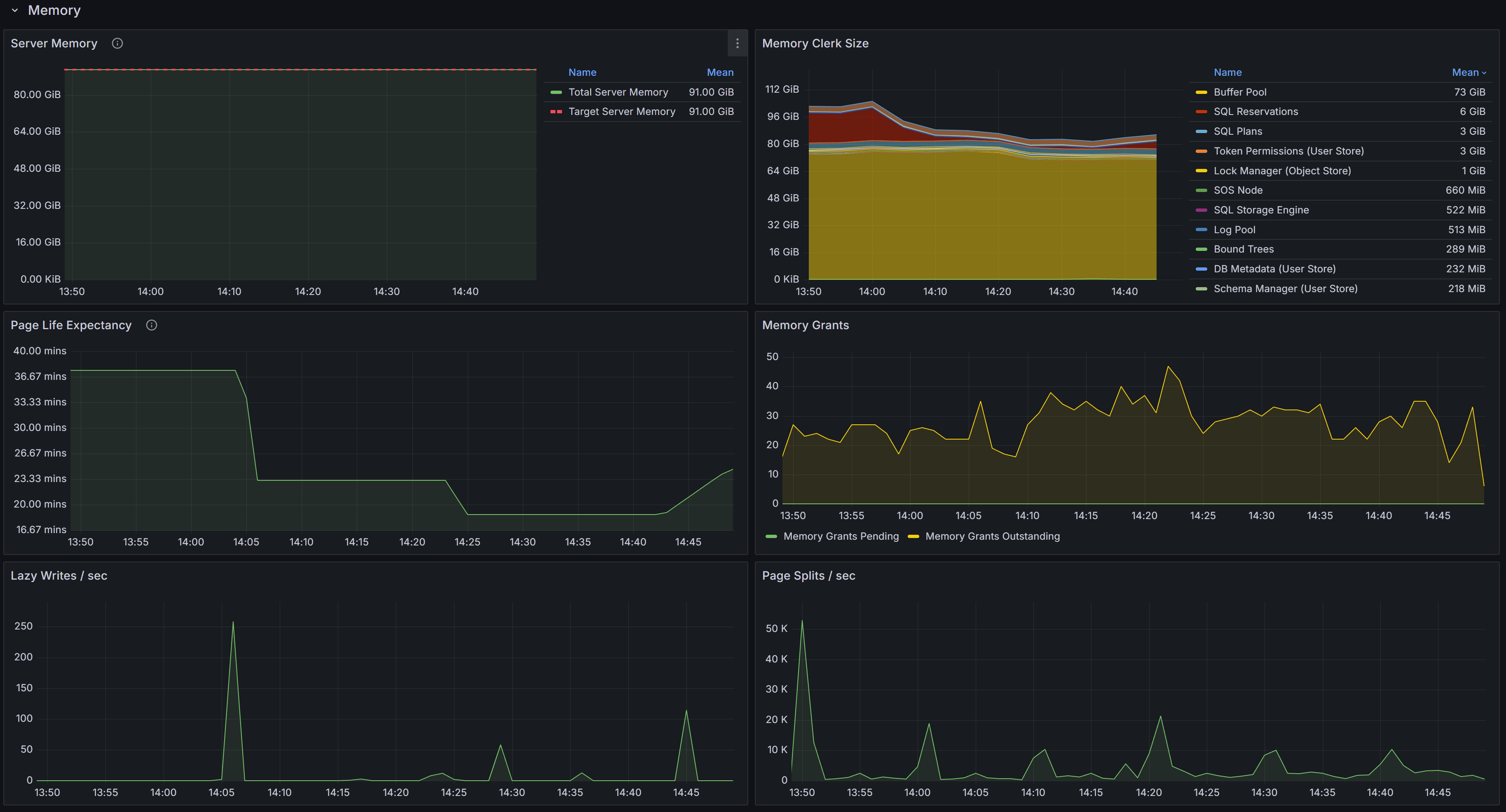

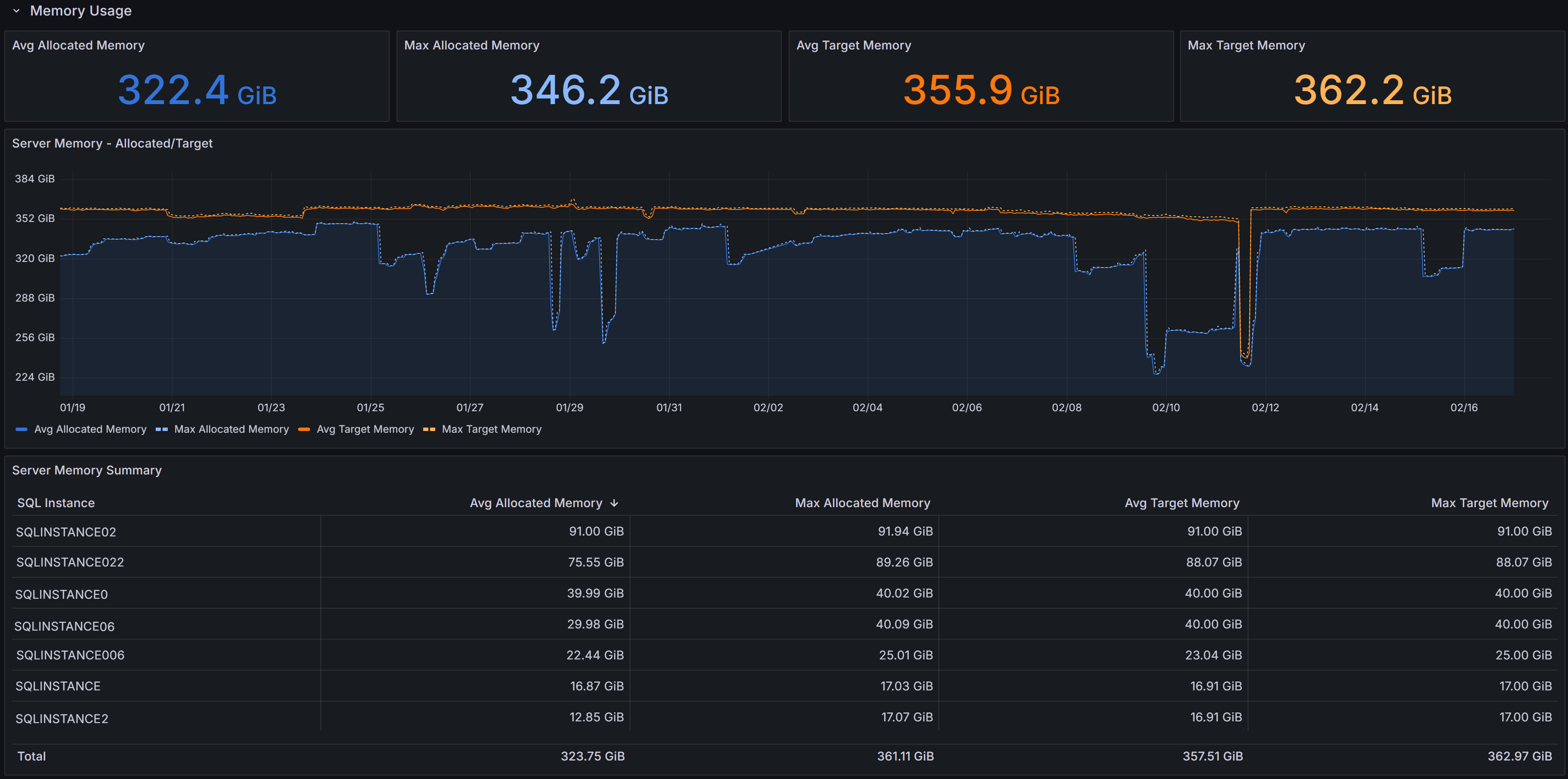

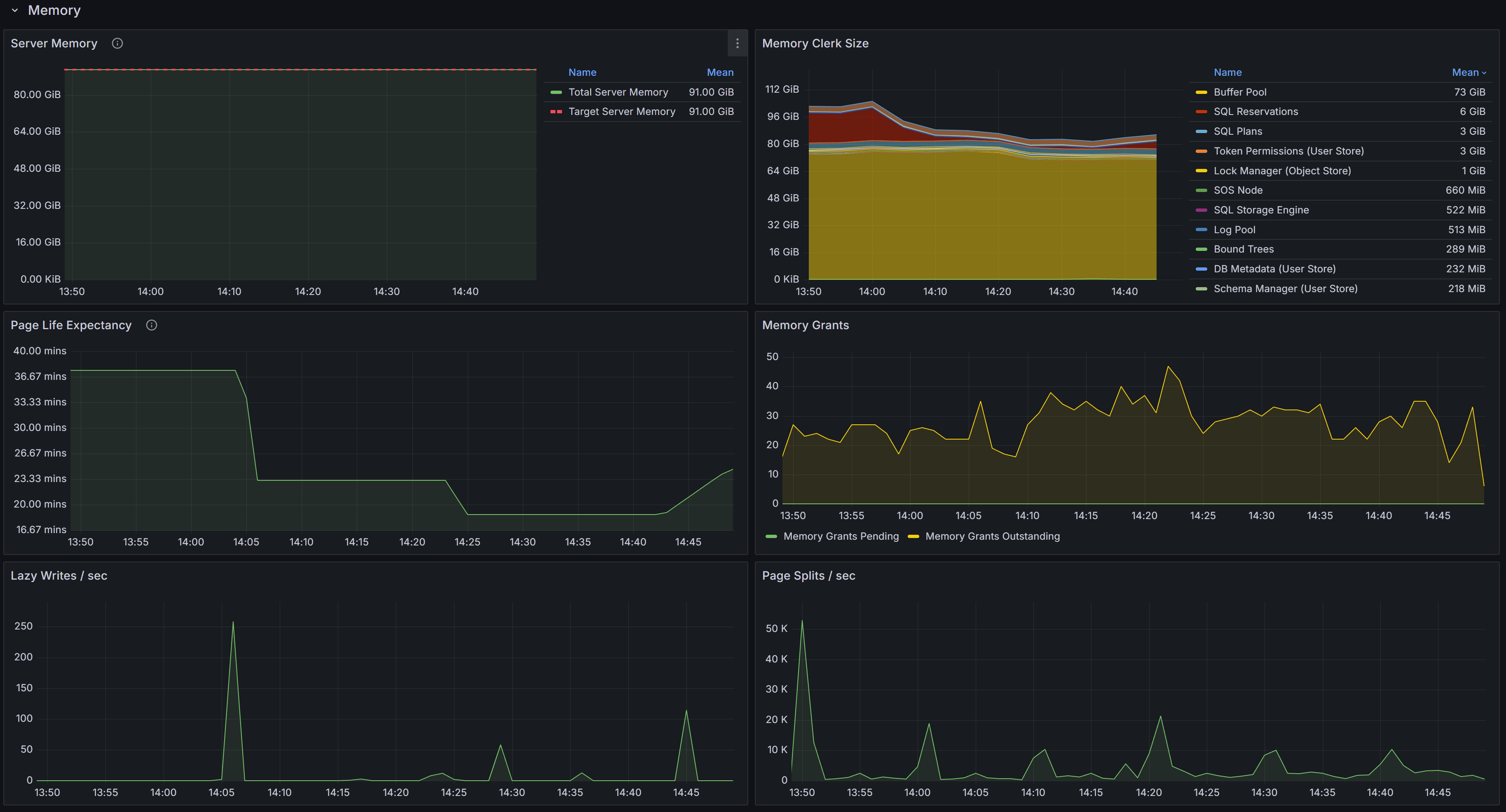

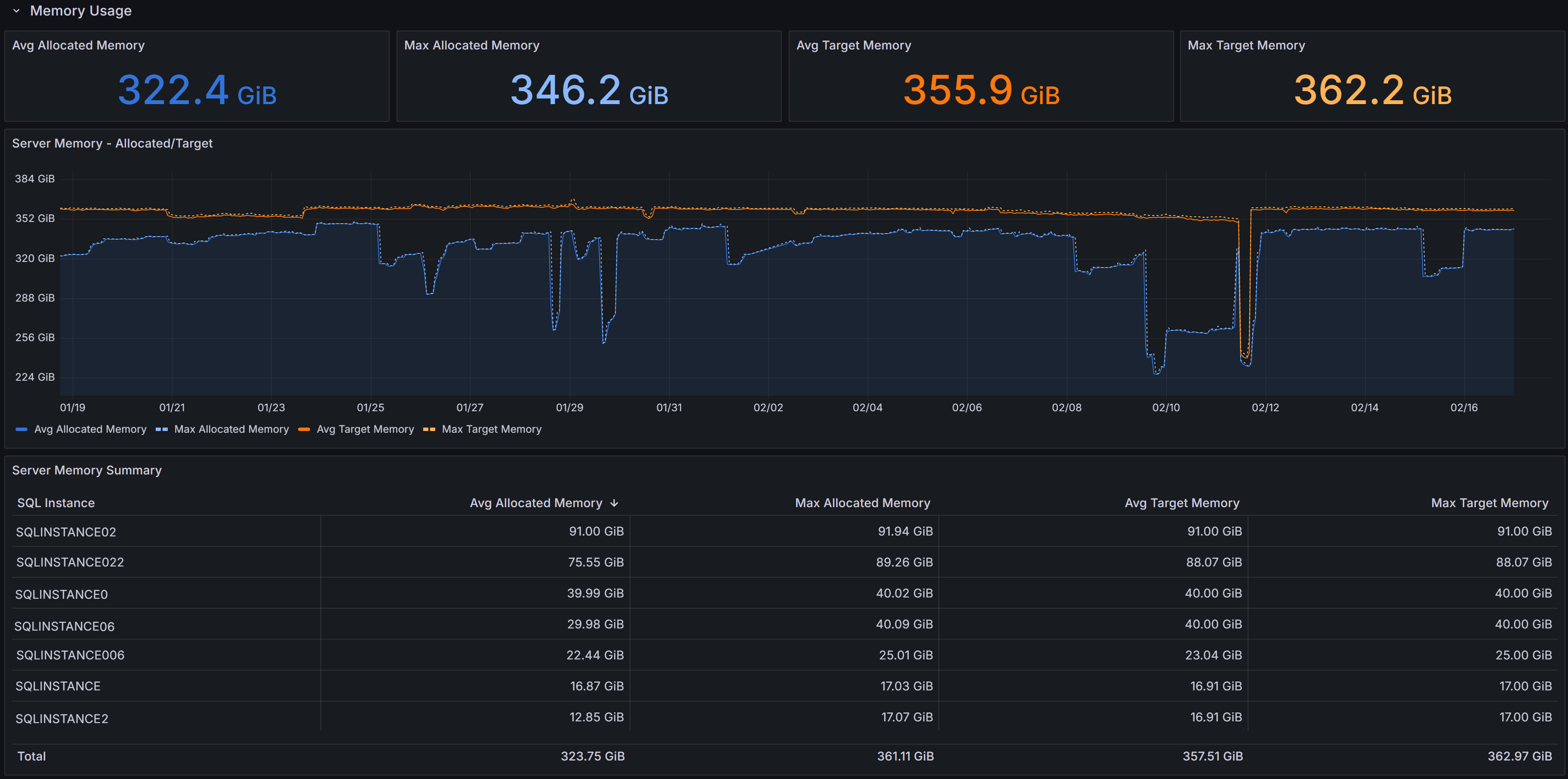

Memory

Memory related metrics describe the state of the instance in respect to memory usage and memory pressure

Memory related metrics describe the state of the instance in respect to memory usage and memory pressure

This section contains charts that display the state of the instance in respect to the use of memory. The chart at the top left is

called “Server Memory”, and shows Target Server Memory vs Total Server Memory. The former represents the ideal amount of memory that

the SQL Server process should be using, the latter is the amount of memory currently allocated to the SQL Server process. When the

instance is under memory pressure, the target server memory is usually higher than total server memory.

The second chart shows the distribution of the memory between the memory clerks. A healthy SQL Server instance allocates most of the

memory to the Buffer Pool memory clerk. Memory pressure could show on this chart as a fall in the amount of memory allocated to the Buffer Pool.

Another aspect to keep under control is the amount of memory used by the SQL Plans memory clerk. If SQL Server allocates too much

memory to SQL Plans, it is possible that the cache is polluted by single-use ad-hoc plans.

The third chart displays Page Life Expectancy. This counter is defined as the amount of time that a database page is expected to

live in the buffer cache before it is evicted to make room for other pages coming from disk. A very old recommendation from Microsoft

was to keep this counter under 5 minutes every 4 Gb of RAM, but this threshold was identified in a time when most servers had mechanical

disks and much less RAM than today.

Instead of focusing on a specific threshold, you should interpret this counter as the level of volatility of your buffer cache: a too low

PLE may be accompanied by elevated disk activity and higher disk read latency.

Next to the PLE you have the Memory Grants chart, which represents the number of memory grant outstanding and pending. At any time, having

Memory Grants Pending greater that zero is a strong indicator of memory pressure.

Lazy Writes / sec is a counter that represents the number of writes performed by the lazy writer process to eliminate dirty pages from the

Buffer Pool outside of a checkpoint, in order to make room for other pages from disk. A very high number for this counter may indicate

memory pressure.

Next you have the chart for Page Splits / sec, which represents how many page splits are happening on the instance every second. A page

split happens every time there is not enough space in a page to accommodate new data and the original page has to be split in two pages.

Page splits are not desirable and have a negative impact on performance, especially because split pages are not completely full, so more

pages are required to store the same amount of information in the Buffer Cache. This reduces the amount of data that can be cached, leading

to more physical I/O operations.

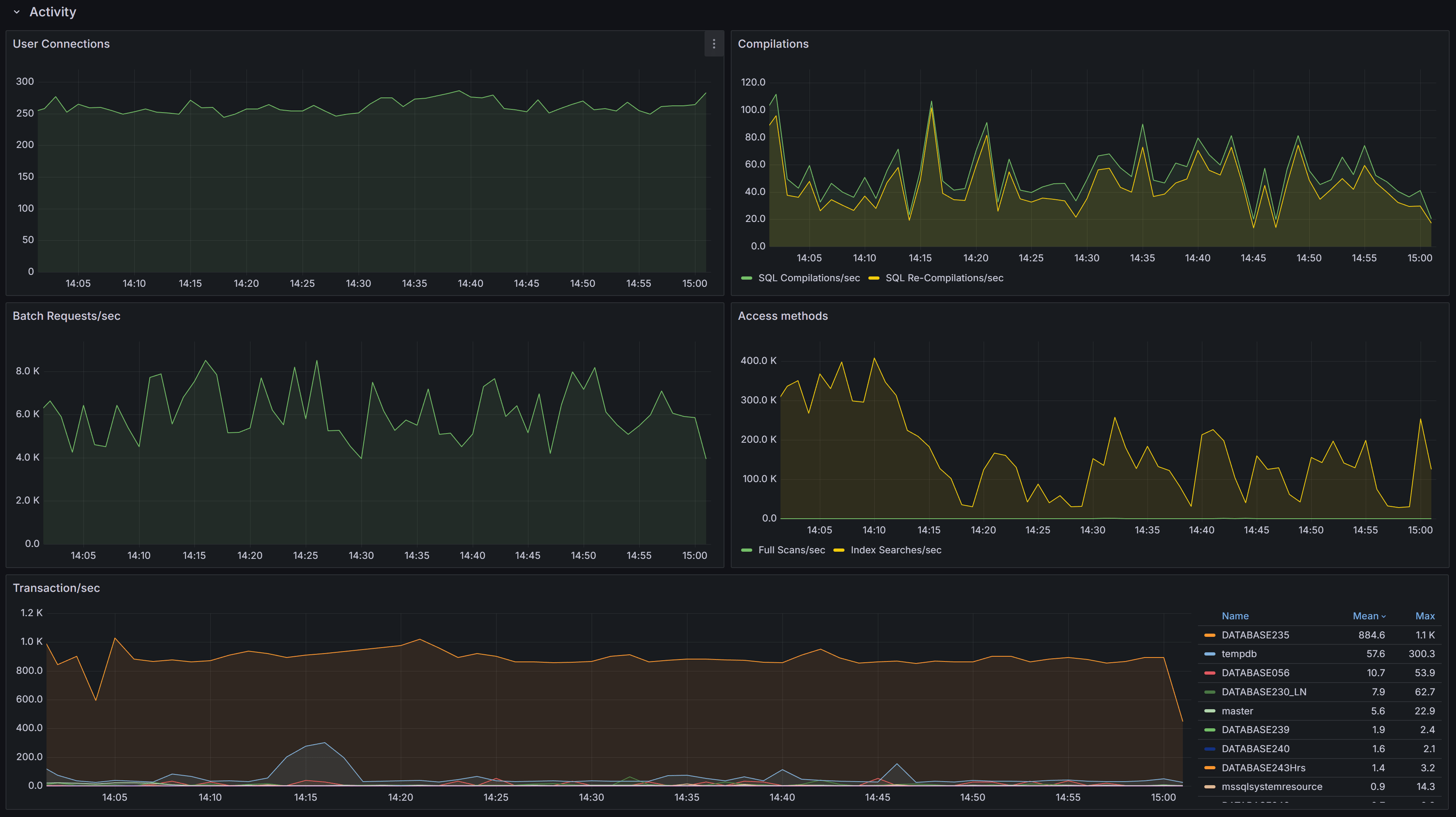

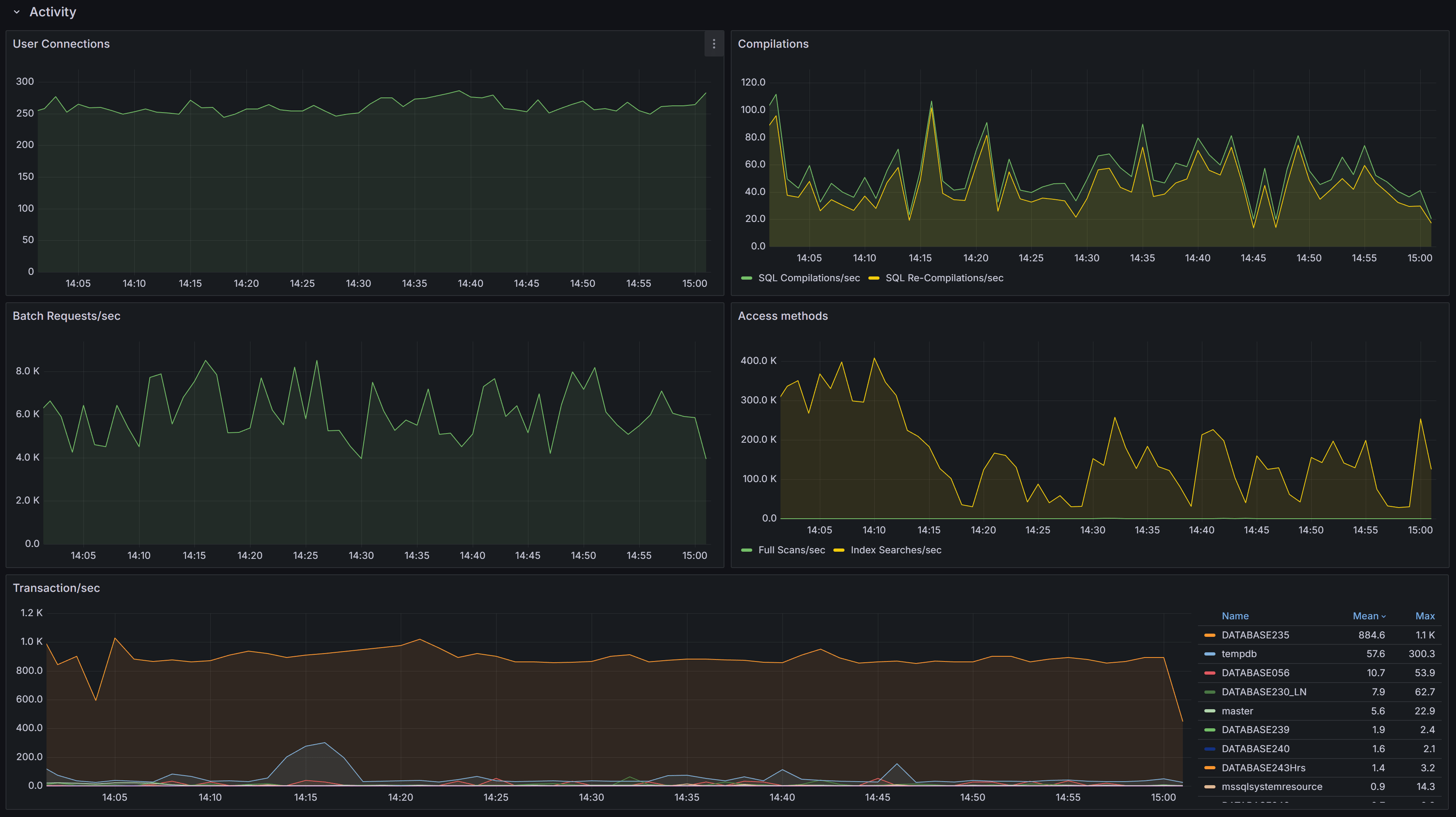

Activity

General activity metrics, including user connections, compilations, transactions, and access methods

General activity metrics, including user connections, compilations, transactions, and access methods

This section contains charts that display multiple SQL Server performance counters.

First you have the User Connections chart, which displays the number of active connections from user processes. This number should be

consistent with then number of people or processes hitting the database and should not increase indefinitely (connection leak).

Next, we have the number of Compilations/sec vs Recompilations/sec. A healthy SQL Server database caches most of its execution plans for

reuse, so that it does not need to compile a plan again: compiling plans is a CPU-intensive operation and SQL Server tries to avoid it as

much as it can. A rule of thumb is to have a number of compilations per second that is 10% of the number of Batch Requests per second.

A workload that contains a high number of ad-hoc queries will generate a higher rate of compilations per second.

Recompilations are very similar to compilations: SQL Server identifies in the cache a plan with one or more base objects that have changed

and sends the plan to the optimizer to recompile it.

Compiles and recompiles are expensive operations and you should look for excessively high values for these counters if you suffer from CPU

pressure on the instance.

The Access Methods chart displays Full Scans/sec vs Index Searches/sec. A typical OLTP system should get a low number of scans and a high

number of Index Searches. On the other hand, a typical OLAP system will produce more scans.

The Transactions/sec panel displays the number of transactions/sec on the instance. This allows you to identify which database is under the

higher load, compared to the ones that are not heavily utilized.

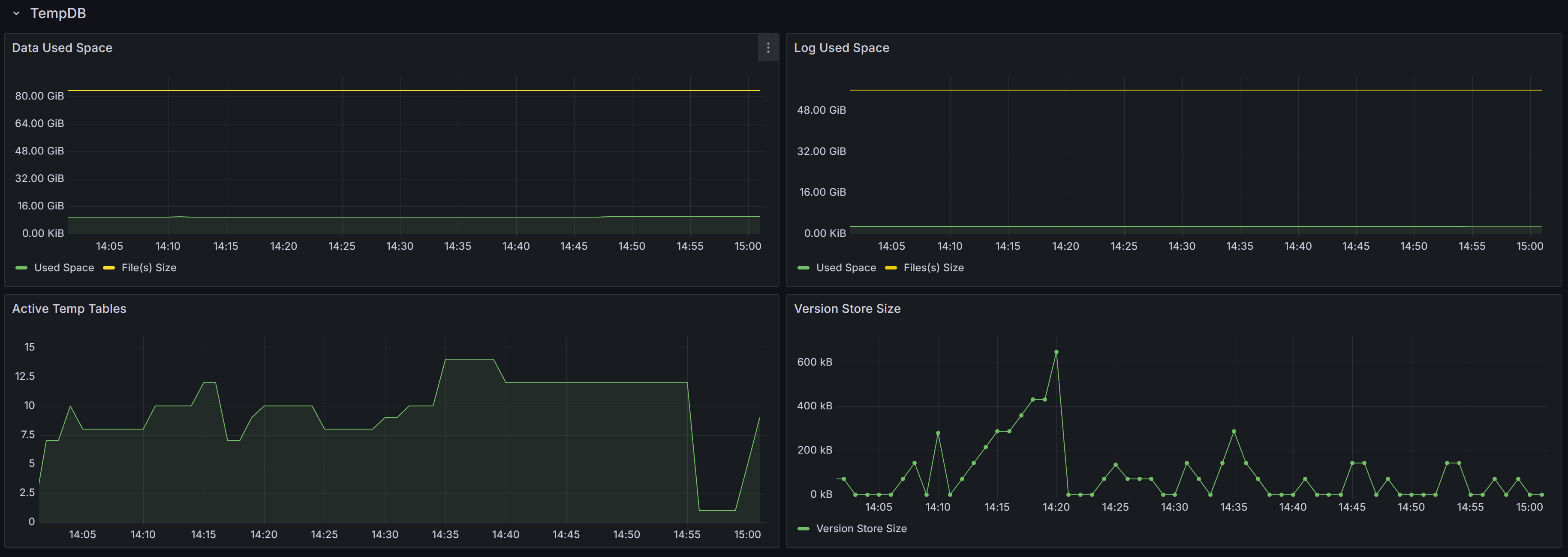

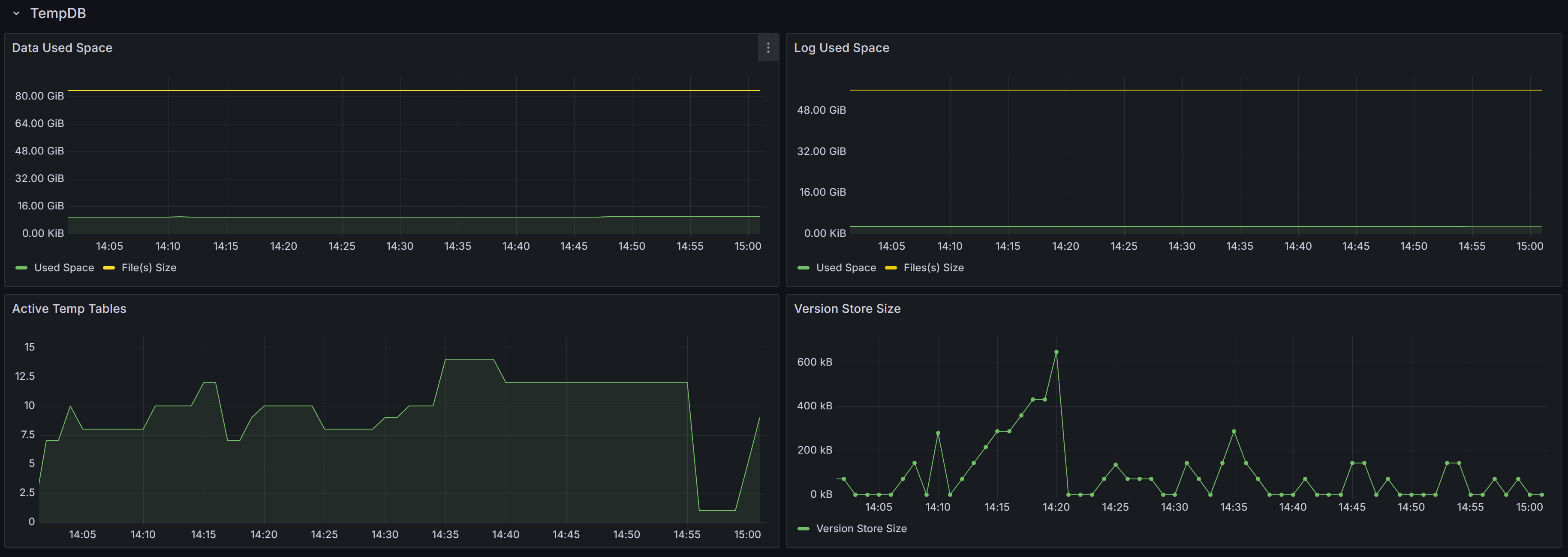

TempDB

Tempdb related metrics, including data and log space usage, active temp tables, and version store size

Tempdb related metrics, including data and log space usage, active temp tables, and version store size

This section contains panels that describe the state of the Tempdb database. The tempdb database is a shared system database that is crucial

for SQL Server performance.

The Data Used Space displays the allocated File(s) size compared to the actual Used Space in the database. Observing these metrics over time

allows you to plan the size of your tempdb database, avoiding autogrow events. It also helps you size the database correctly, to avoid wasting

too much disk space on a data file that is never entirely used by actual database pages.

The Log Used Space panel does the same, with log files.

Active Temp Tables shows the number of temporary tables in tempdb. This is not only the number of temporary tables created explicitly from the

applications (table names with the # or ## prefix), but also worktables, spills, spools and other temporary objects used by SQL Server during

the execution of queries.

The Version Store Size panel shows the size of the Version Store inside tempdb. The Version Store holds data for implementing optimistic locking

by taking transaction-consistent snapshots of the data on the tables instead of imposing locks. If you see the size of Version Store going up

continuously, you may have one or more open transactions that are not being committed or rolled back: in that case, look for long standing

sessions with transaction count greater than one.

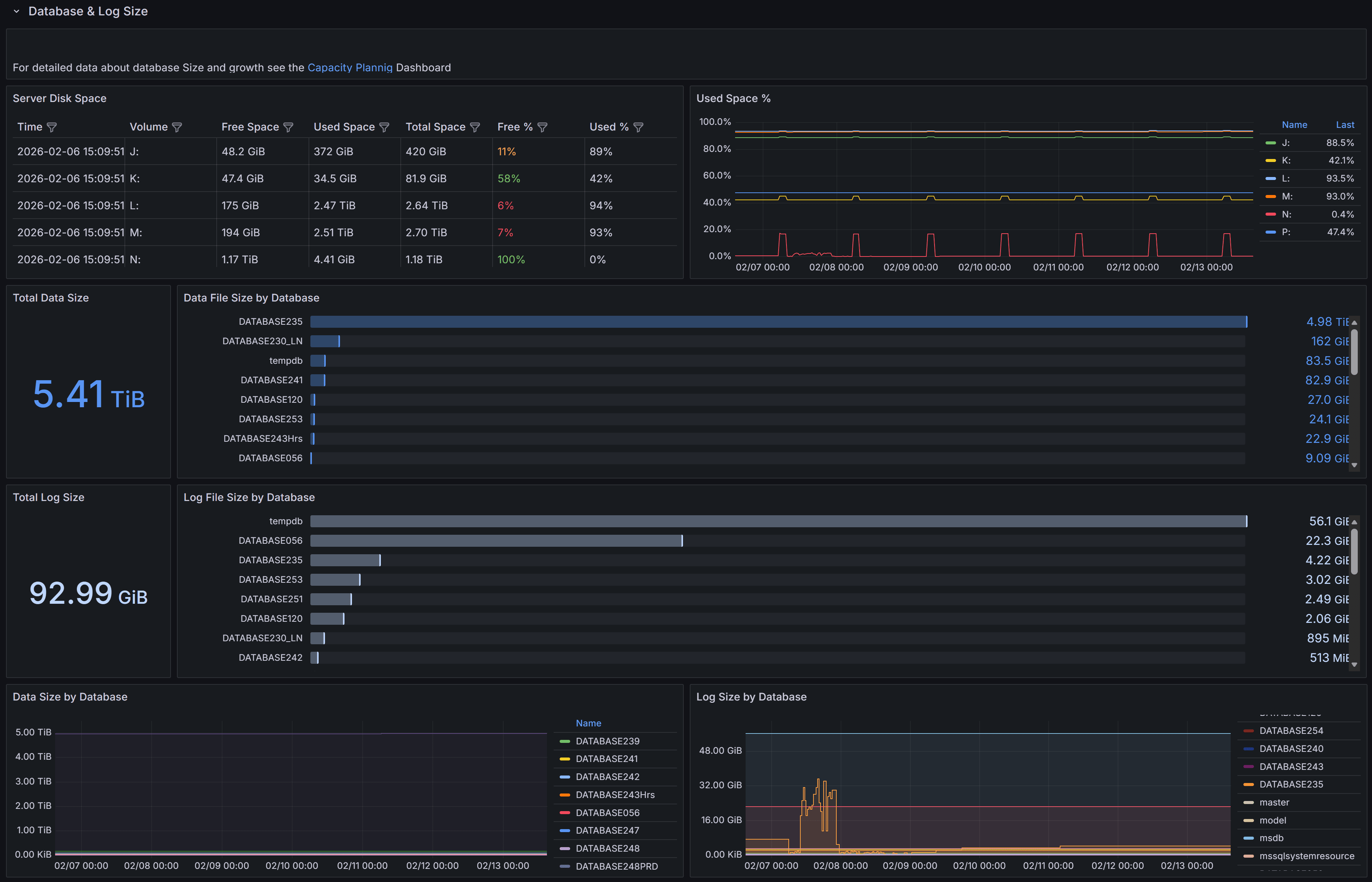

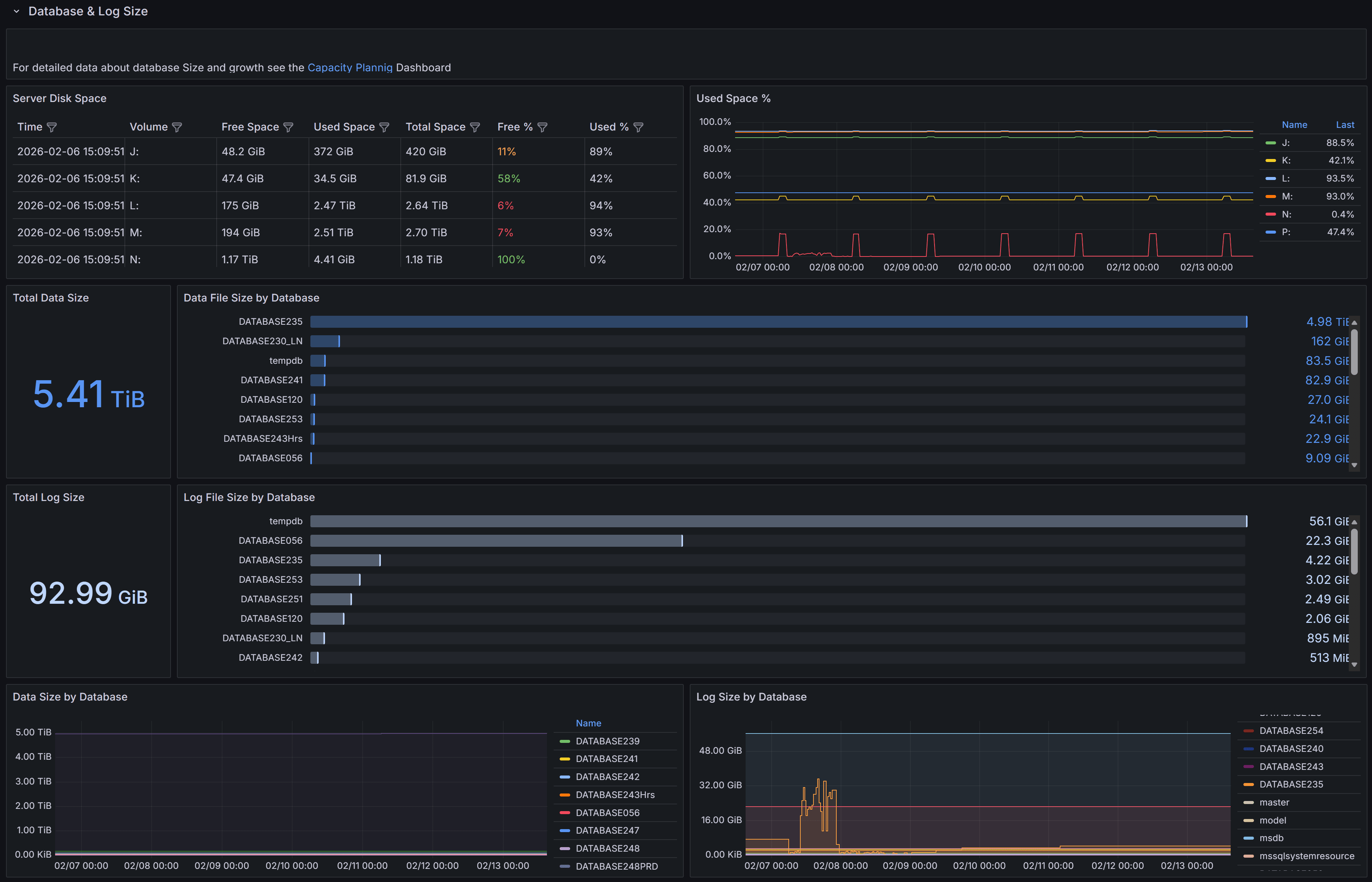

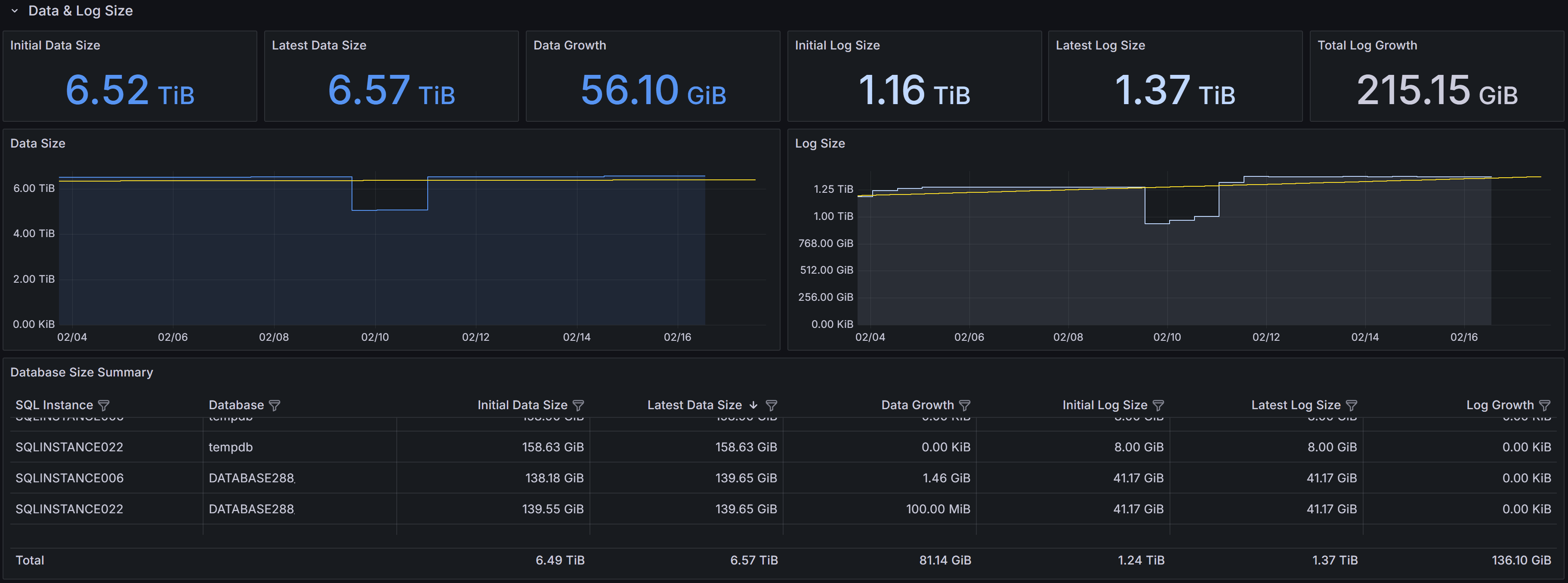

Database & Log Size

Size and growth of databases and transaction logs, with trends over time

Size and growth of databases and transaction logs, with trends over time

This section provides detailed information about the size and growth of databases and their transaction logs on the instance.

The Total Data Size panel displays the combined size of all data files across all databases on the instance.

This metric helps you understand the overall storage footprint of your databases and plan for capacity requirements.

The Data File Size by Database chart shows a horizontal bar chart with the size of data files for each individual database.

This visualization makes it easy to identify which databases consume the most storage space and helps prioritize

storage optimization efforts.

The Total Log Size panel shows the cumulative size of all transaction log files on the instance.

Monitoring log file size is important because transaction logs can grow rapidly under certain workloads,

especially when full recovery mode is enabled and log backups are not performed frequently enough.

The Log File Size by Database chart presents the log file sizes for each database in a horizontal bar format.

This allows you to quickly spot databases with unusually large transaction logs that may need attention,

such as more frequent log backups or investigation of long-running transactions.

The Data Size by Database panel at the bottom left shows the size trend over time for each database.

This time-series visualization helps you understand growth patterns and predict when additional storage capacity will be needed.

The Log Size by Database panel displays the transaction log size trend over time for each database.

Sudden spikes in this chart may indicate unusual activity, such as large bulk operations,

index maintenance, or uncommitted transactions that are preventing log truncation.

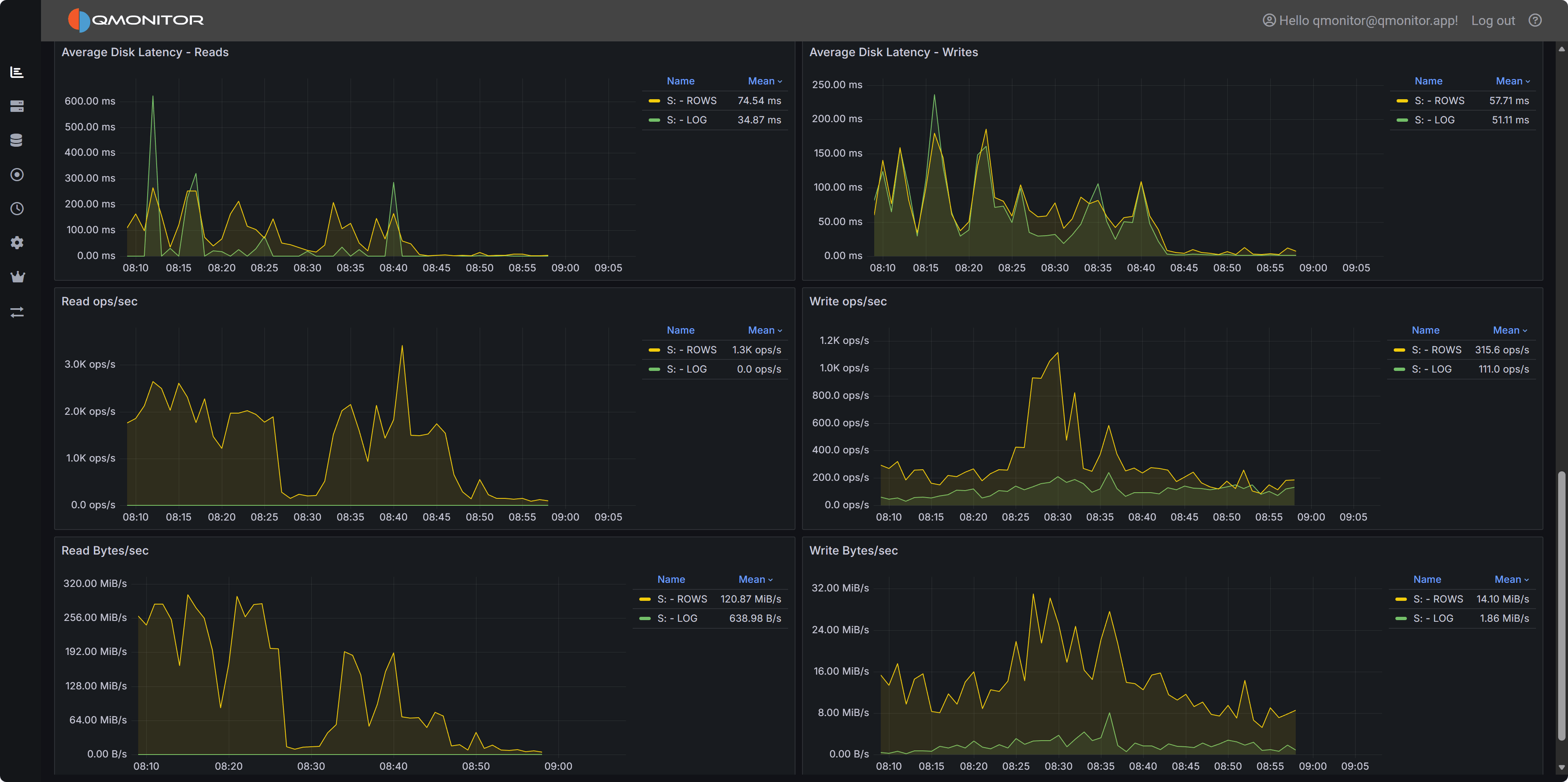

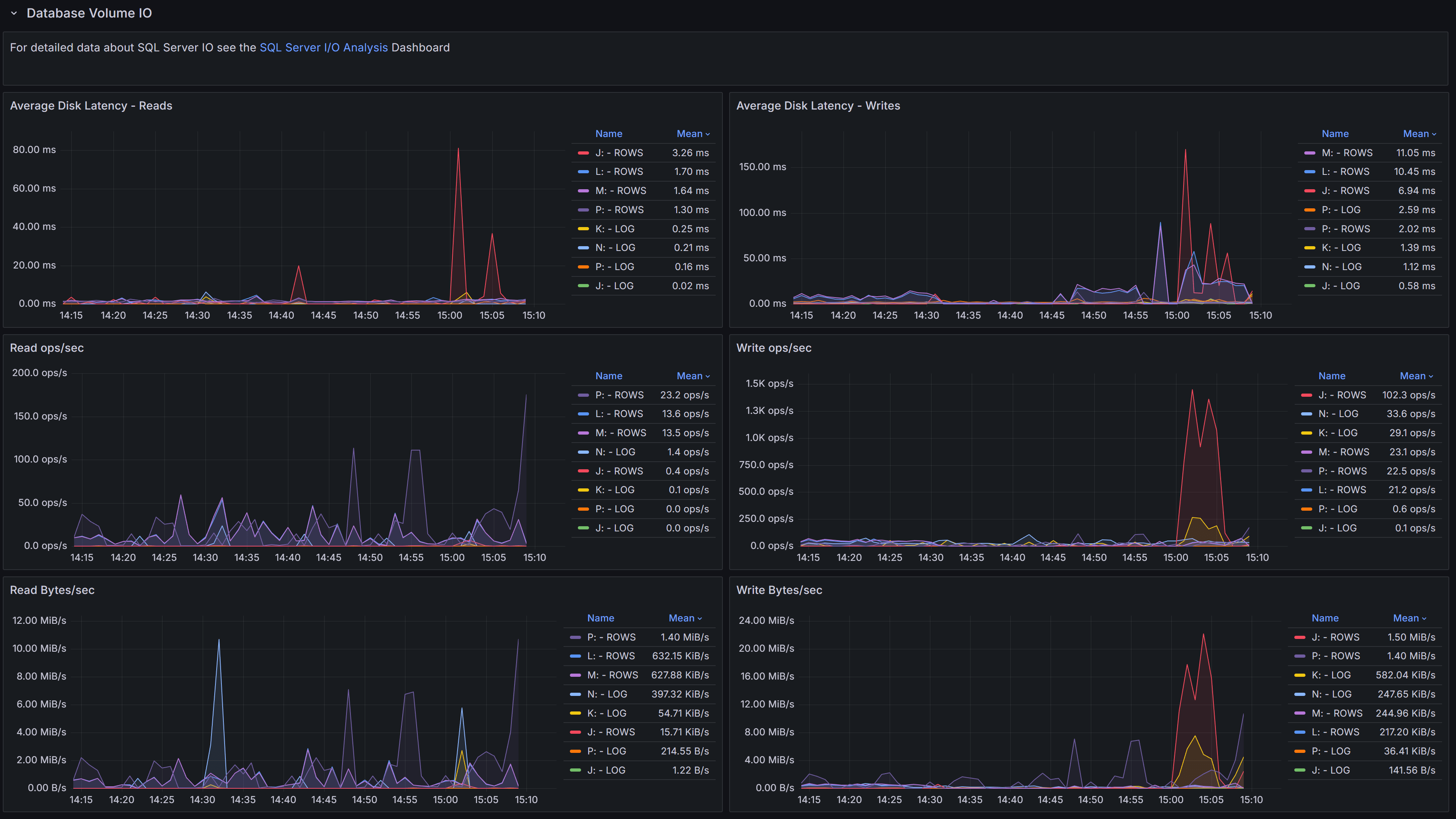

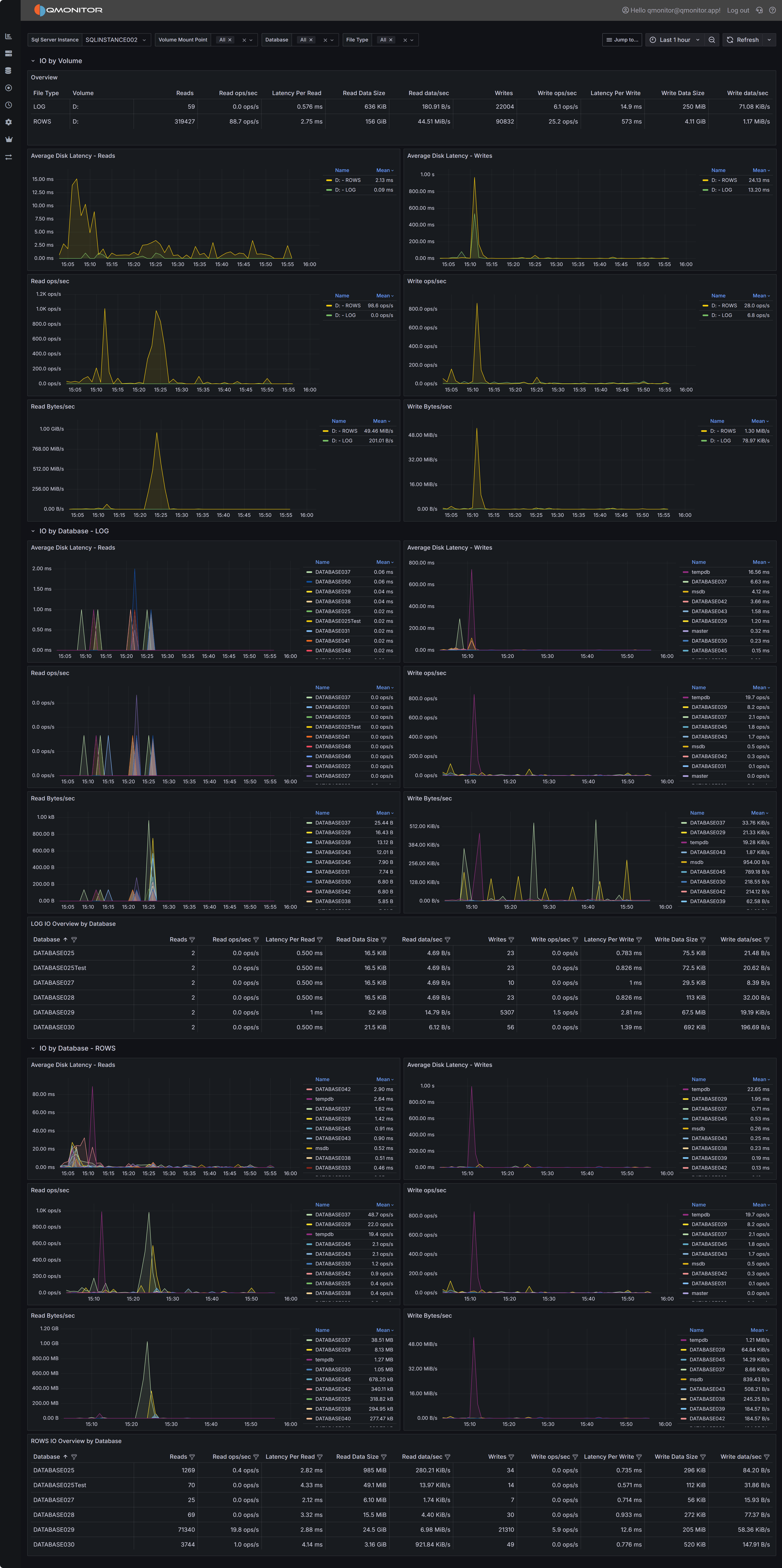

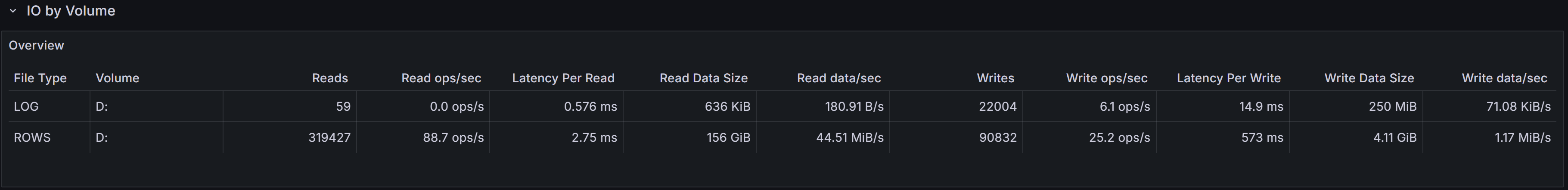

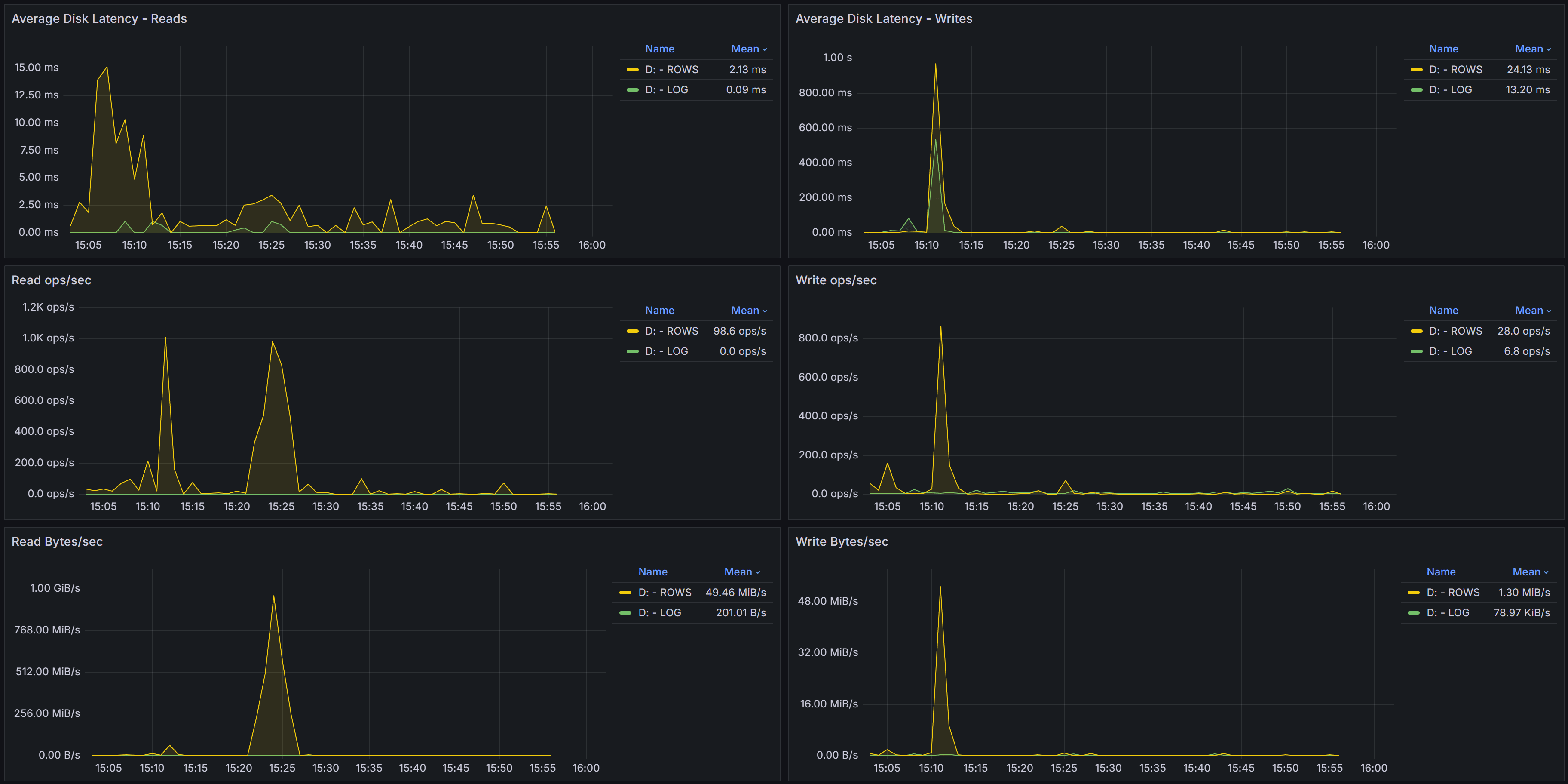

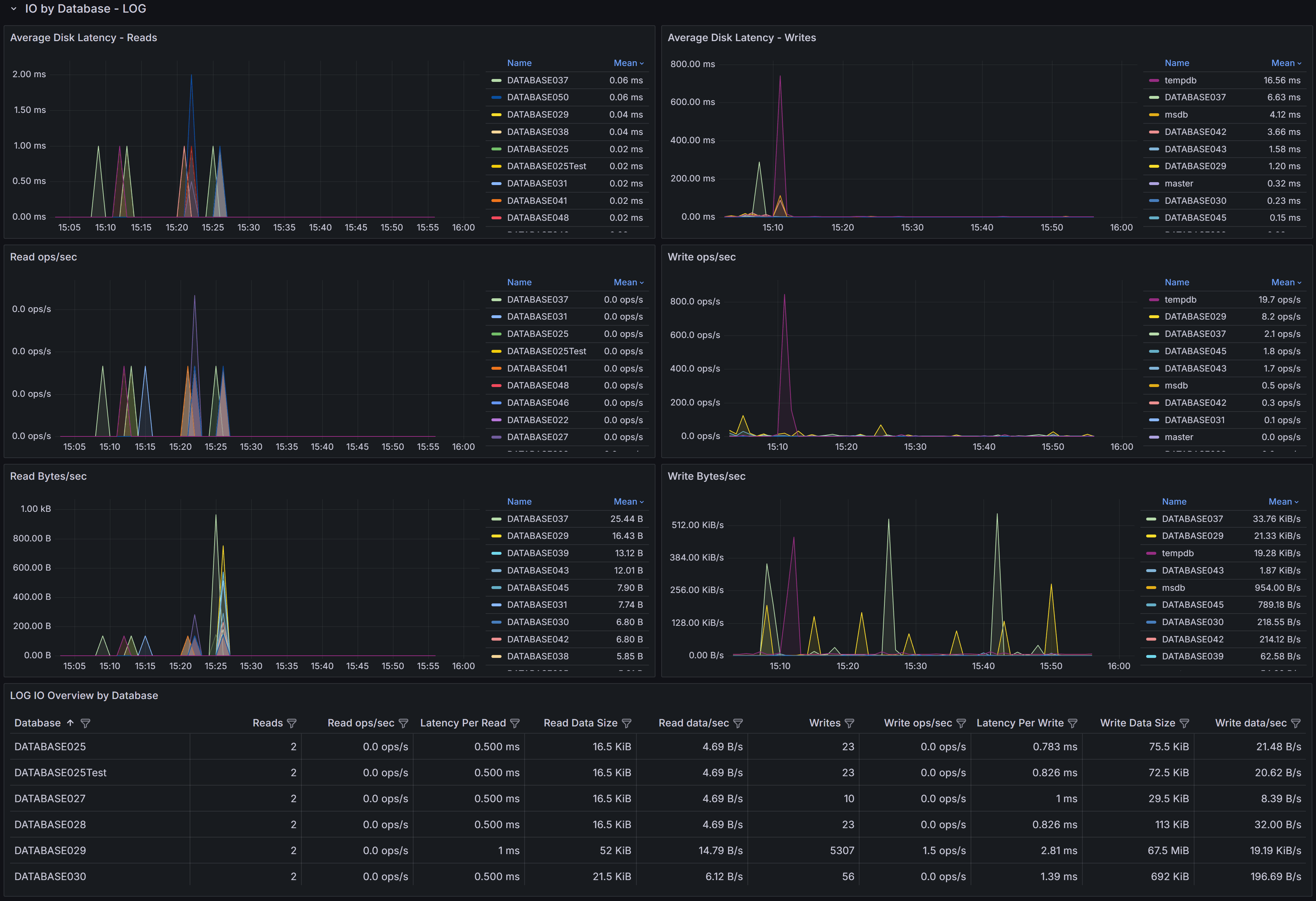

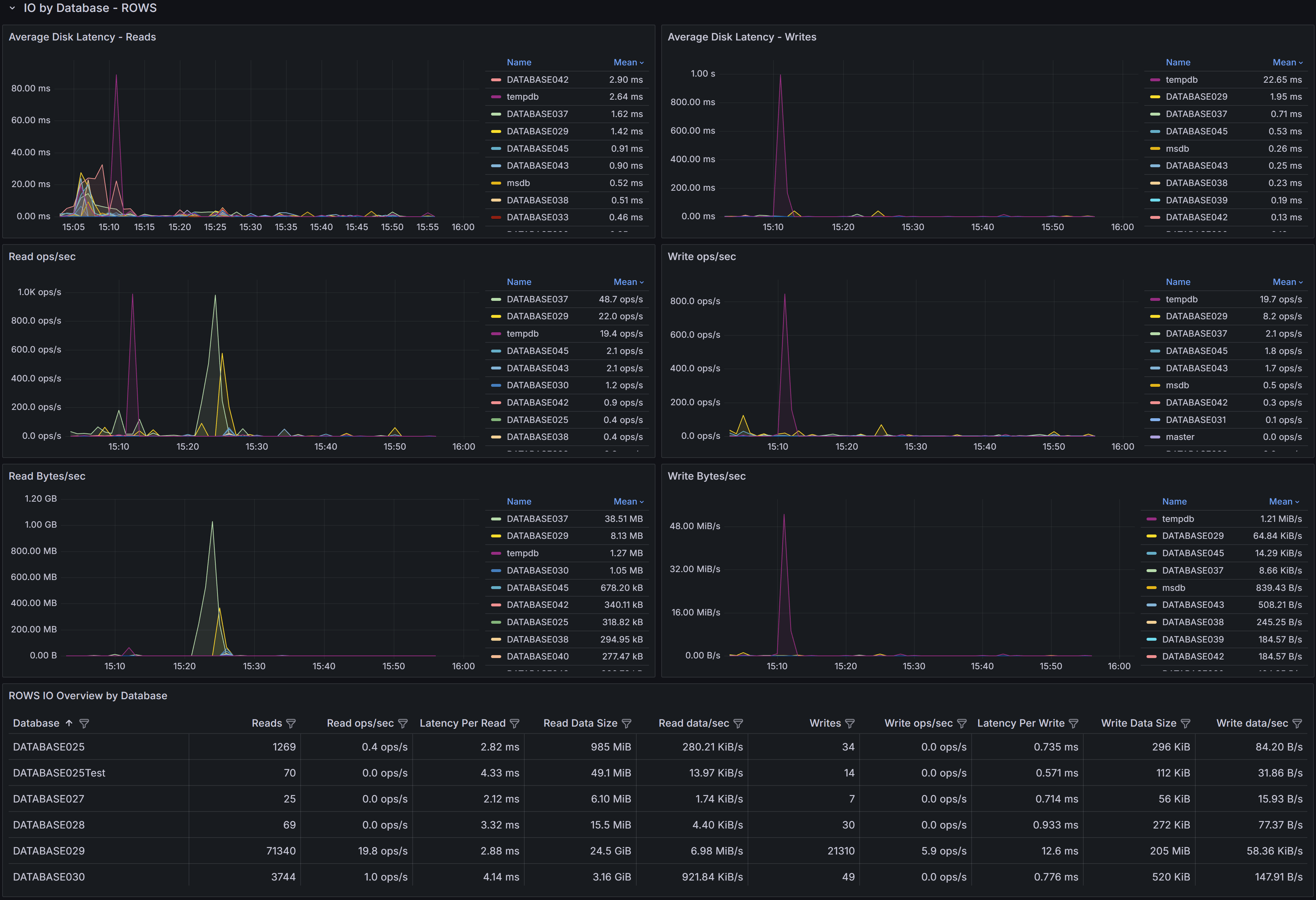

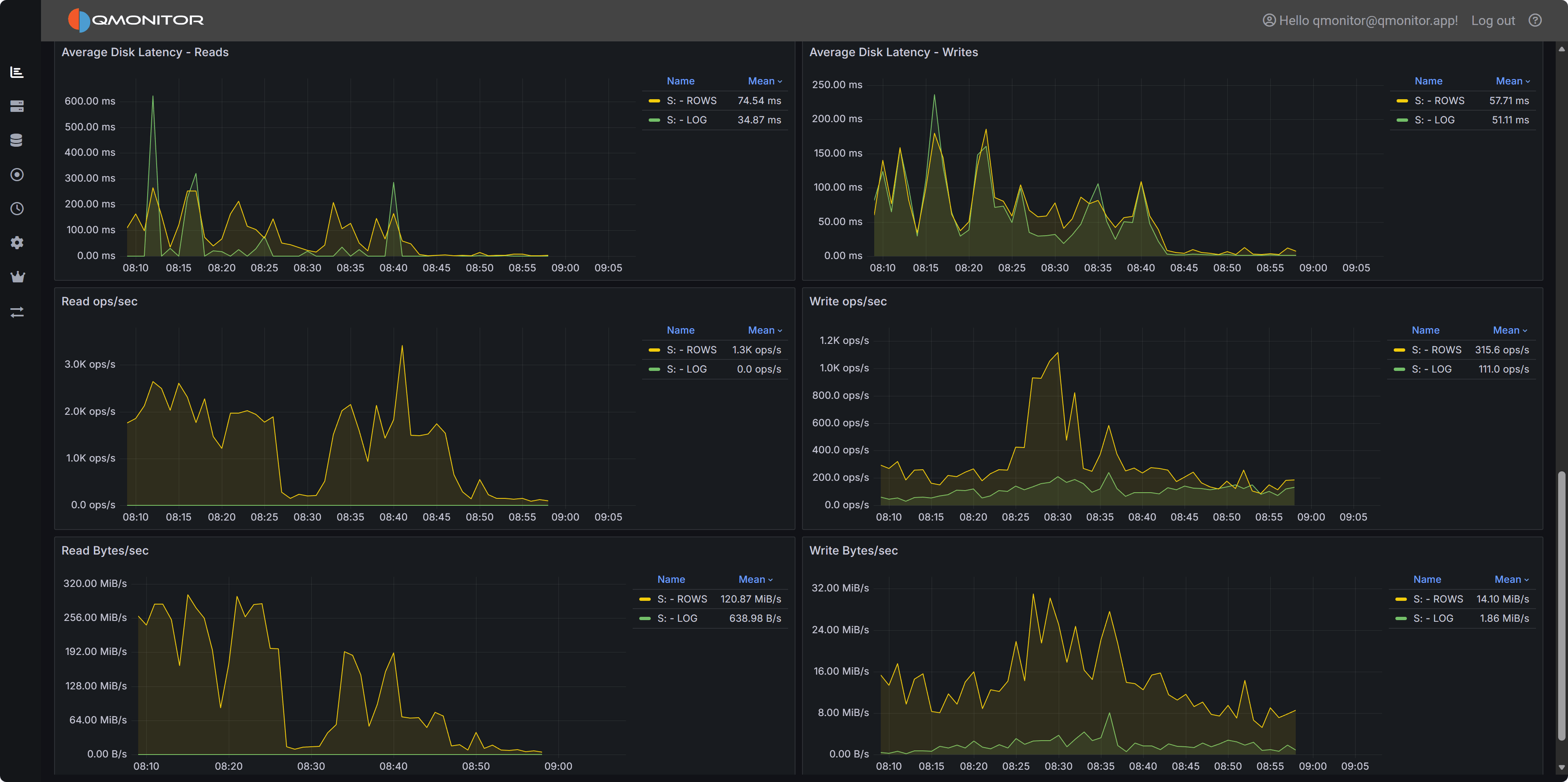

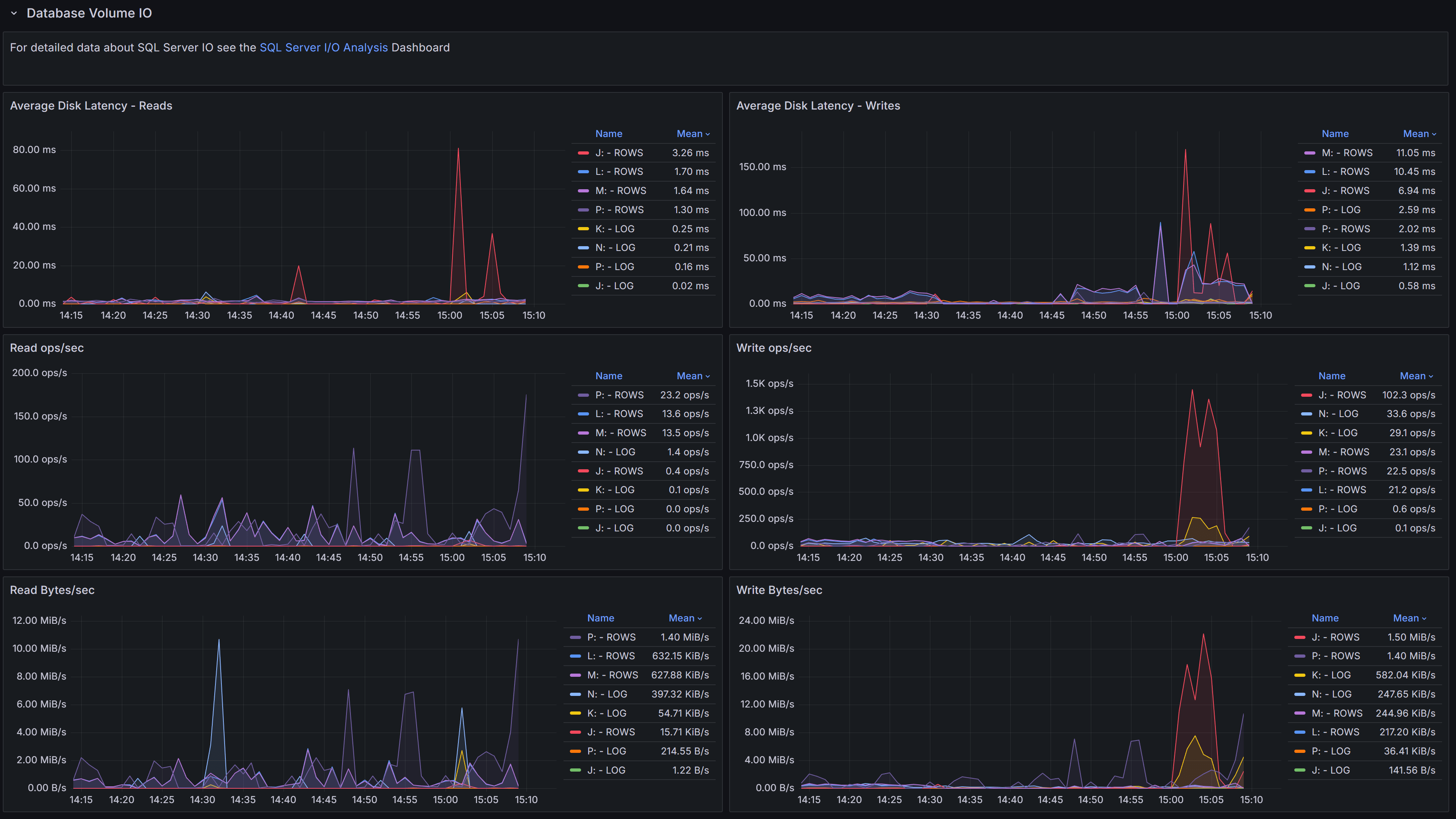

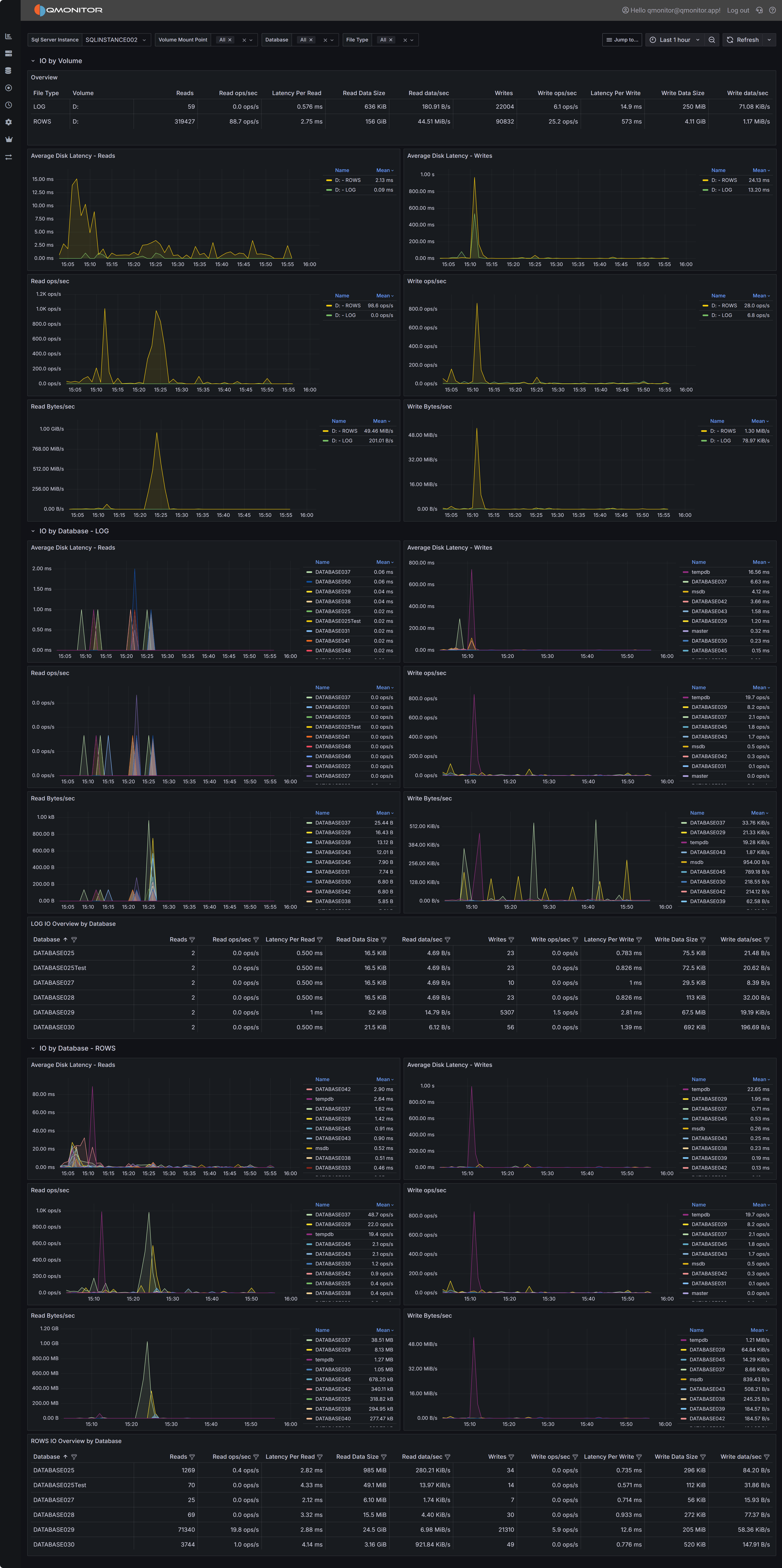

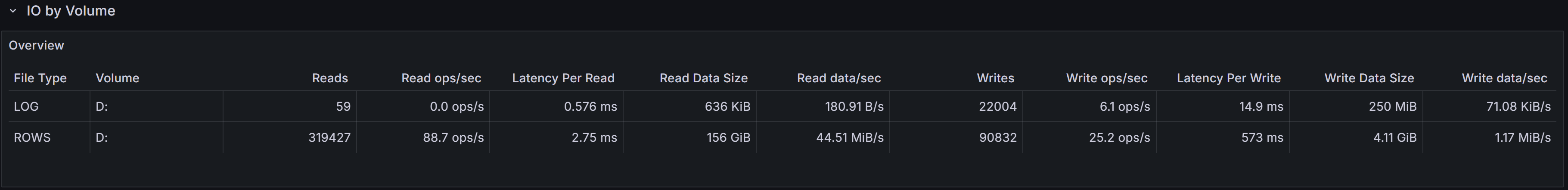

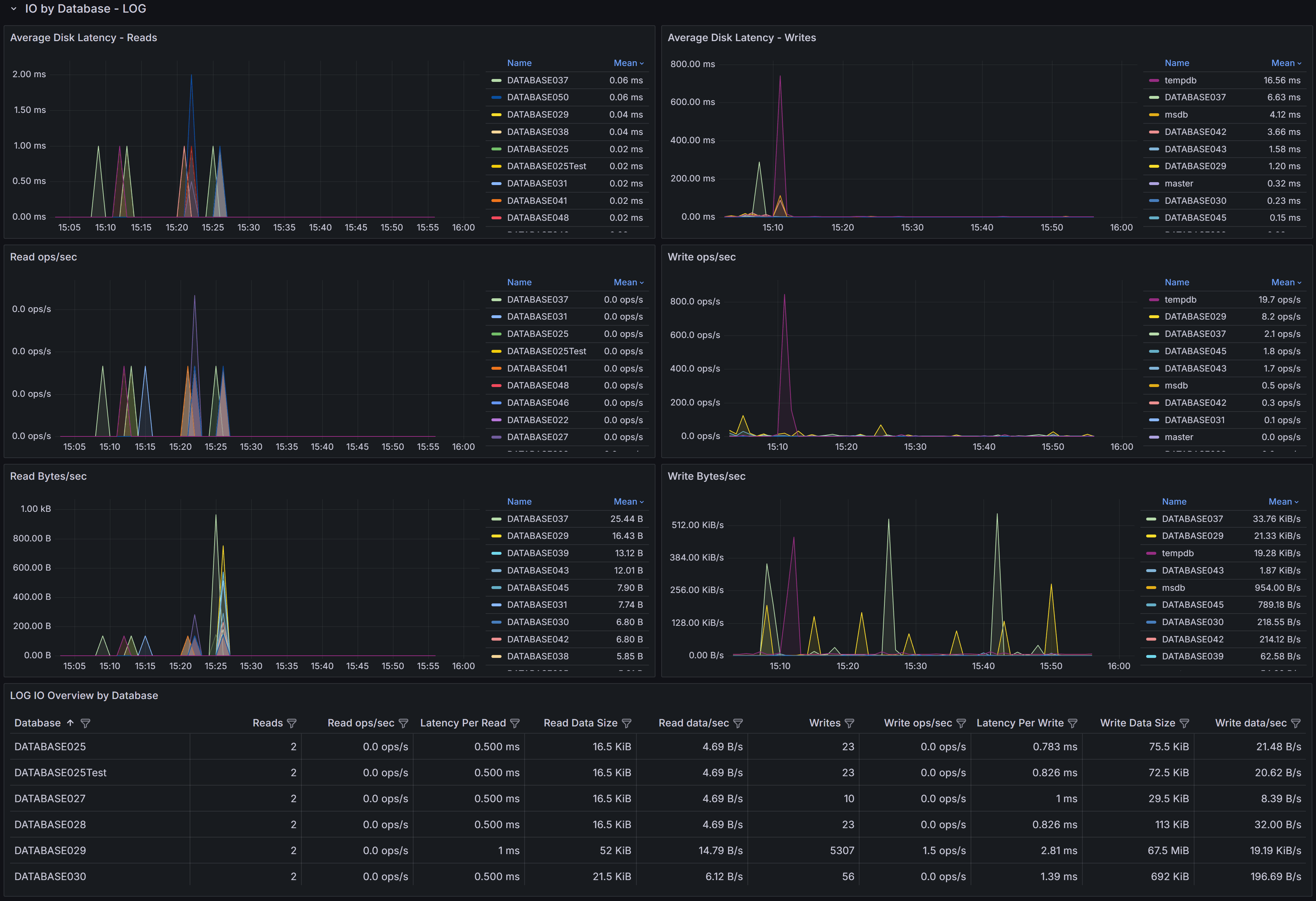

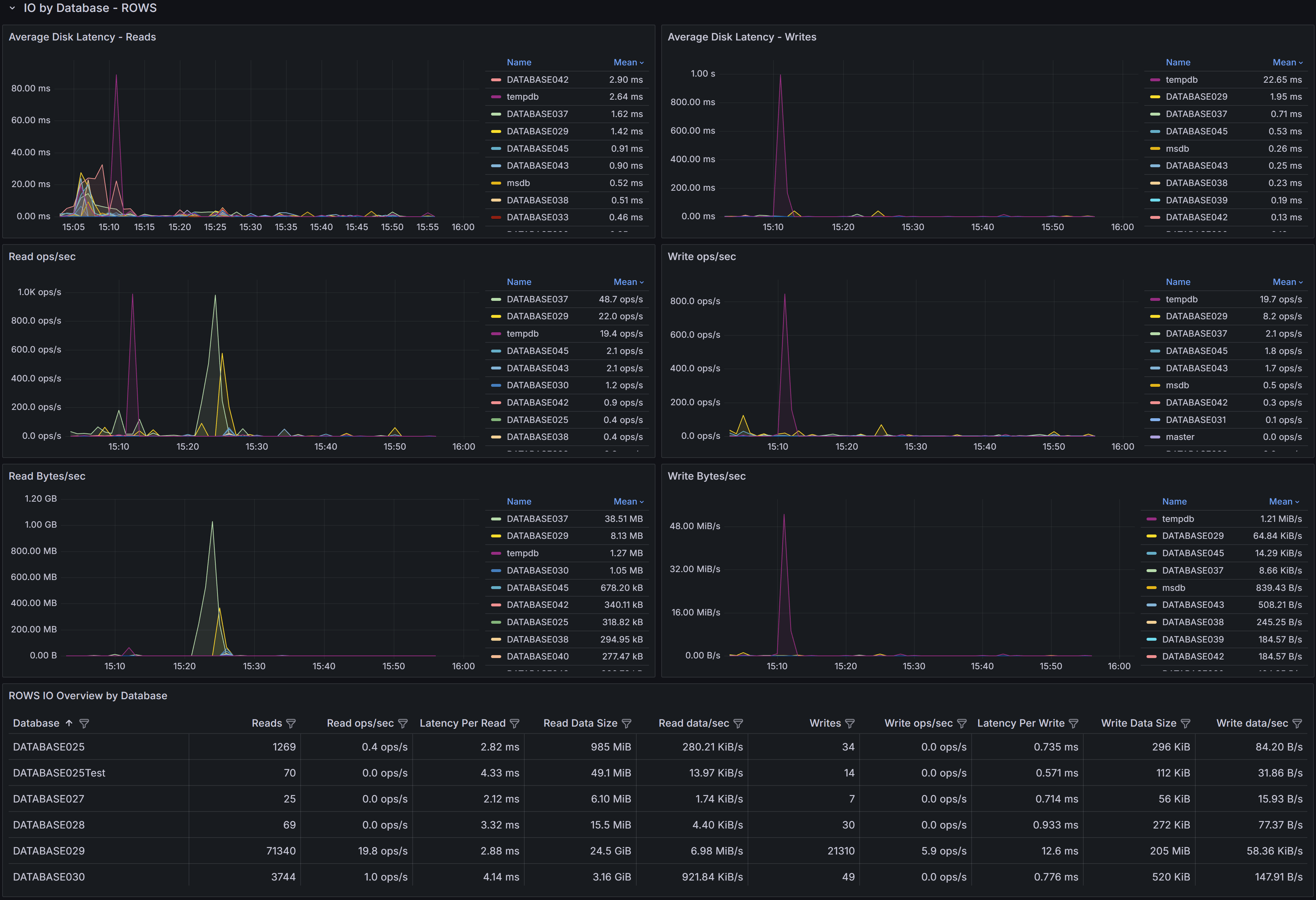

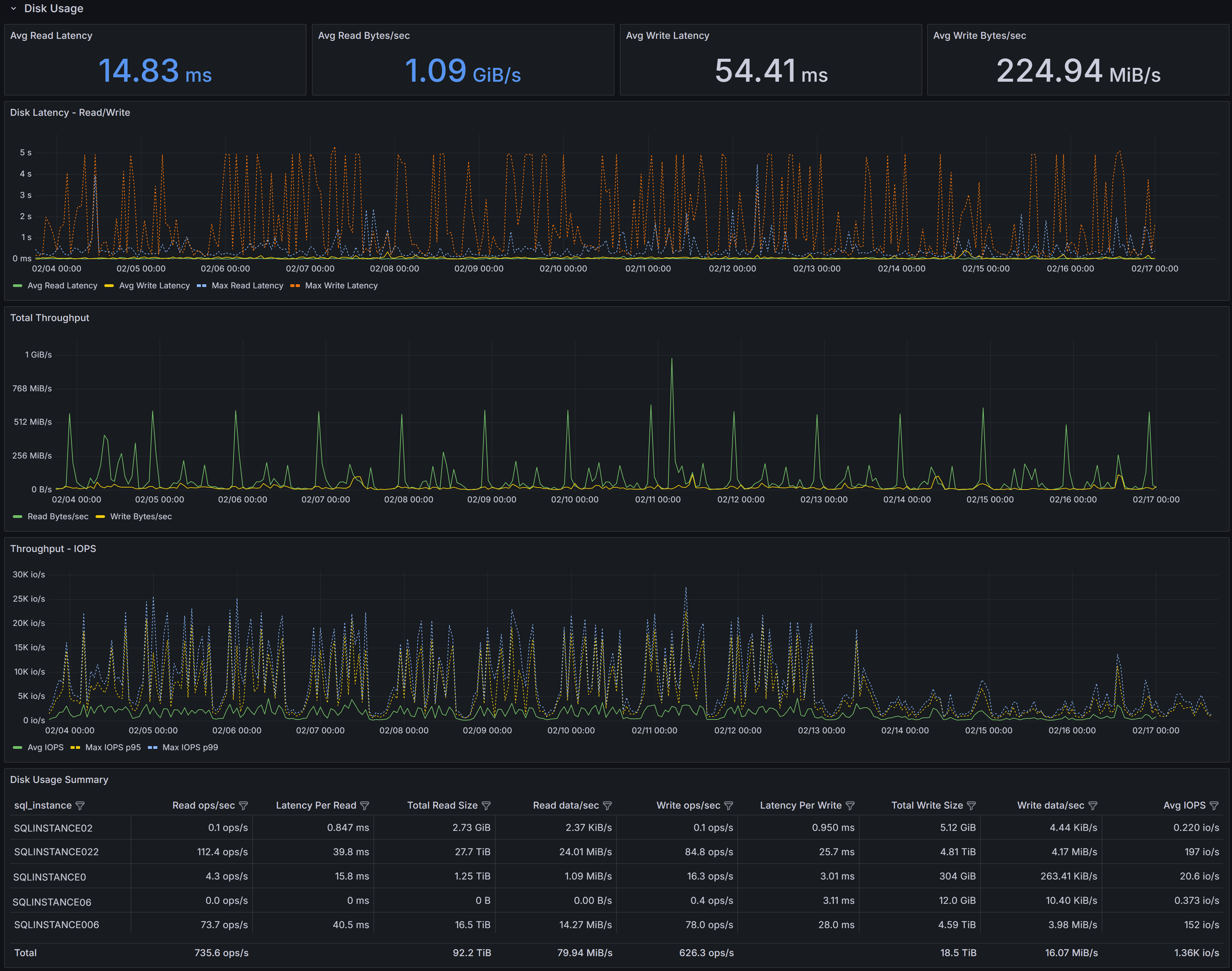

Database Volume I/O

Disk I/O performance metrics for volumes hosting database files, including latency, throughput, and operations per second

Disk I/O performance metrics for volumes hosting database files, including latency, throughput, and operations per second

This section focuses on the I/O performance characteristics of the volumes hosting your databases.

Understanding disk latency and throughput is essential for diagnosing performance problems related to storage.

The Average Data Latency - Reads chart displays the average read latency in milliseconds for data files.

Read latency measures how long it takes for SQL Server to retrieve data from disk when it is not available

in the buffer cache. For modern SSD storage, read latency should typically be under 5 milliseconds.

Higher values may indicate storage performance issues, I/O contention, or inefficient queries

causing excessive physical reads.

The Average Data Latency - Writes chart shows the average write latency in milliseconds for data files.

Write operations occur when SQL Server flushes dirty pages from the buffer cache to disk during

checkpoint operations or when the lazy writer needs to free up memory. Consistently high write latency

can impact transaction commit times and overall system responsiveness.

The Read ops/sec panel displays the number of read operations per second on data files.

This metric helps you understand the read workload intensity on your storage subsystem.

A sudden increase in read operations may indicate missing indexes, insufficient memory causing more

physical I/O, or changes in query patterns.

The Write ops/sec panel shows the number of write operations per second on data files.

Write operations increase during periods of high transaction activity, bulk data loads, or index maintenance.

Monitoring this metric helps you assess the write workload on your storage and identify periods of peak I/O activity.

The Read Bytes/sec chart represents the throughput in bytes per second for read operations.

This metric, combined with read operations per second, gives you insight into the size of read I/O requests.

Large sequential reads will show higher throughput with fewer operations, while random small reads will

show more operations with lower throughput.

The Write Bytes/sec chart displays the throughput in bytes per second for write operations.

This helps you understand the volume of data being written to disk over time. Monitoring write

throughput is important for capacity planning and ensuring your storage subsystem can handle

the write workload during peak periods.

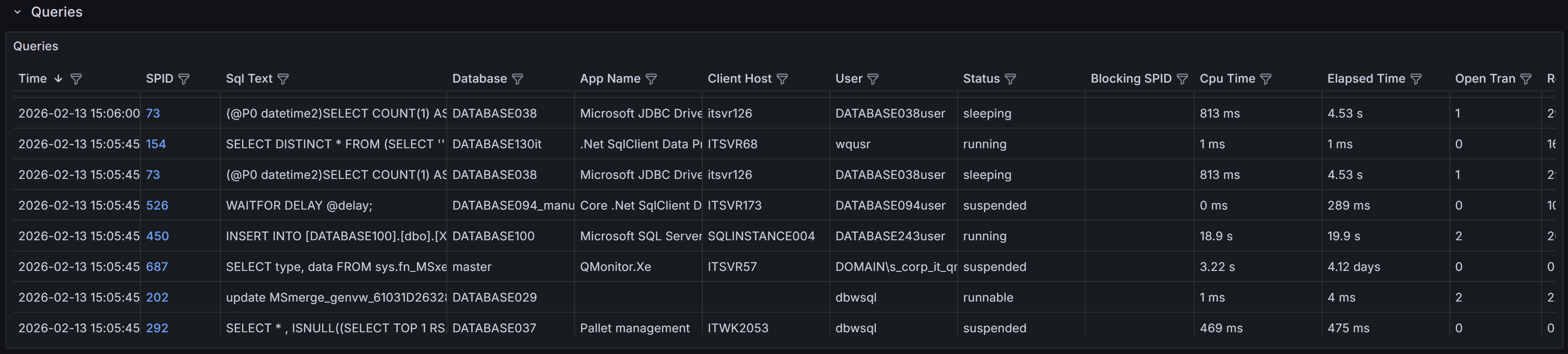

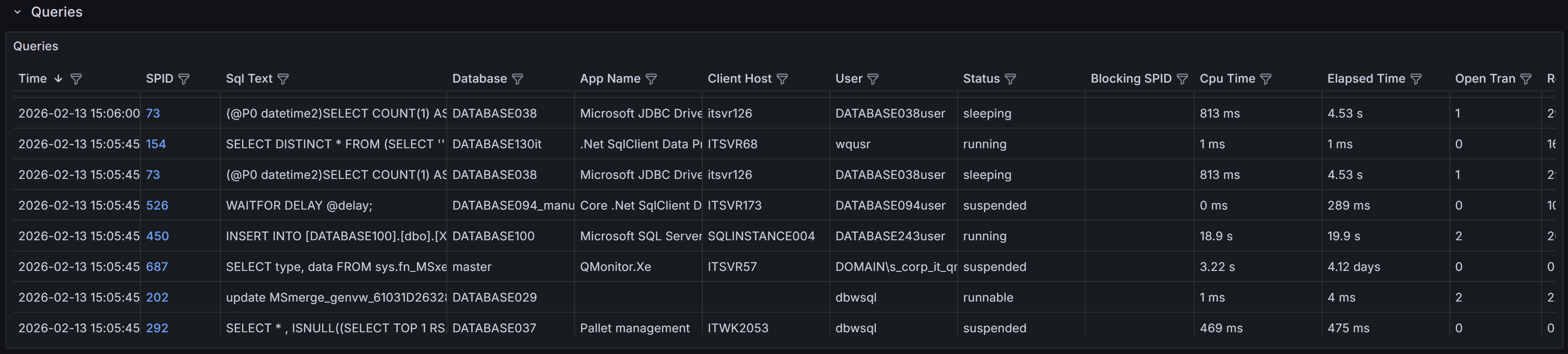

Queries

Queries captured, with details on execution, resource usage, and wait information for troubleshooting performance issues

Queries captured, with details on execution, resource usage, and wait information for troubleshooting performance issues

This section provides visibility into the queries running on the instance. The Queries table at the

bottom of the dashboard lists all queries captured during the selected time range. A capture is performed every 15 seconds,

and the table is updated in real time as new queries are captured, according to the refresh interval defined for the dashboard.

This table is a powerful tool for identifying and investigating problematic queries that may be impacting the performance

of your SQL Server instance. It is also a great way to troubleshoot performance issues in real time or in the past,

by selecting a specific time range.

The table includes the following columns to help you identify and investigate problematic queries:

- Time shows the timestamp when the query was captured.

- SPID (Server Process ID) identifies the session that is executing the query. Click on the SPID to show more details about the

query in the query detail dashboard.

- Sql Text presents a snippet of the query text, truncated to 255 characters. Click on the SPID to see the full statement.

- Database identifies which database the query is running against.

- App Name shows the application name that is connected to the instance and running the query.

This information is provided by the client application when it connects to SQL Server.

- Client Host reveals which machine is executing the query.

- User displays the SQL Server login name used to run the query.

- Status indicates whether the query is currently running, suspended, or completed.

- Blocking SPID shows if the query is blocked by another session, with the session ID of the blocking process.

- Cpu Time displays the cumulative CPU time consumed by the query in milliseconds.

- Elapsed Time shows the total wall-clock time the query has been running.

- Open Tran indicates the number of open transactions for the session, which is important for identifying

long-running transactions that may cause blocking or prevent log truncation.

- Reads shows the number of logical reads performed by the query, which is a key indicator of query efficiency

and resource consumption.

- Writes displays the number of logical writes performed by the query. High write counts may indicate operations

that modify large amounts of data or queries that create temporary objects or worktables.

- Query Hash is a binary hash value that identifies queries with similar logic, even if literal values differ.

This allows you to group and analyze similar queries together to identify patterns in query execution.

Click on the hash value to see all queries with the same query hash in the query detail dashboard.

- Plan Hash is a binary hash value that identifies queries using the same execution plan. Multiple queries with

the same plan hash share the same plan in the cache, which helps you understand plan reuse and cache efficiency.

Click on the hash value to download the execution plan for the query in XML format.

- Wait Type shows the type of wait the session is currently experiencing if it is in a suspended state.

Common wait types include PAGEIOLATCH for disk I/O, CXPACKET for parallelism coordination, and LCK for lock waits.

Understanding wait types helps diagnose the root cause of query delays.

- Wait Resource displays the specific resource the session is waiting for, such as a page ID, lock resource,

or network address. This information is valuable for pinpointing exactly what is causing a query to wait.

- Time since last request shows how long the session has been idle since its last batch completed.

Sessions with long idle times but open transactions may be holding locks unnecessarily and causing blocking issues.

- Blocking or blocked displays whether the session is blocking other queries, being blocked, or both. This helps you quickly

identify sessions involved in blocking chains and prioritize resolution efforts.

Each table column allows you to sort and filter the queries to focus on specific criteria, such as high CPU time,

long elapsed time, or specific wait types. You can also use the filter on the time column to focus on queries captured

at a specific time, such as the latest available sample.

The column filters work more or less like Excel filters: you can select specific values to include or exclude,

or you can use the search box to find specific text in the column.

Use this table to identify queries that may need optimization, such as those with high CPU time,

excessive reads, or long elapsed times. Queries that are frequently blocked or have open transactions

for extended periods may indicate locking or transaction management issues that require investigation.

Click on any SPID to drill down into more details and view the full query text and execution plan when available.

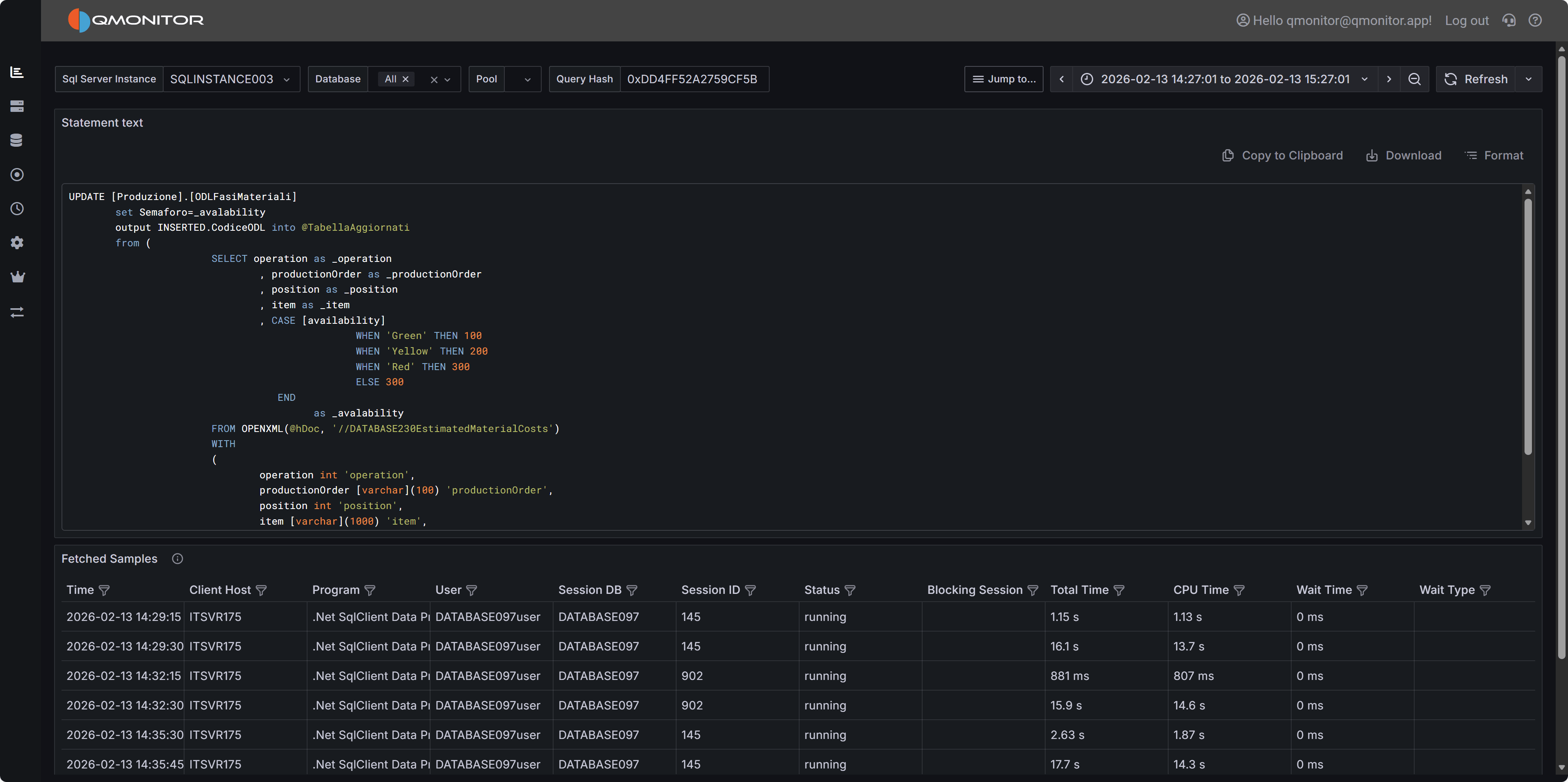

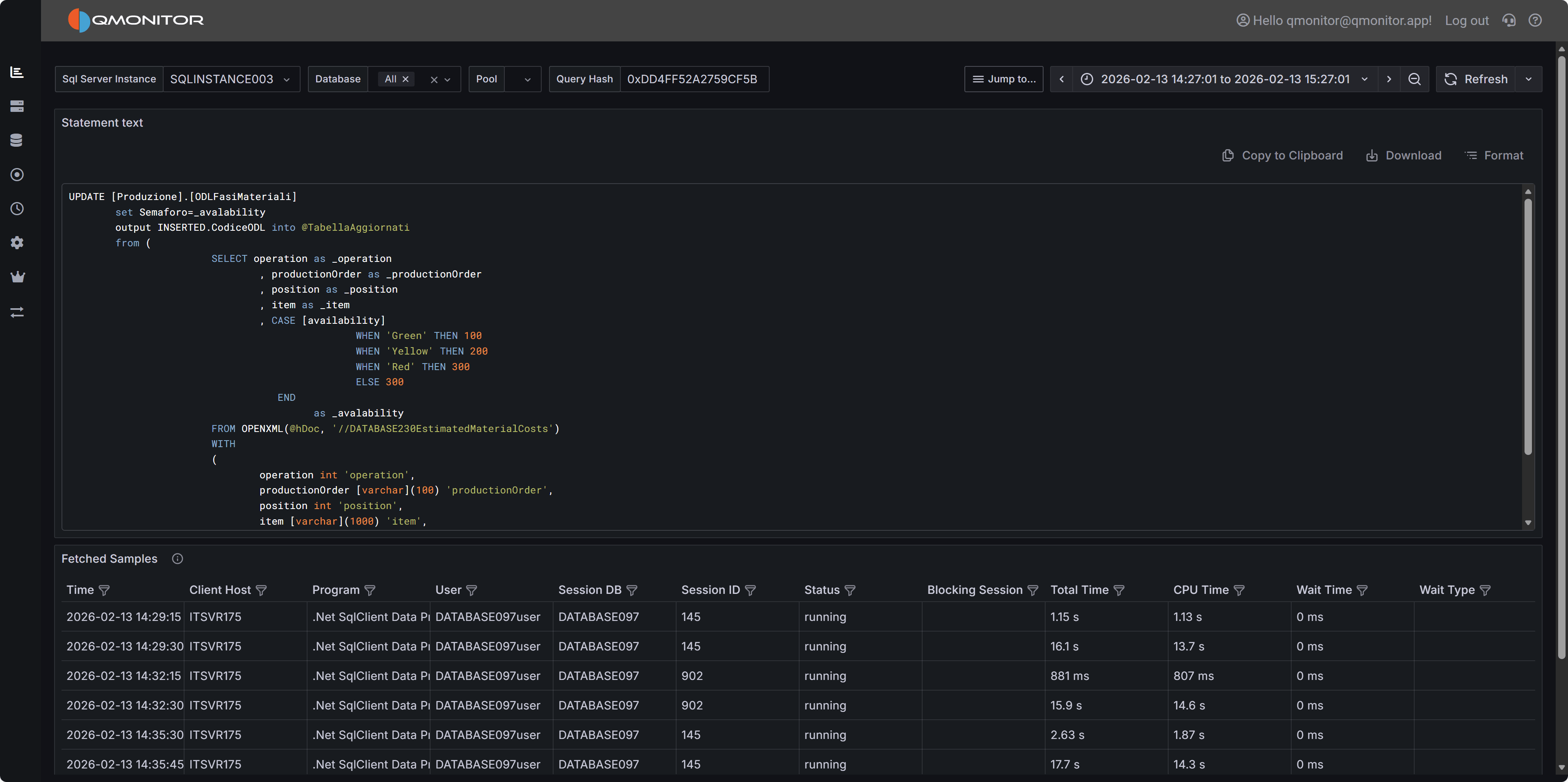

4.1.2.1 - Query Detail

Detailed information about a specific SQL query

The Query Detail dashboard displays details for a single SQL query.

Query Detail Dashboard

Query Detail Dashboard

Dashboard Sections

Query Text and Actions

The top panel shows the query text as QMonitor captured it. Queries generated by

ORMs or written on a single line can be hard to read. Click “Format” to apply

readable SQL formatting.

Click “Copy to Clipboard” to copy the query for running or analysis in external

tools (such as SSMS). Use “Download” to save the query as a .sql file.

Fetched Samples

The table below lists all executions of this query within the selected time range.

QMonitor captures a sample every 15 seconds: long-running queries will produce

multiple samples, and queries running at the instant of capture will produce a

sample as well.

Samples alone may not fully reflect a query’s resource usage or execution time.

For a complete impact analysis, rely on the Query Stats data:

Query Stats.

Note

Be aware that the “Duration” column in this table represents the duration of the query at the

moment of capture, not the total execution time. For long-running queries, this duration may

be shorter than the actual total execution time.Important

Keep in mind that each sample represents a snapshot of the query’s state at the time of capture: the

metrics shown in the table (CPU, Memory, I/O) are not meant to be added together across samples,

but rather to provide insight into the query’s resource usage at different points in time.4.1.3 - Query Stats

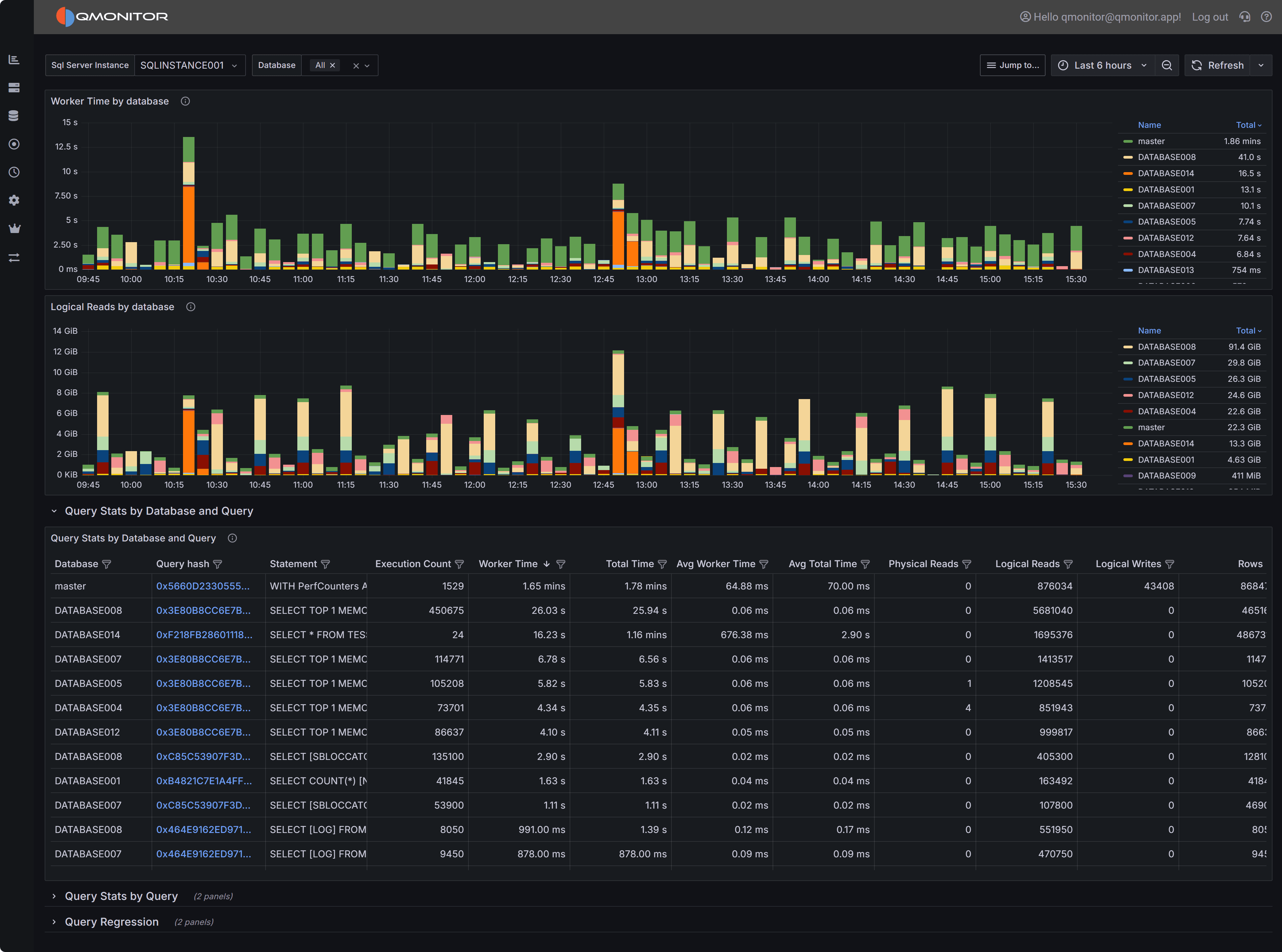

General Workload analysis

The Query Stats dashboard summarizes workload characteristics and surfaces high cost queries so you can

prioritize tuning and capacity decisions. This dashboard is your primary tool for understanding which

queries consume the most resources and where optimization efforts will have the greatest impact.

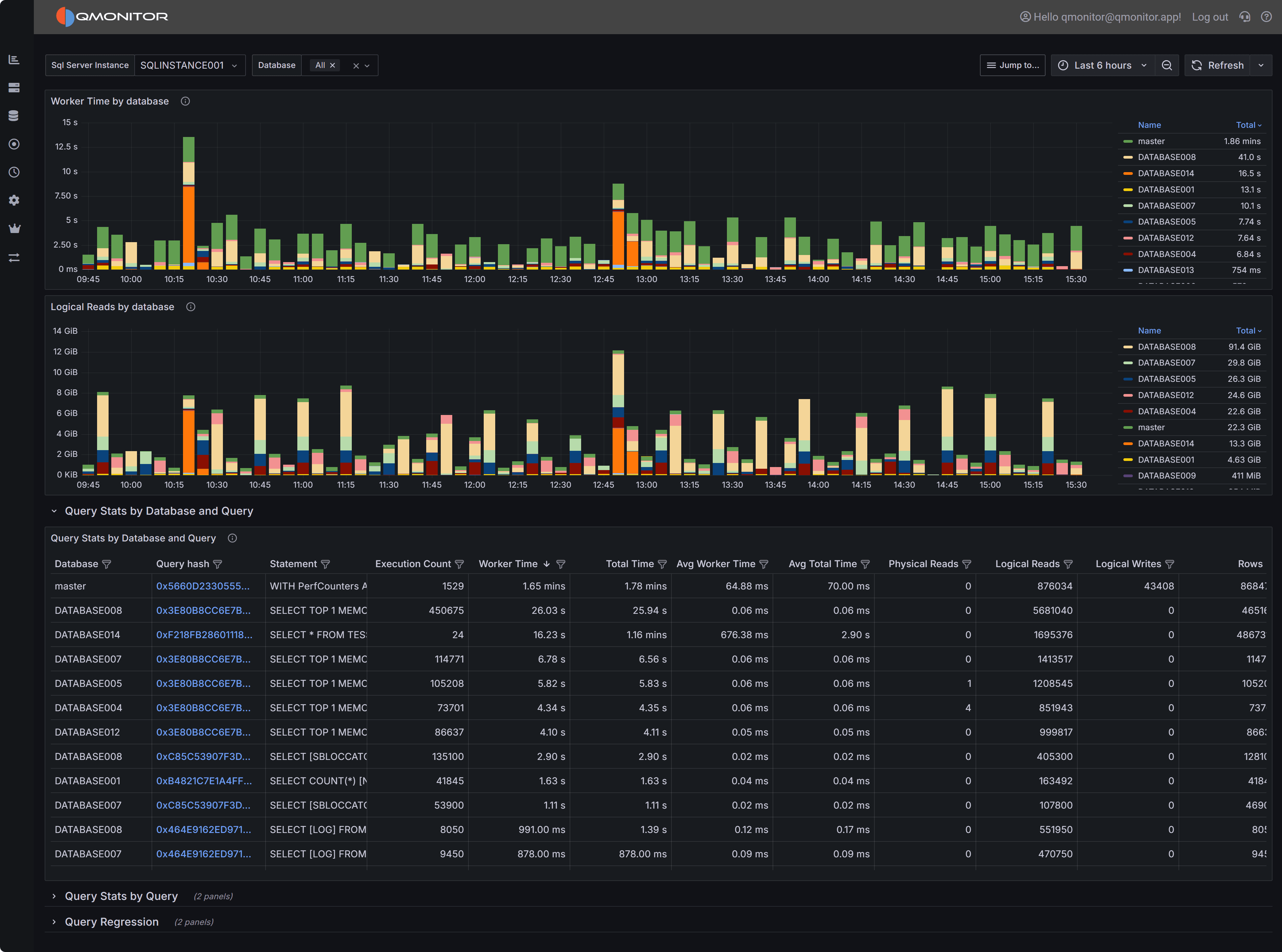

Query Stats Dashboard showing workload overview and query statistics

Query Stats Dashboard showing workload overview and query statistics

Dashboard Sections

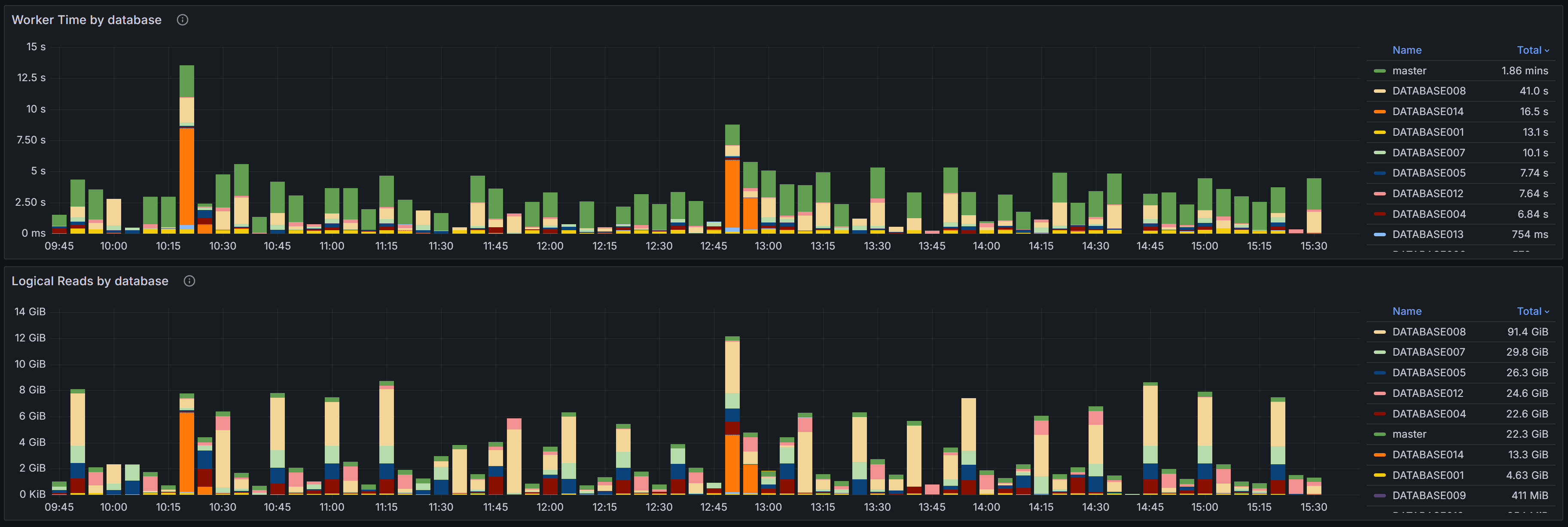

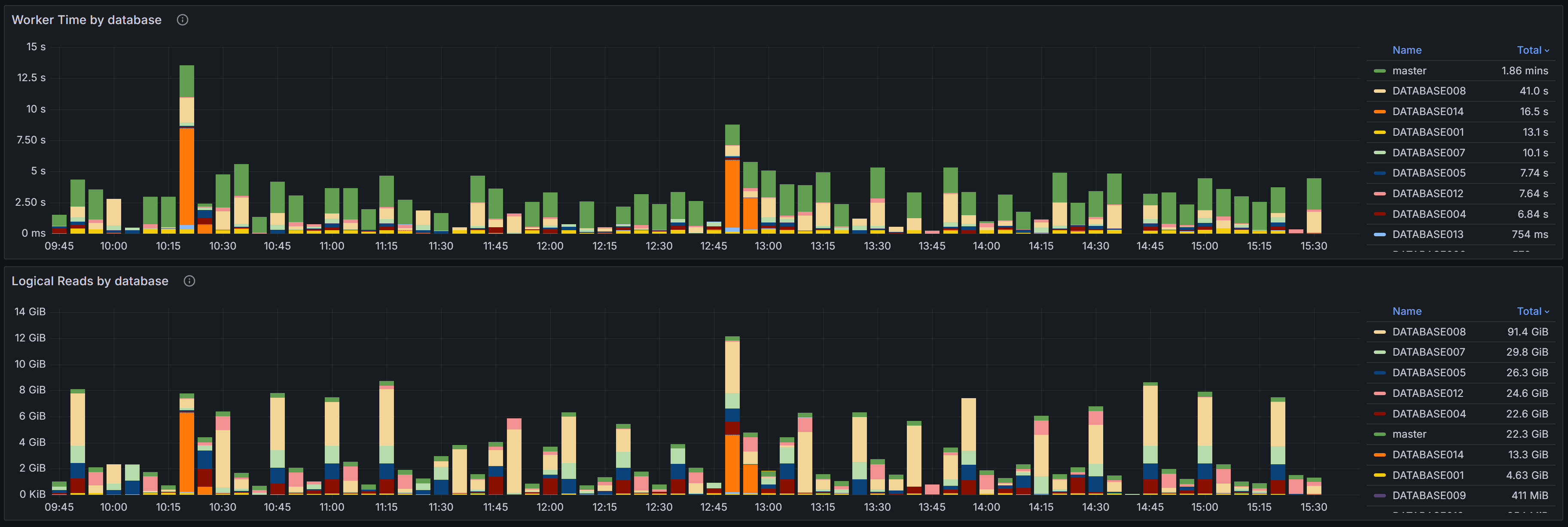

Workload Overview

At the top of the dashboard you have two charts that provide a high-level view of resource consumption

across your databases.

Query Stats Overview

Query Stats Overview

The Worker Time by Database chart shows the cumulative CPU time consumed by queries in each database

during the selected time range. Worker time represents the actual CPU cycles spent executing queries,

making it one of the most important metrics for understanding which databases are driving CPU usage on

your instance. By analyzing this chart over time, you can identify databases that consistently consume

high CPU resources or spot sudden increases that may indicate new workloads or inefficient queries.

This information is valuable when planning capacity, troubleshooting performance issues, or identifying

which databases deserve the most tuning attention.

The Logical Reads by Database chart displays the number of logical page reads performed by queries in

each database. Logical reads measure how many 8KB pages SQL Server accessed from memory or disk to

satisfy query requests. High logical reads indicate either large result sets, missing indexes forcing

table scans, or inefficient query patterns that read more data than necessary. Unlike physical reads

which measure actual disk I/O, logical reads capture all data access regardless of whether the page

was in cache or required a disk read. Databases with high or rising logical reads may suffer from I/O

pressure, especially if memory is limited and pages must be read from disk frequently. Use this chart

to compare databases and track whether optimization efforts are reducing unnecessary data access.

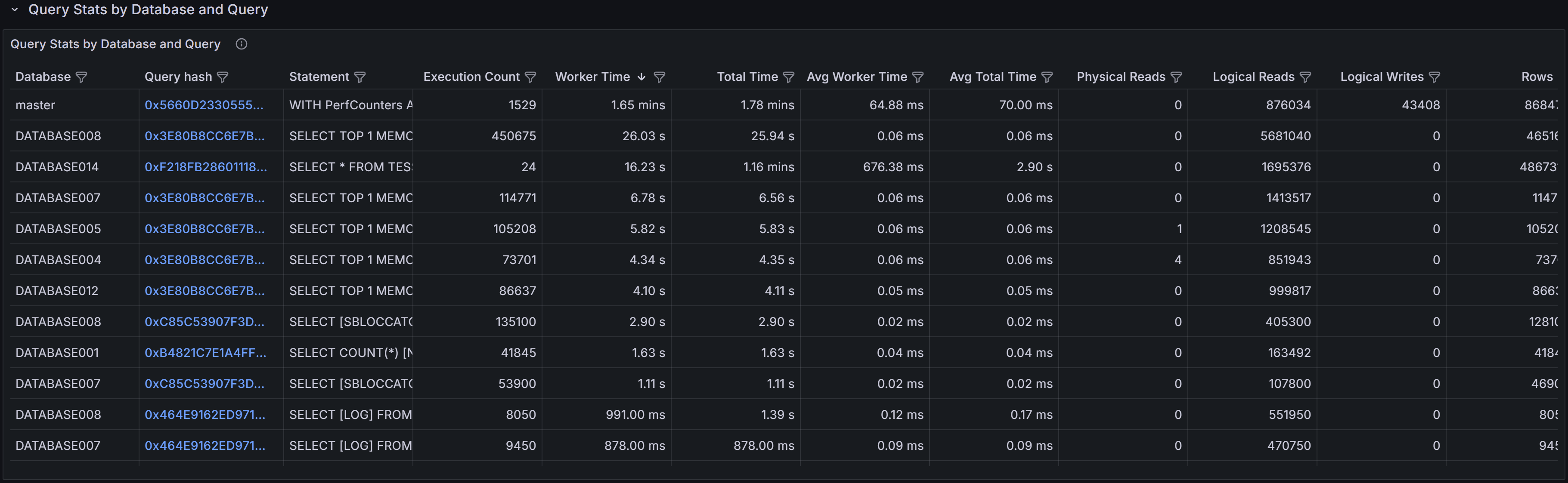

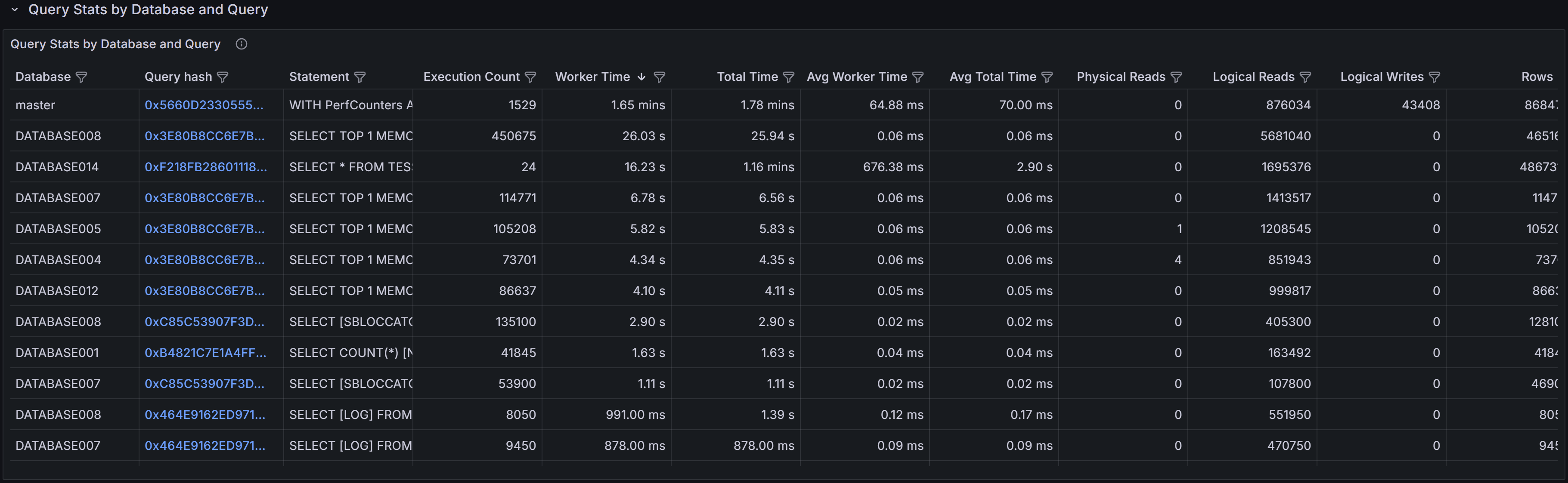

Query Stats by Database and Query

Query Stats By Database and Query

Query Stats By Database and Query

This section shows the top queries grouped by both database and query text. Each row in the table

represents a specific query running in a specific database, allowing you to drill down into the most

resource-intensive queries within individual databases.

The table includes several key metrics to help you assess query performance. Worker Time displays the

cumulative CPU time consumed by all executions of this query. Logical Reads shows the total number of

pages read from the buffer pool across all executions. Duration represents the total elapsed wall-clock

time for all executions, which may be higher than worker time when queries wait for resources like locks

or I/O. Execution Count tells you how many times the query has run during the selected time period.

Understanding the relationship between these metrics is crucial for effective tuning. A query with high

cumulative worker time and many executions might benefit from better indexing to reduce the cost per

execution. A query with high worker time but few executions may have an inefficient execution plan that

needs rewriting or better statistics. High duration relative to worker time suggests the query spends

significant time waiting rather than executing, pointing to blocking, I/O latency, or resource contention

issues.

Use the filters at the top of the table to narrow your analysis by database name, application name, or

client host. This helps you focus on specific workloads or troubleshoot issues reported by particular

applications. Sort the table by different columns to identify queries with the highest cumulative cost,

longest individual executions, or most frequent execution patterns. Click any row to open the query

detail dashboard where you can examine the full query text, execution plans, and detailed runtime

statistics.

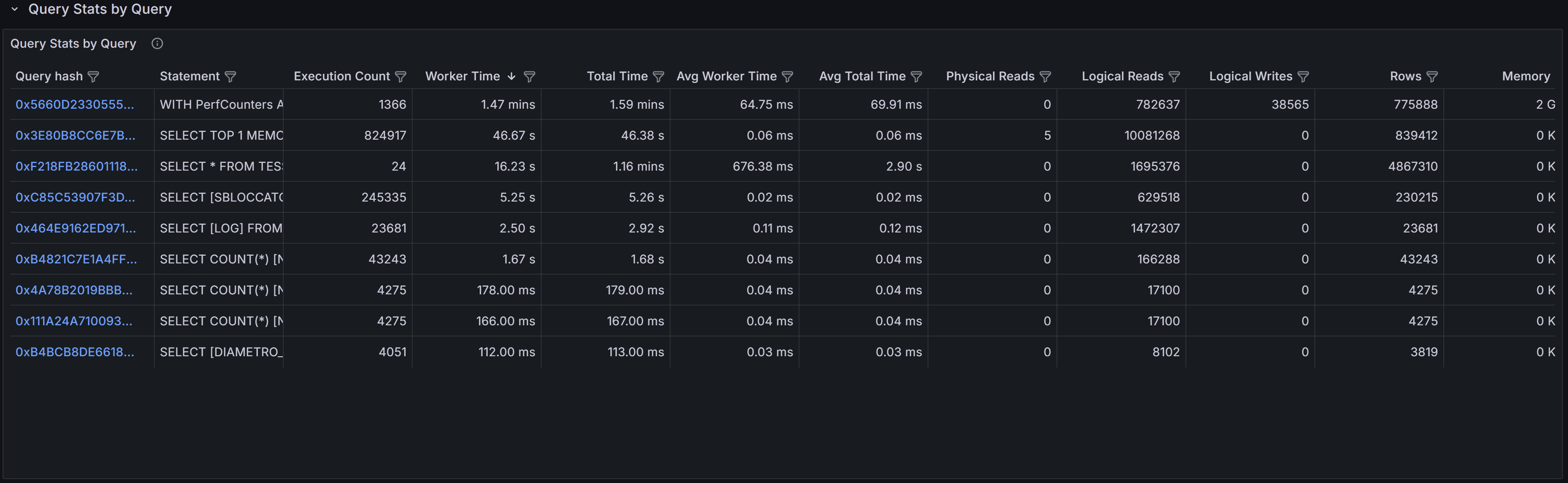

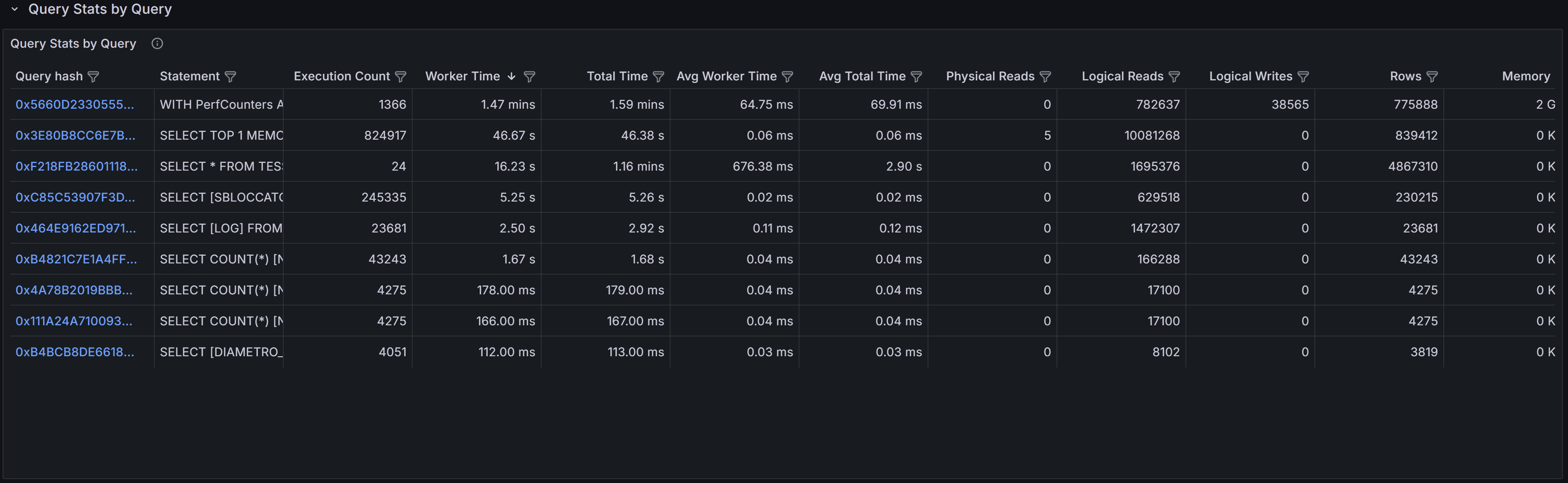

Query Stats by Query

Query Stats By Query

Query Stats By Query

The Query Stats by Query section aggregates statistics across all databases for queries with identical

or similar text. This view is particularly useful for identifying widely-used queries that appear in

multiple databases, a common pattern in multi-tenant applications where the same queries run against

different tenant databases.

By aggregating across databases, you can see the total impact of a specific query pattern on your entire

instance. A query that seems moderately expensive in a single database might actually be consuming

significant resources when its cumulative cost across dozens of tenant databases is considered. This view

helps you prioritize optimization efforts toward queries that will have the broadest impact across your

infrastructure.

The columns in this table provide both cumulative totals and per-execution averages. Total Worker Time

and Total Logical Reads show the combined cost across all databases and executions, while Average Worker

Time and Average Logical Reads indicate the typical cost of a single execution. High averages suggest

inefficient query plans that need tuning, while high totals with low averages indicate frequently-executed

queries that might benefit from caching, result set optimization, or better application-level batching.

This section is especially valuable for detecting candidates for query parameterization. If you see

similar query text with slightly different literal values appearing as separate entries, these queries

may not be using parameterized queries or prepared statements, leading to plan cache pollution and

increased compilation overhead. Converting these to parameterized queries can reduce CPU usage and

improve plan reuse.

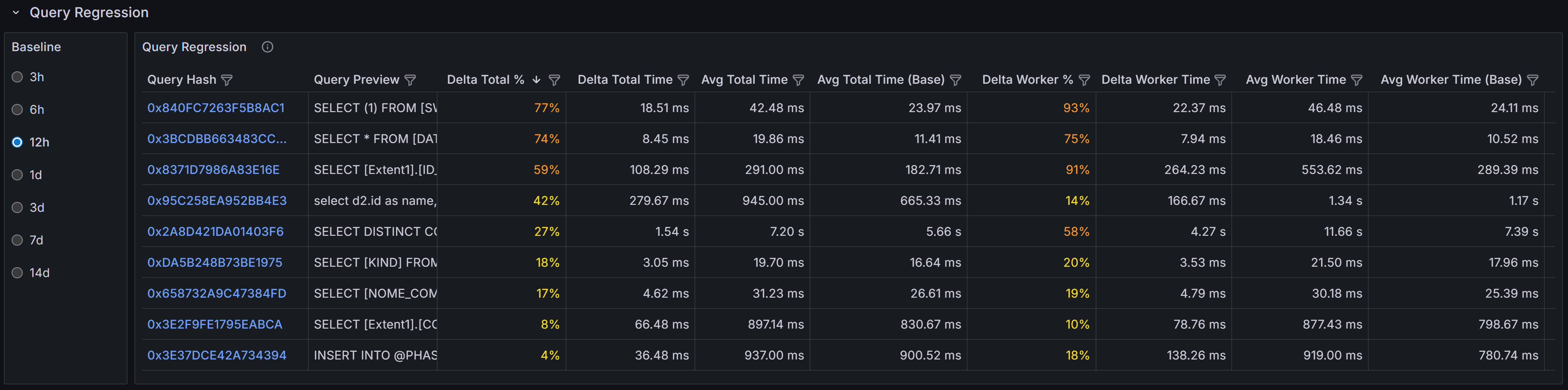

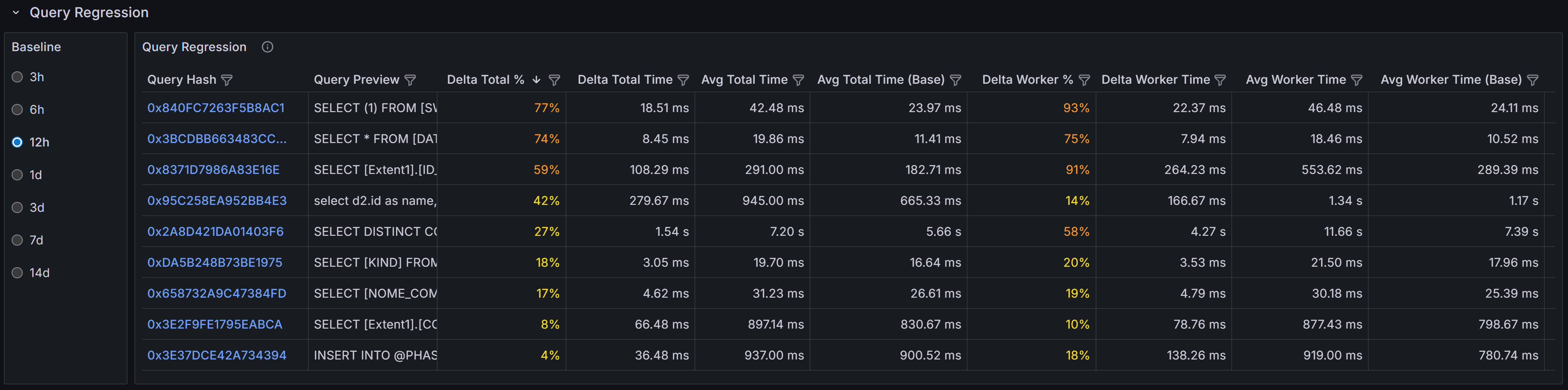

Query Regressions

Query Regressions

Query Regressions

The Query Regressions section highlights queries whose performance has degraded significantly compared

to their historical baseline. Performance regressions often occur after SQL Server chooses a different

execution plan due to statistics updates, parameter sniffing issues, schema changes, or increases in

data volume.

This section compares query performance during the selected time window against previous periods to

identify substantial increases in duration, CPU consumption, or logical reads. Regressions are typically

caused by execution plan changes, shifts in data distribution that make existing plans inefficient,

increased blocking as concurrency grows, or resource contention from other workloads. When a query

suddenly takes longer to execute or consumes more CPU than it did previously, investigating the execution

plan history can reveal whether SQL Server switched from an efficient index seek to a costly table scan,

or from a nested loop join to a less optimal hash join.

Click on a query hash value to drill into the detailed execution history for that query. The query detail

view shows historical execution plans, runtime statistics over time, and the complete query text. By

comparing current and historical plans side by side, you can identify exactly what changed and decide

whether to force a specific plan, update statistics, add missing indexes, or rewrite the query to avoid

plan instability.

Query regressions are particularly important to monitor because they represent sudden performance changes

that may not be caused by code changes. A query that worked well for months can suddenly become a

performance problem without any application deployment, making these issues challenging to diagnose

without historical performance data.

Data Sources and Query Store Integration

Query statistics displayed in this dashboard are gathered from two primary sources depending on your

SQL Server configuration and version.

QMonitor continuously captures query execution data through snapshots of the query stats DMVs,

providing query statistics even when Query Store is not available or disabled. This capture

gives you visibility into query performance across all SQL Server versions and editions that QMonitor

supports.

When Query Store is enabled on your databases, QMonitor integrates Query Store data into the

dashboard to provide richer historical information. Query Store is a SQL Server feature introduced in

SQL Server 2016 that automatically captures query execution plans, runtime statistics, and performance

metrics. It is enabled at the database level and retains historical data of query executions,

even for queries that are no longer in the plan cache or are never available in the cache.

Qmonitor tries to rely on query store data when available, but if query store is disabled or

not supported on your SQL Server version, it will fall back to using the query stats DMVs for real-time data.

While you will still see query performance data, you may have less historical plan information and

fewer options for plan comparison. Enabling Query Store on your production databases is recommended

for comprehensive query performance monitoring and troubleshooting.

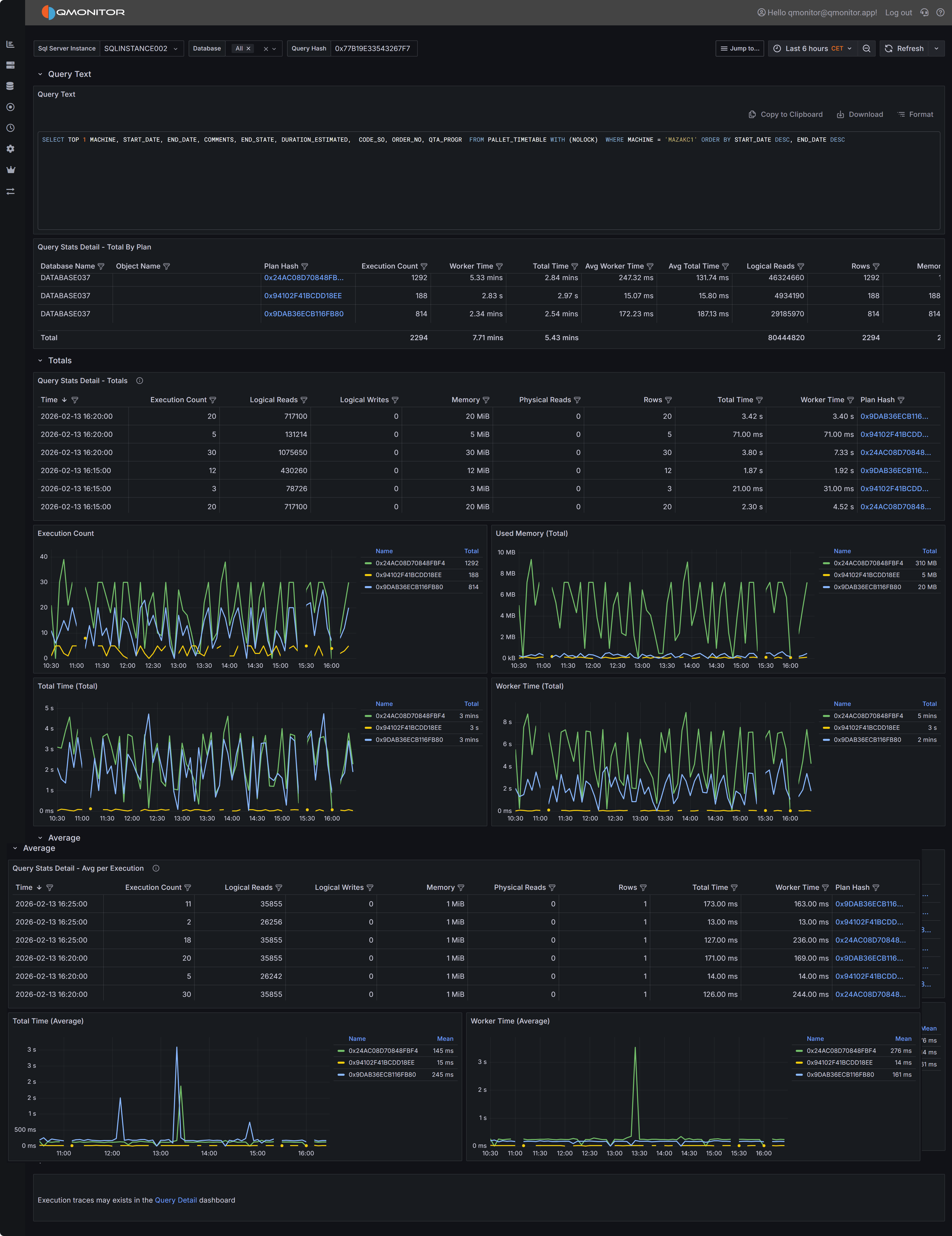

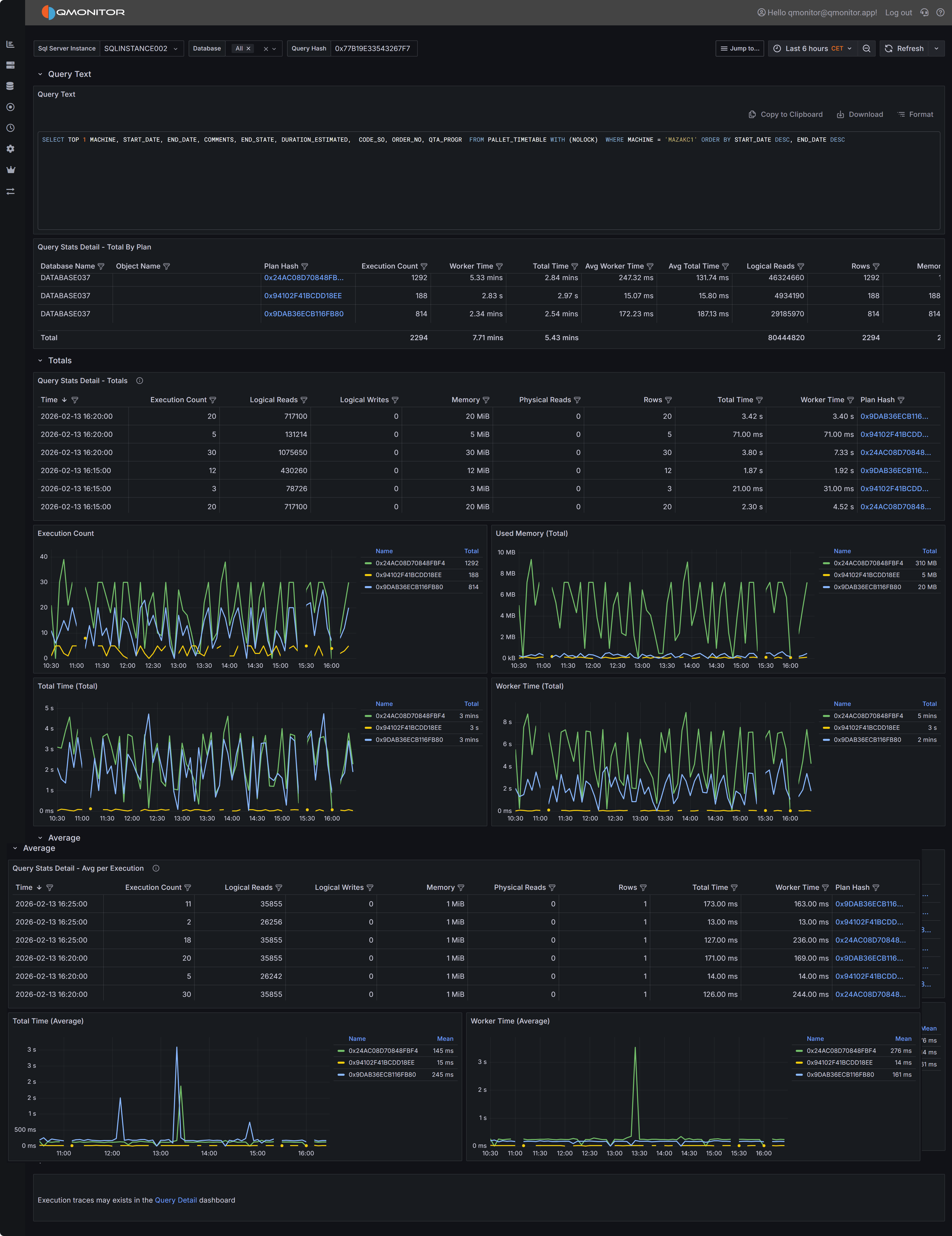

4.1.3.1 - Query Stats Detail

Detailed statistics about a specific SQL server query

The Query Stats Detail dashboard focuses on a single query and shows how each compiled plan for that

query performed over the selected time interval. This dashboard is your primary tool for understanding

query performance variations, comparing execution plans, and diagnosing performance issues at the

individual query level.

Query Stats Detail dashboard showing query text, plan summaries, and performance metrics

Query Stats Detail dashboard showing query text, plan summaries, and performance metrics

Dashboard Sections

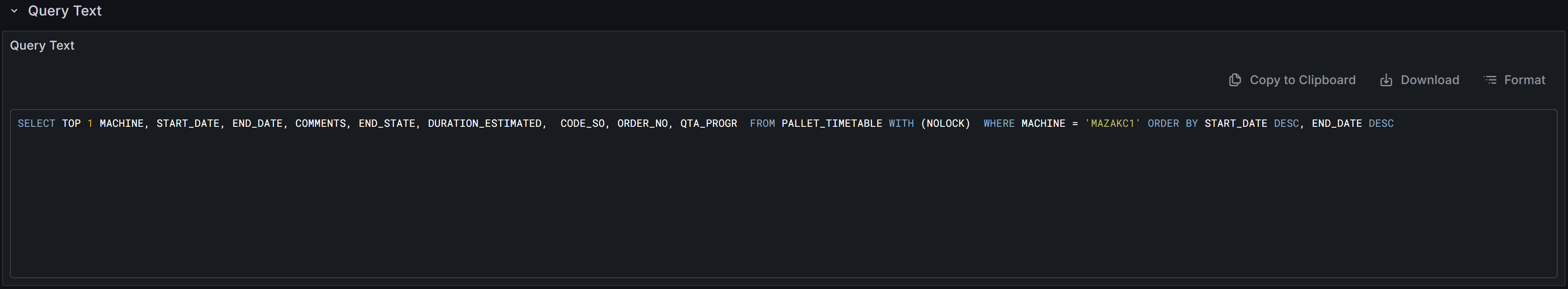

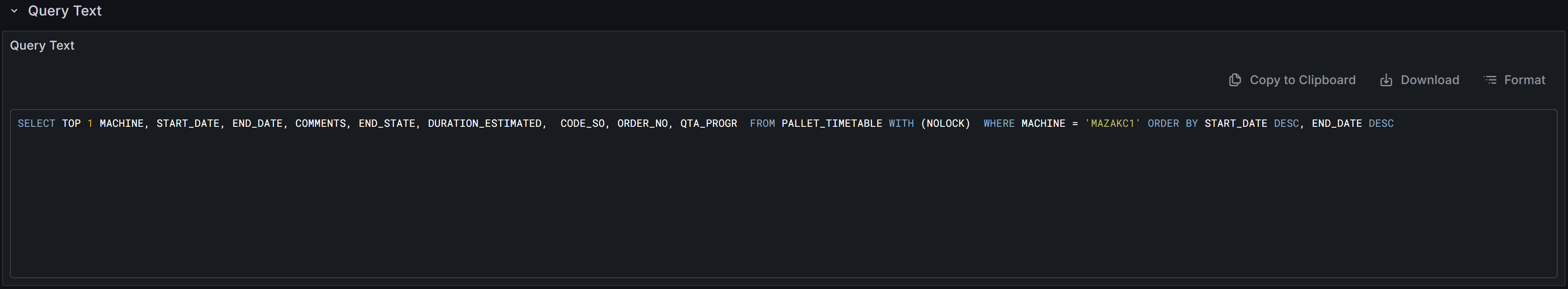

Query Text Display

At the top of the dashboard you will find the complete SQL text for the query you are investigating.

Query text display with copy, download, and format controls

Query text display with copy, download, and format controls

The toolbar above the query text provides several useful functions. The copy button allows you to quickly

copy the entire query text to your clipboard so you can paste it into SQL Server Management Studio or

another query editor for testing and optimization. The download button saves the query text as a SQL file

to your local machine, which is useful when you need to share the query with colleagues or save it for

documentation purposes. The format button reformats the query text with proper indentation and line breaks,

making complex queries easier to read and understand. Properly formatted queries are particularly helpful

when analyzing deeply nested subqueries or queries with many joins and predicates.

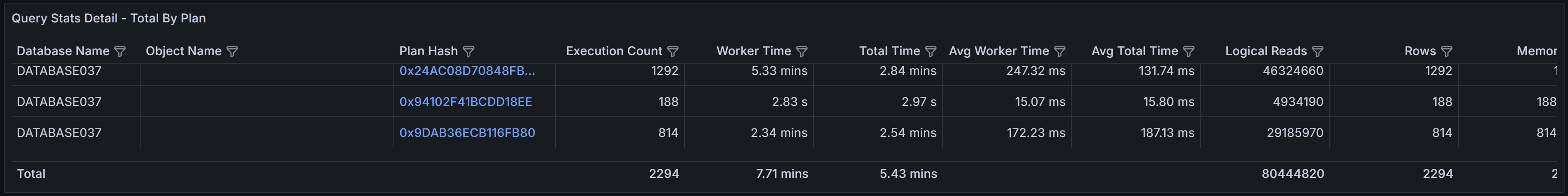

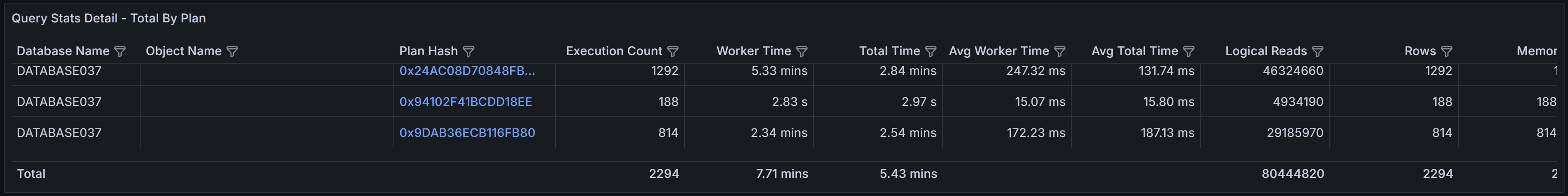

Plans Summary Table (Totals by Plan)

The Plans Summary table provides a high-level comparison of all execution plans that SQL Server has

compiled for this query during the selected time range. Each row in the table represents a distinct

execution plan, identified by its plan hash value.

Plans summary showing execution counts and performance metrics for each plan

Plans summary showing execution counts and performance metrics for each plan

The table includes several key metrics to help you compare plan performance.

- Database Name indicates which database the query ran in

- Object Name shows the name of the stored procedure, function, or view that contains the query, if applicable.

- Execution Count shows how many times each plan was executed during the time range.

- Worker Time displays the total CPU time consumed by all executions of this plan.

- Total Time represents the total elapsed wall-clock time, which includes both execution time and

any waiting time for resources.

- Average Worker Time and Average Total Time show the per-execution cost, helping you identify

plans that are individually expensive versus plans that accumulate high cost through frequent execution.

- Rows indicates the number of rows returned by the query, which can help you identify whether

different plans are returning different result sets or processing different amounts of data.

- Memory Grant shows the total memory allocated to executions of this plan.

This table is particularly valuable for identifying plan variations and understanding their performance

impact. If you see multiple plans with significantly different performance characteristics for the same

query text, this often indicates parameter sniffing issues where SQL Server cached different plans

optimized for different parameter values. Plans with high average worker time or total time deserve

investigation to understand what makes them expensive. Plans with very high execution counts but low

average cost might benefit from application-level caching or query result reuse rather than query-level

optimization.

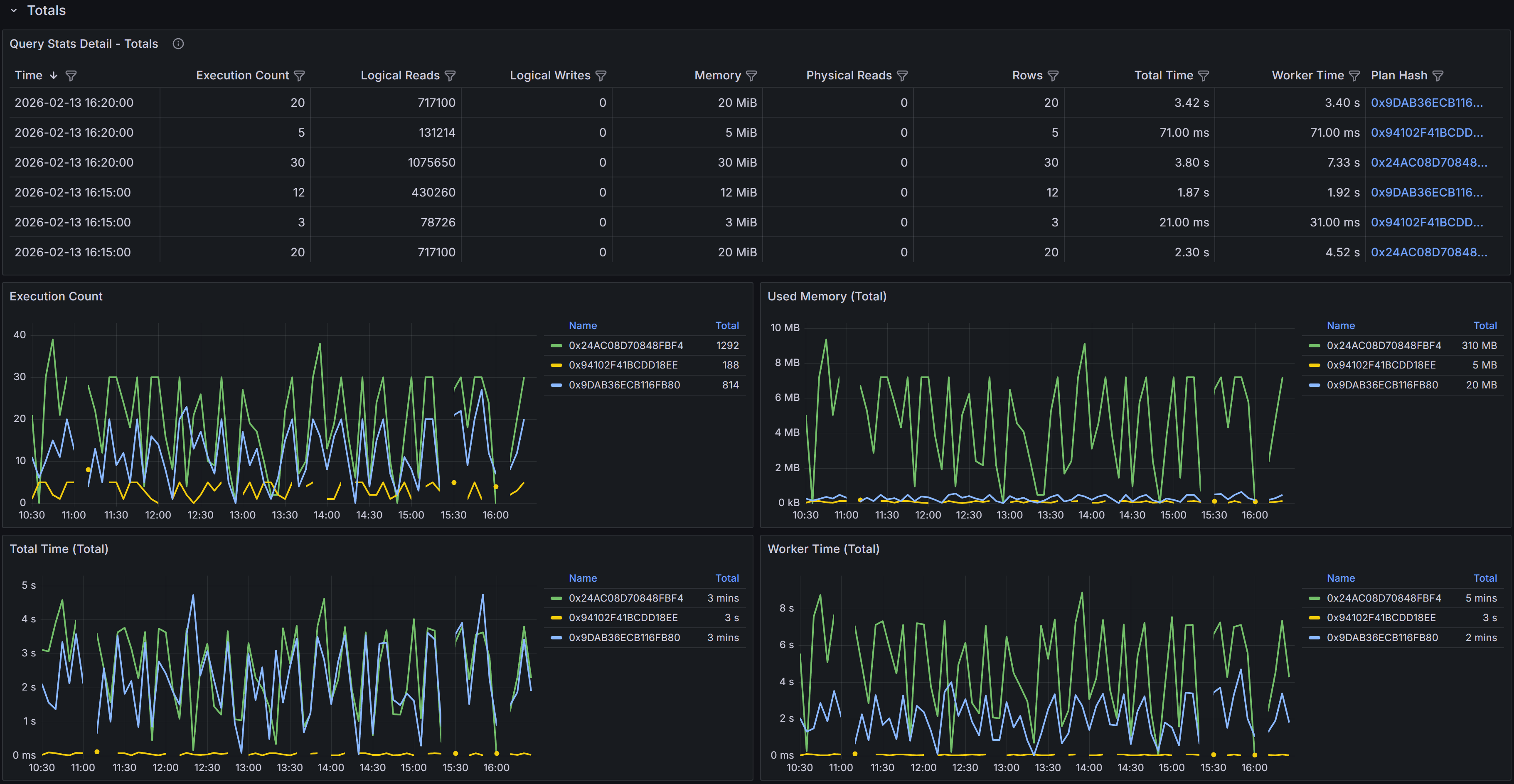

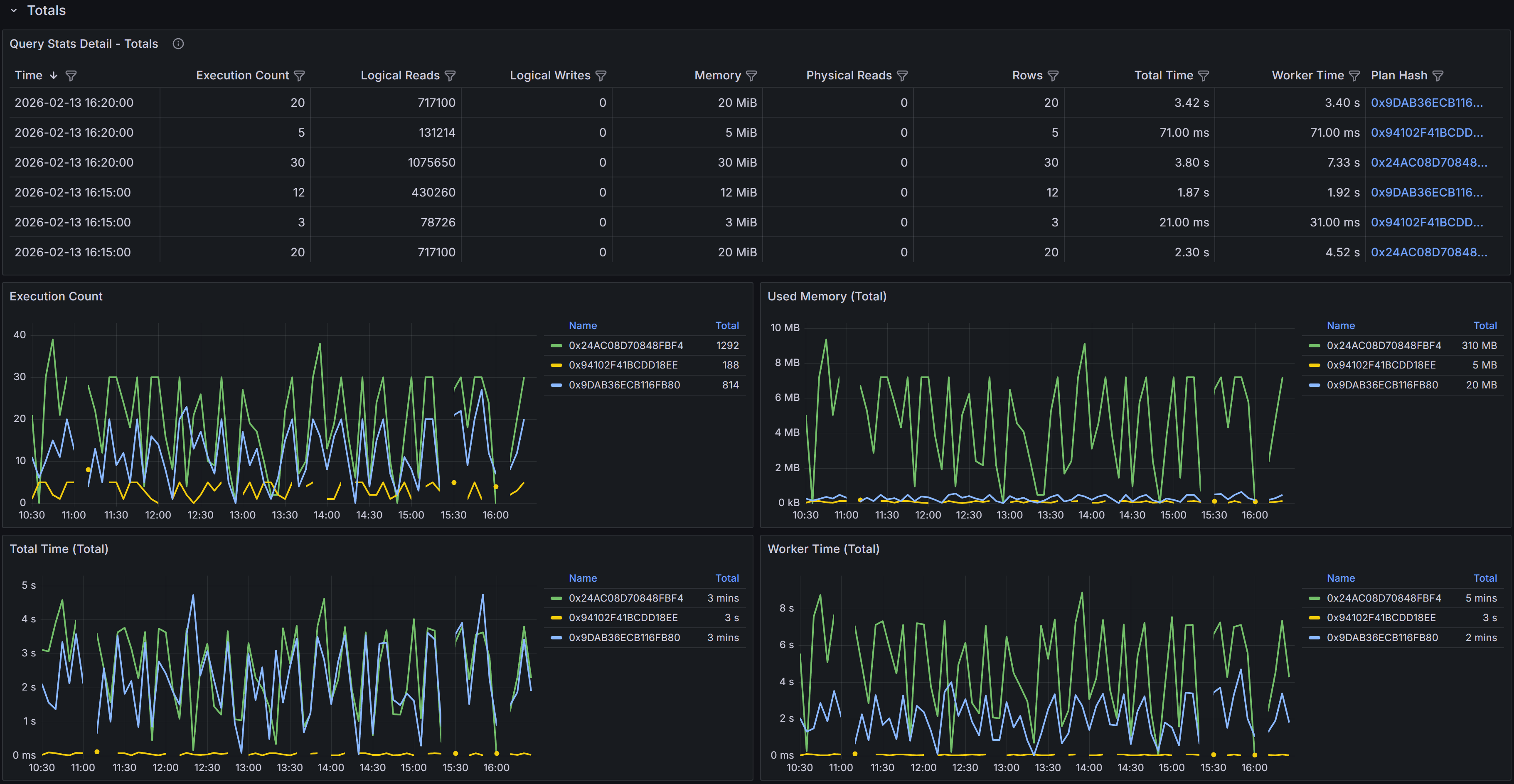

Totals Section

The Totals section displays time-series data showing how query performance varied over the selected time

range. The data is aggregated into 5-minute buckets, with each row representing the cumulative metrics

for all query executions that occurred during that 5-minute period.

Time-series table and charts showing cumulative query performance metrics

Time-series table and charts showing cumulative query performance metrics

The time-series table includes detailed metrics for each 5-minute bucket.

- Time shows when the 5-minute bucket started.

- Execution Count indicates how many times the query ran during that period.

- Logical Reads displays the total number of 8KB pages read from memory during all executions in the bucket.

- Logical Writes shows the total pages written.

- Memory represents the cumulative memory grants for all executions.

- Physical Reads indicates how many pages had to be read from disk because they were not in the buffer cache.

- Rows shows the total number of rows returned by all executions.

- Total Time represents the cumulative elapsed time for all executions

- Worker Time shows the cumulative CPU time.

- Plan Hash identifies which plan was used during each sample period.

The Plan Hash values in the table are clickable links. When you click a plan hash, QMonitor downloads

the execution plan as a .sqlplan file that you can open in SQL Server Management Studio.

The charts in the Totals section visualize how query performance varied over time and how different plans

contributed to resource consumption. The Execution Count by Plan chart shows how frequently each plan was

executed during each time bucket, helping you understand plan usage patterns. The Memory by Plan chart

displays memory grant trends, which is valuable for identifying queries that request excessive memory or

queries whose memory requirements vary significantly over time. The Total Time by Plan and Worker Time

by Plan charts show how much elapsed time and CPU time each plan consumed, making it easy to spot periods

when query performance degraded or when a particular plan dominated resource usage.

Tip

Use these charts to identify performance patterns and correlate them with other events. If you see a sudden

spike in worker time or total time at a specific point in time, you can investigate what changed at that

moment. Did SQL Server switch to a different execution plan? Did the query start receiving different

parameter values? Did concurrent workload increase and cause resource contention? The time-series view

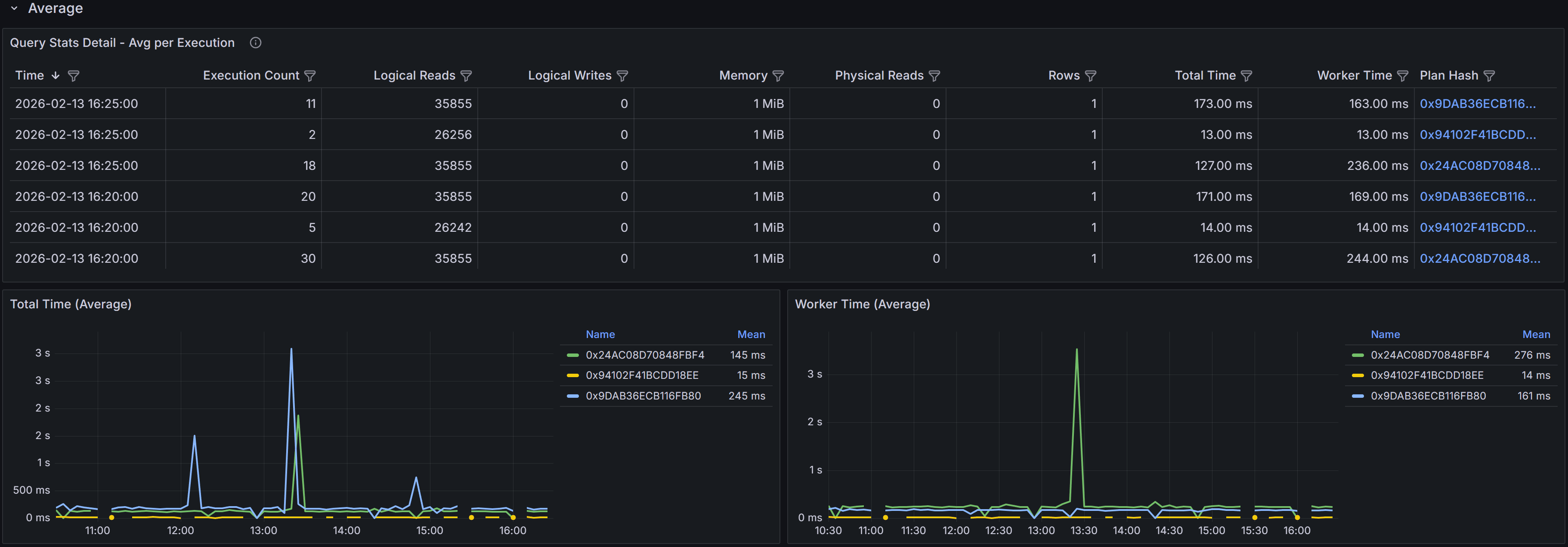

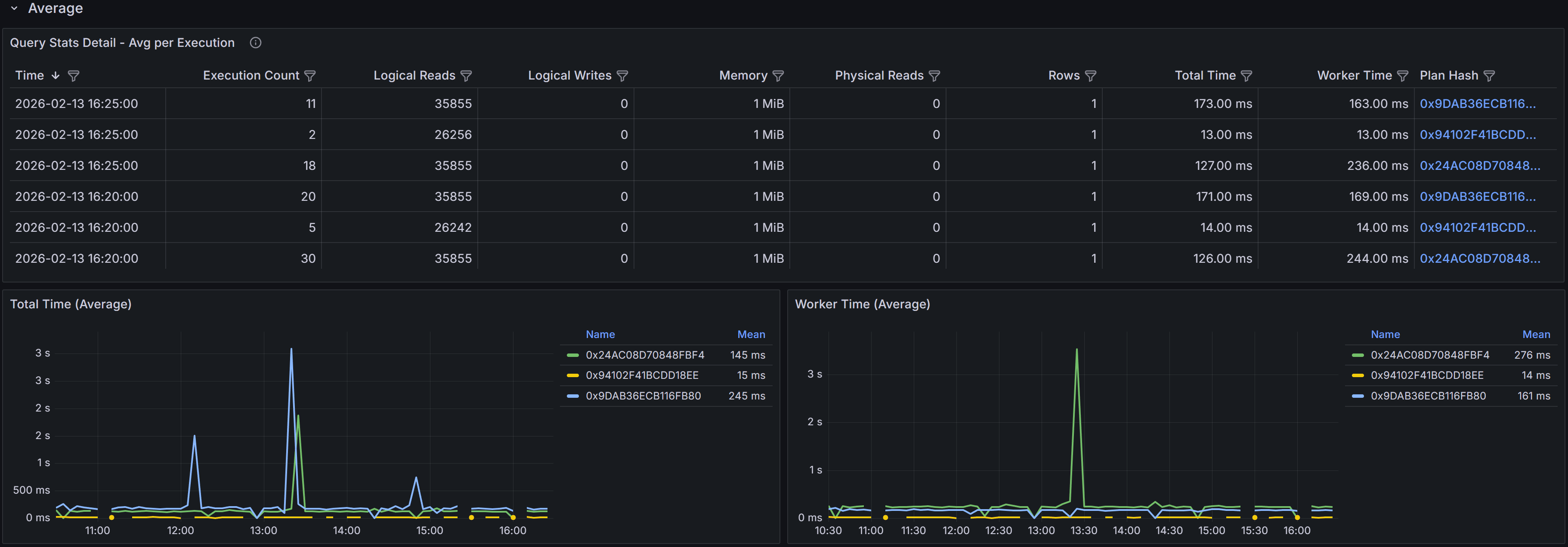

provides the temporal context needed to answer these questions.Averages Section

The Averages section presents the same time-series data as the Totals section, but with metrics averaged

per execution rather than aggregated cumulatively. This view is particularly valuable for understanding

the per-execution cost of the query and identifying when individual executions became more expensive.

Time-series table and charts showing average per-execution metrics

Time-series table and charts showing average per-execution metrics

The time-series table in the Averages section shows metrics averaged across all executions that occurred

during each 5-minute bucket. For example, if the query executed 100 times during a 5-minute period with

a total worker time of 50,000 milliseconds, the average worker time would be 500 milliseconds per execution.

This per-execution perspective helps you identify whether query performance degradation is due to the

query becoming inherently more expensive or simply running more frequently.

The columns mirror those in the Totals table: Sample Time, Execution Count, averaged Logical Reads,

Logical Writes, Memory, Physical Reads, Rows, Total Time, Worker Time, and Plan Hash. The Execution Count

is not averaged since it represents the number of times the query ran, but all other metrics show the

average value per execution during that time bucket.

The charts in the Averages section focus on per-execution cost trends. The Total Time (avg) by Plan chart

shows how the average elapsed time per execution varied over time for each plan. The Worker Time (avg) by

Plan chart displays the average CPU time per execution. These charts help you distinguish between

performance issues caused by increased query frequency versus issues caused by increased per-execution cost.

Example

For example, if the Totals section shows high cumulative worker time but the Averages section shows low

average worker time per execution, the high total cost is due to query frequency rather than query

efficiency. In this case, application-level solutions like caching, query result reuse, or reducing

unnecessary query executions might be more effective than query optimization.

Conversely, if the Averages

section shows high per-execution cost, the query itself is expensive and needs optimization through better

indexes, query rewrites, or plan improvements.

When analyzing query performance using this dashboard, start by examining the Plans Summary table to

understand how many distinct plans exist for the query and whether any plans are significantly more

expensive than others. Multiple plans with different performance characteristics often indicate parameter

sniffing issues where SQL Server cached plans optimized for specific parameter values that may not be

optimal for all parameter combinations.

Use the Totals and Averages charts together to understand performance patterns. High totals with low

averages suggest the query runs frequently but each execution is relatively cheap, pointing to

application-level optimization opportunities. High averages indicate expensive individual executions that

need query-level optimization. Comparing performance across different time periods helps you identify

whether performance degradation was gradual or sudden, which provides clues about the root cause.

Using the Dashboard Effectively

Set the time range selector to focus on the period when performance issues occurred. If you are

investigating a regression that started yesterday, select a time range that includes both before and after

the regression so you can compare plan behavior and metrics. For ongoing performance issues, use a recent

time range like the last few hours to analyze current behavior.

Sort and filter the time-series tables to focus on specific time periods or plans. If you know that

performance degraded at a specific time, filter the table to show only samples from that period. If you

want to compare two different plans, filter by plan hash to isolate their metrics.

Download multiple execution plans when comparing plan variations. Open them side by side in SQL Server

Management Studio to identify exactly what changed between plans. Look for differences in join types,

index selection, join order, and operator choices. Understanding why SQL Server chose different plans

helps you decide whether to update statistics, add indexes, use query hints, or enable plan forcing.

When you identify a specific plan that performs best, consider using Query Store plan forcing to lock the

query to that plan. This prevents SQL Server from choosing suboptimal plans in the future, providing

stable and predictable performance. However, plan forcing should be used carefully and monitored regularly,

as data volume changes or schema changes may eventually make the forced plan suboptimal.

Compare Totals and Averages to determine whether optimization efforts should focus on reducing per-execution

cost or reducing execution frequency. If totals are high but averages are low, work with application teams

to reduce unnecessary query executions, implement caching, or batch operations. If averages are high, focus

on database-level optimization like indexes, query rewrites, or schema changes.

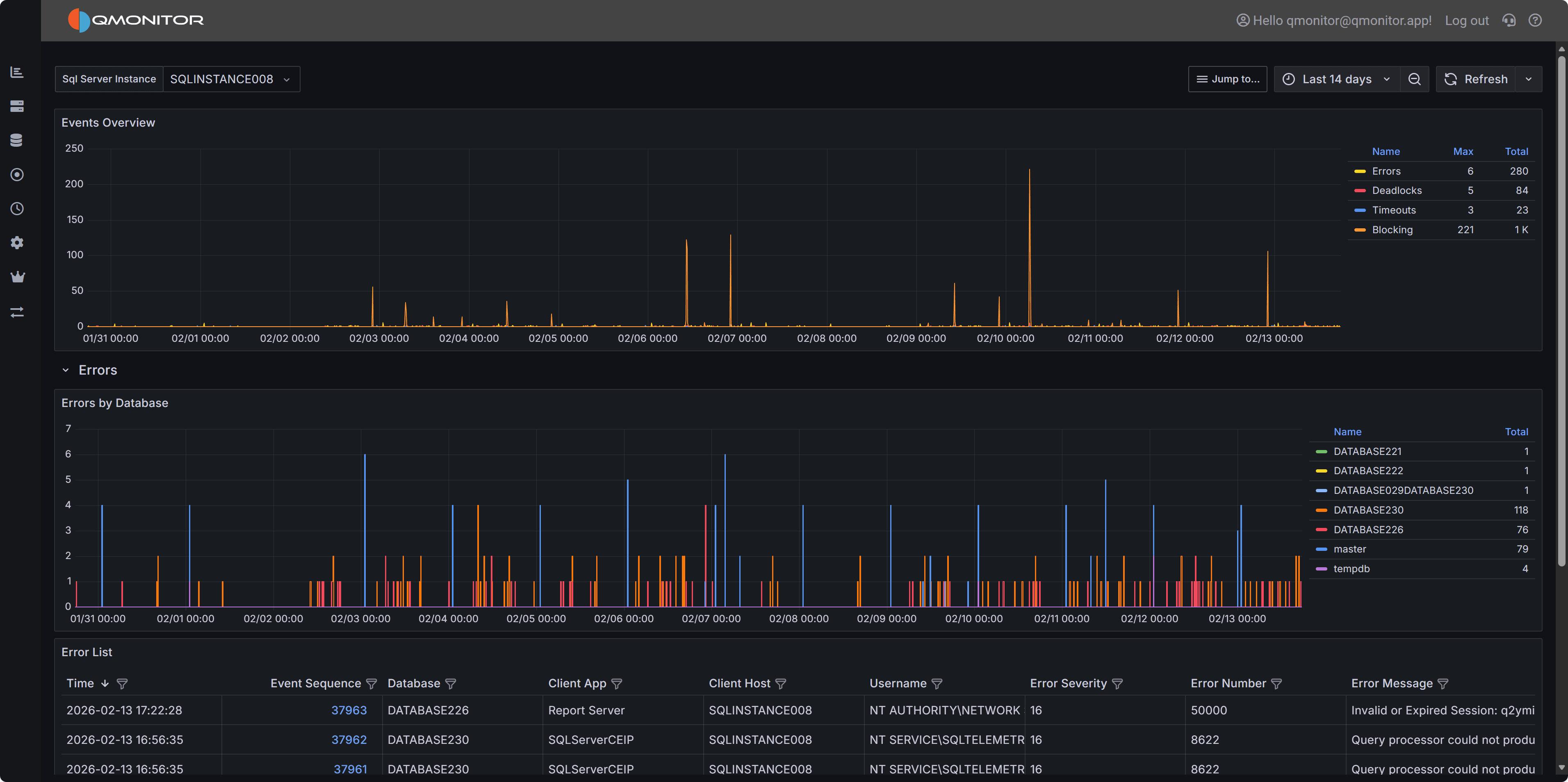

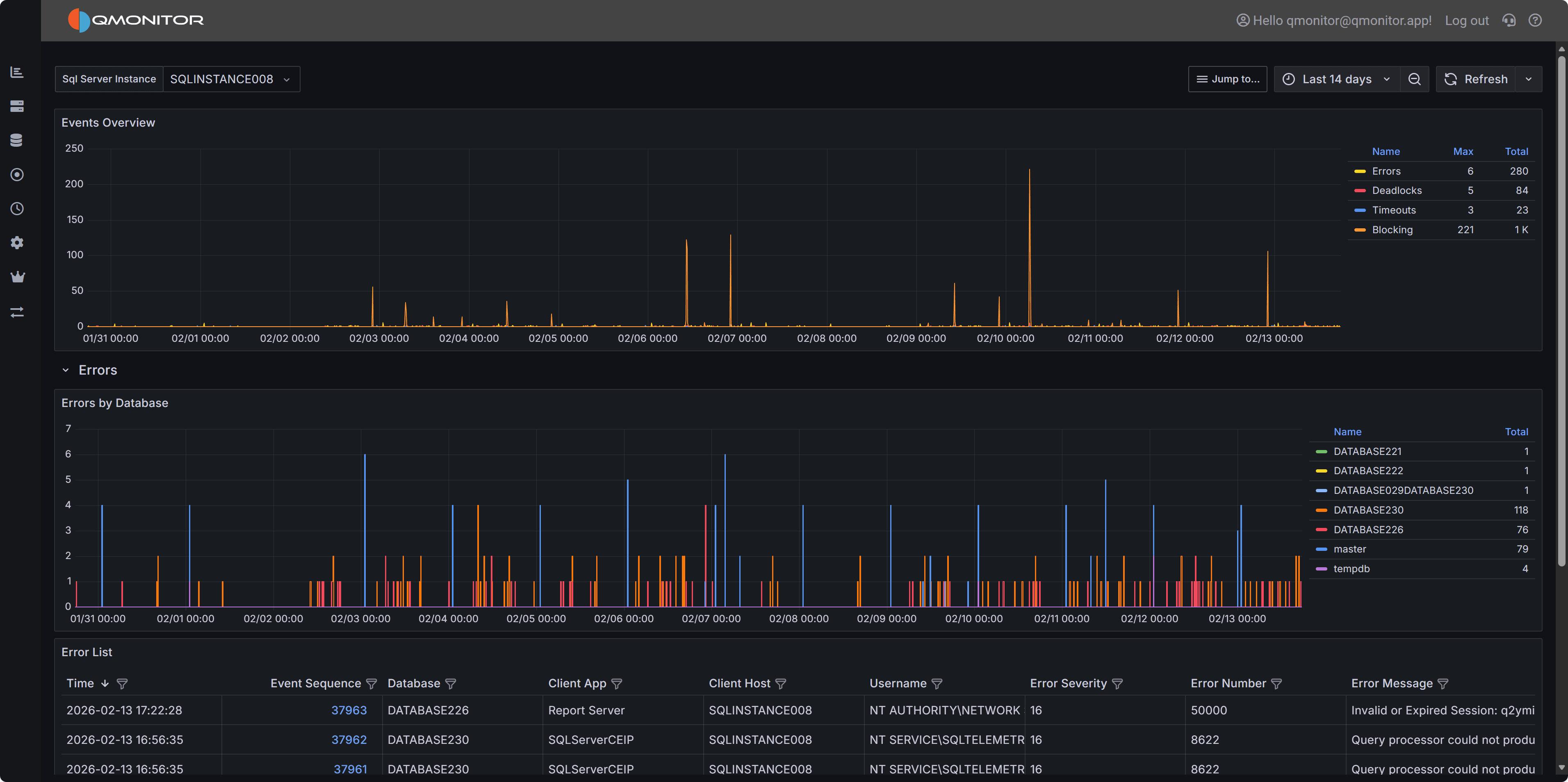

4.1.4 - SQL Server Events

Events analysis

The Events dashboard shows the number of events that occurred on the SQL

Server instance during the selected time range.

SQL Server Events dashboard showing events by type

SQL Server Events dashboard showing events by type

The top chart breaks events down by type:

- Errors

- Deadlocks

- Blocking

- Timeouts

Expand a row to view a chart for that event type by database and a list of

individual events. Click a row’s hyperlink to open a detailed dashboard for

that event type, where you can inspect the event details.

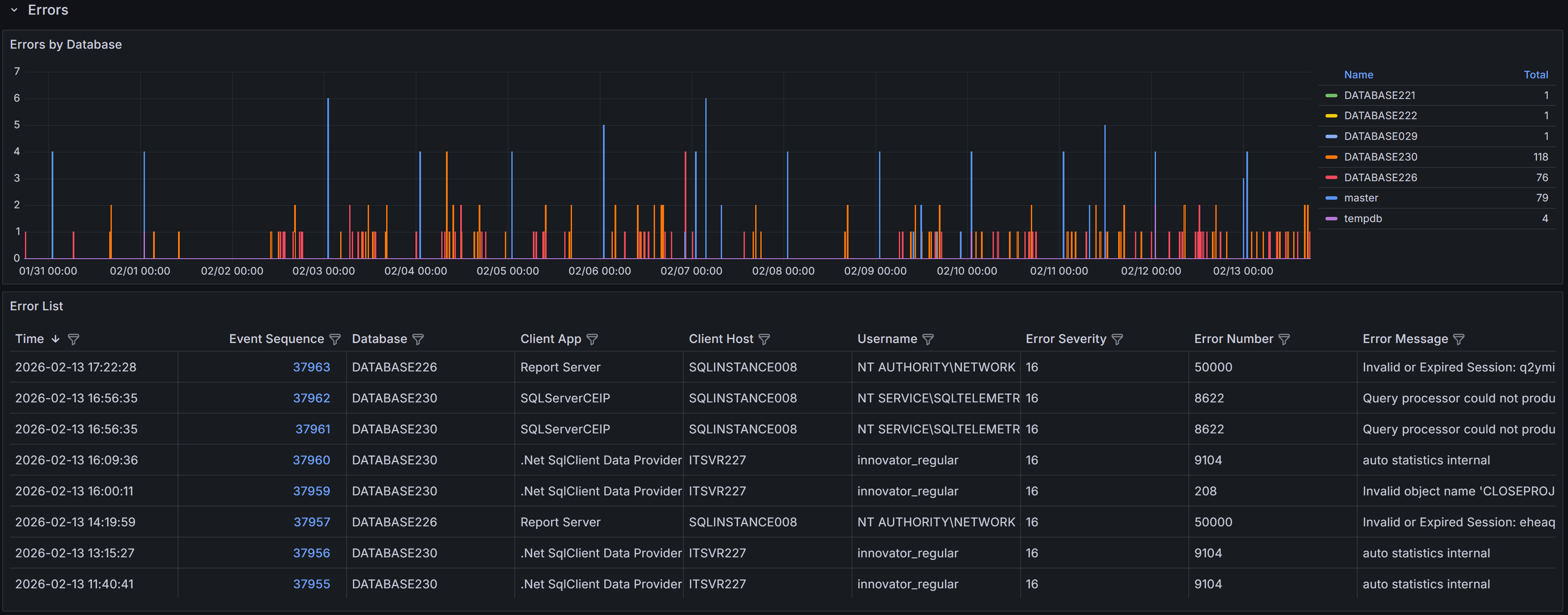

4.1.4.1 - Errors

Details about errors occurring on the instance

The Errors dashboard helps you monitor and diagnose SQL Server errors that may indicate application

issues, security problems, or infrastructure failures. By tracking error patterns over time and

analyzing error details, you can proactively identify and resolve problems before they impact users.

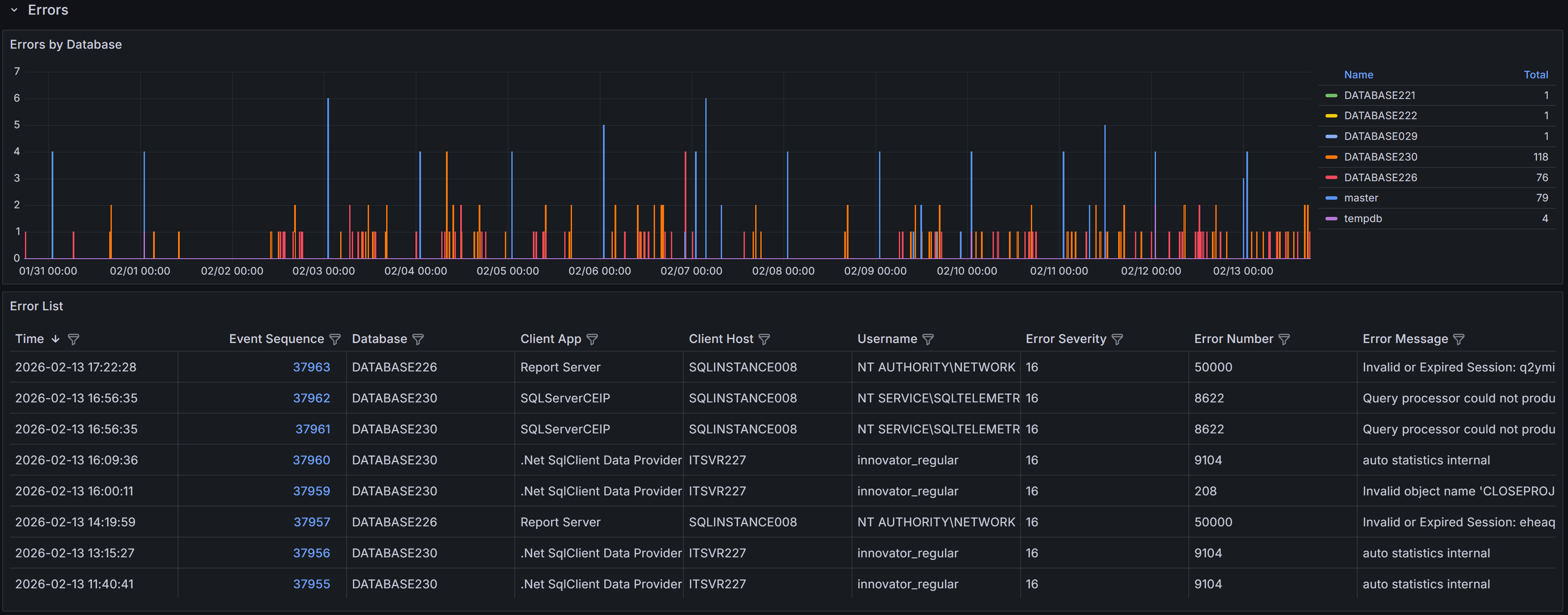

Expand the “Errors” row to see a chart that shows the number of errors per database over time.

Errors by database

Errors by database

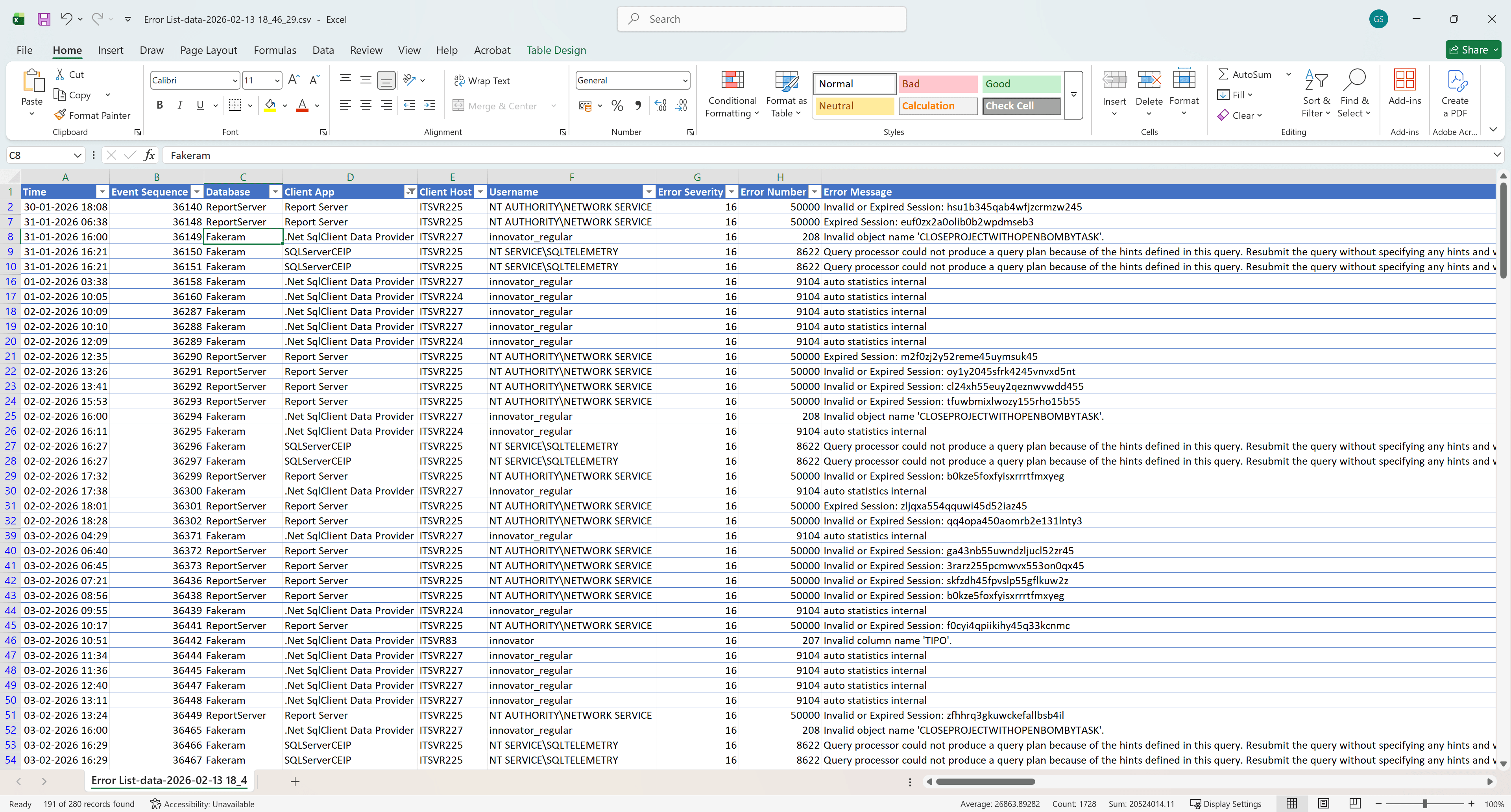

Below the chart, a table lists individual error events with these columns:

- Time: the time the error occurred

- Event sequence: a unique identifier for the error event

- Database: Name of the database where the error occurred

- Client App: Name of the client application that caused the error

- Client Host: Name of the client host that originated the error

- Username: Login name of the connection where the error occurred

- Error Severity: the severity level of the error, on a scale from 16 to 25

- Error Number: the error number, which identifies the type of error

- Error Message: a brief description of the error

SQL Server error severity levels range from 0 to 25. This dashboard displays only errors with

severity 16 or higher, which represent user-correctable errors and system-level problems. Severity

16-19 errors are typically application or query errors that users can fix. Severity 20-25 errors

indicate serious system problems that may require DBA intervention. Understanding severity helps you

prioritize which errors need immediate attention.

Note

Error 17830 (“Network error code 0x2746 occurred while establishing a connection”) is excluded from

this view because it can occur very frequently during normal connection pooling and retry logic,

creating noise that obscures more actionable errors.Tip

Use the filter controls in the column headers to filter the table. Click a column header to

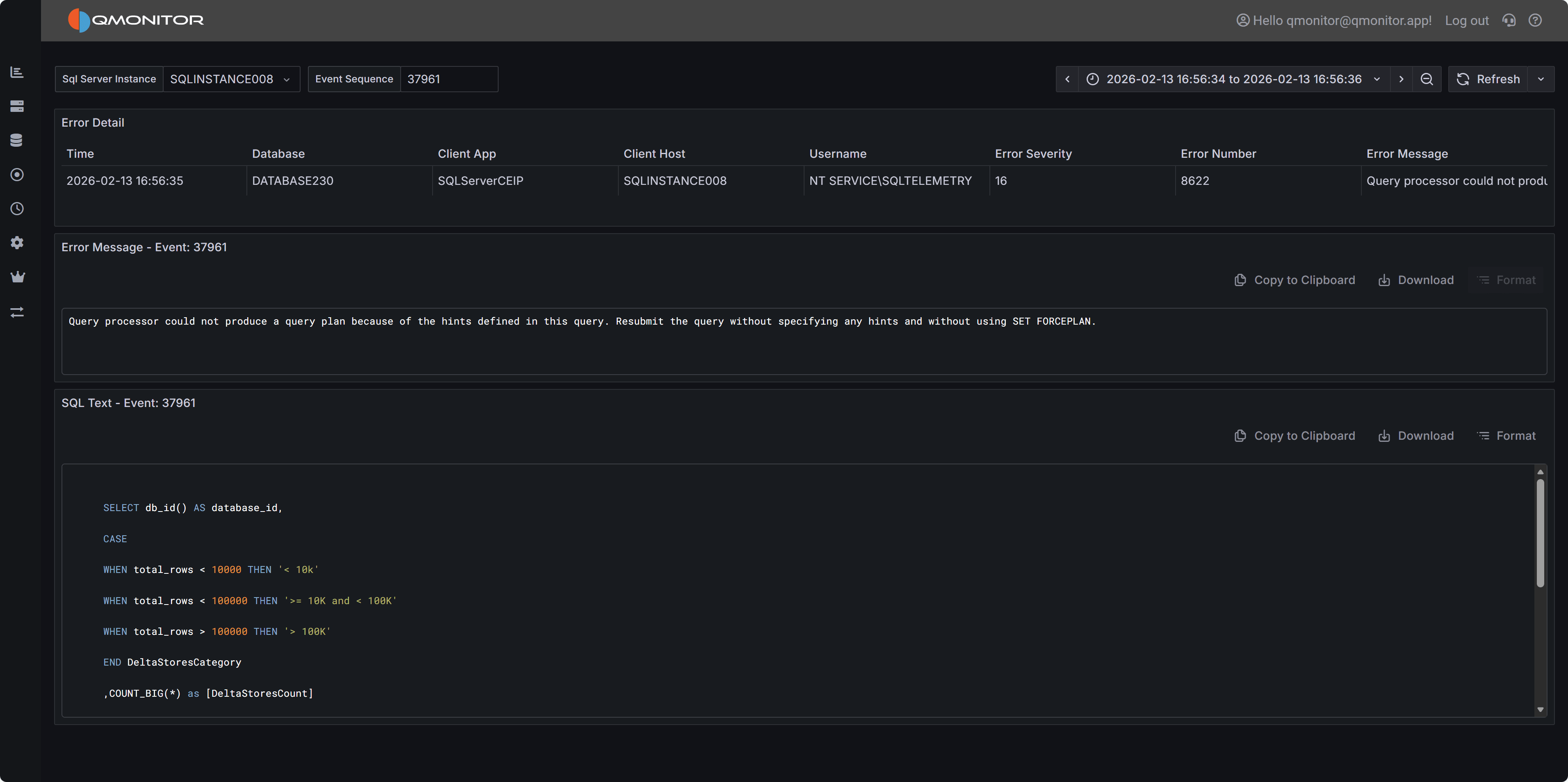

sort by that column: each click cycles through ascending, descending, and no sort.Error Details

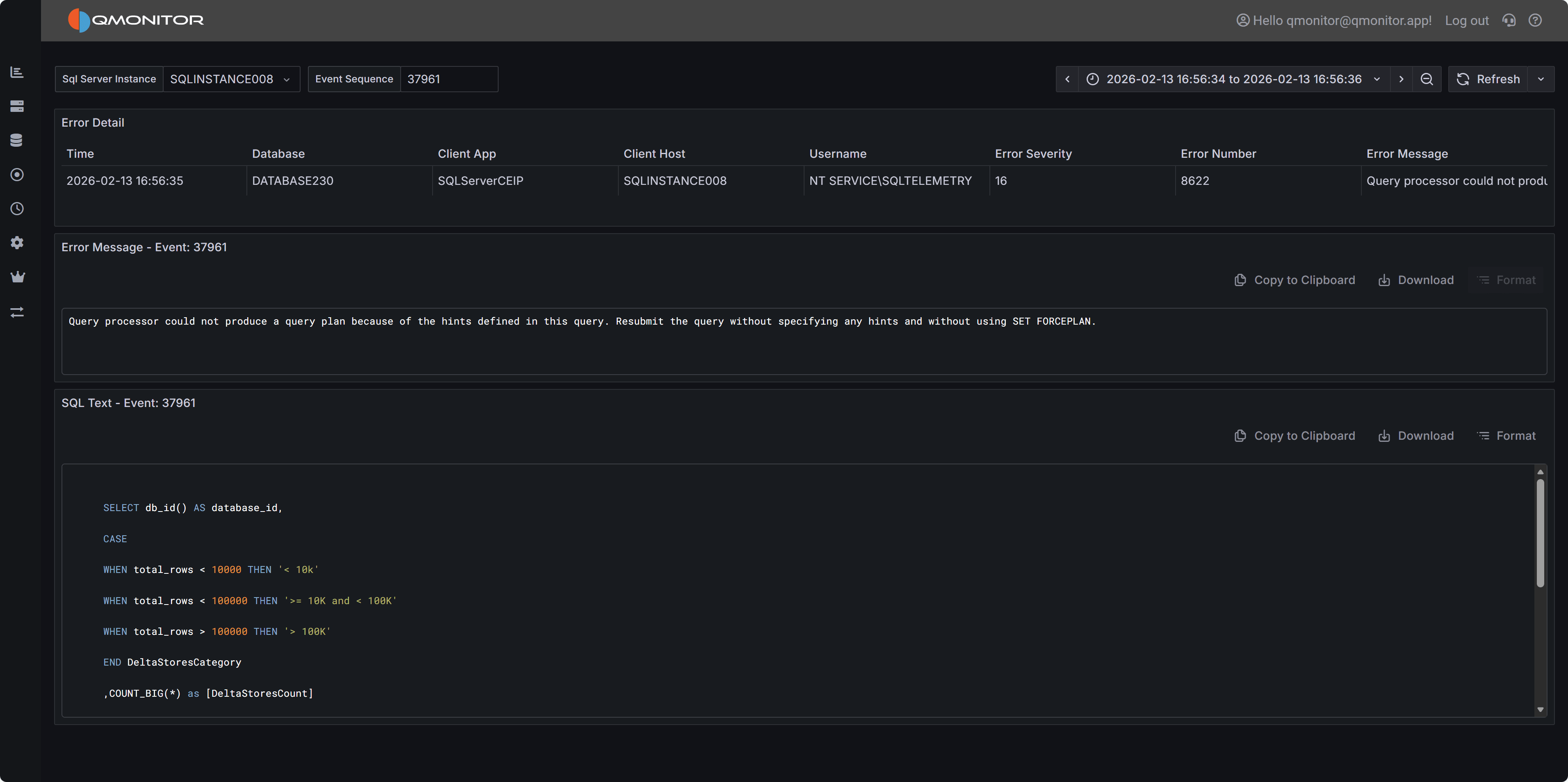

Click the link in the Event Sequence column to open the error details dashboard.

It shows the full error message and, when available, the SQL statement that caused the error.

The SQL text may be unavailable for some error types.

Error Details

Error Details

Common Error Patterns to Investigate

When analyzing errors, watch for these common patterns:

Permission Errors (229, 297, 300, 15247) - Users attempting operations they don’t have rights to perform.

Review permissions and ensure applications are using appropriate service accounts.

Connection Errors (18456) - Failed login attempts. May indicate incorrect credentials, expired

passwords, or potential security issues.

Object Not Found (207, 208) - Queries referencing columns, tables, views, or procedures that don’t exist. Often

occurs after deployments or when applications use wrong database contexts.

A complete list of SQL Server error numbers and their meanings can be found in the official documentation:

Using the Errors Dashboard Effectively

Start by filtering the time range to focus on recent errors or specific time periods when users

reported issues. Use the database filter to focus on specific databases if you’re responsible for

particular applications.

Filter by Error Number to display similar errors together. Grouping by error number helps you

identify whether a single issue is affecting multiple users or databases.

When you find patterns of repeated errors, click through to the error details to examine the full

error message and SQL statement. The SQL text often reveals the specific query or operation causing

problems, allowing you to identify whether the issue is in application code, database schema, or

data quality.

Monitor error trends over time by comparing different time periods. Increasing error rates may

indicate degrading application quality, growing data volumes causing queries to fail, or infrastructure

issues affecting database connectivity.

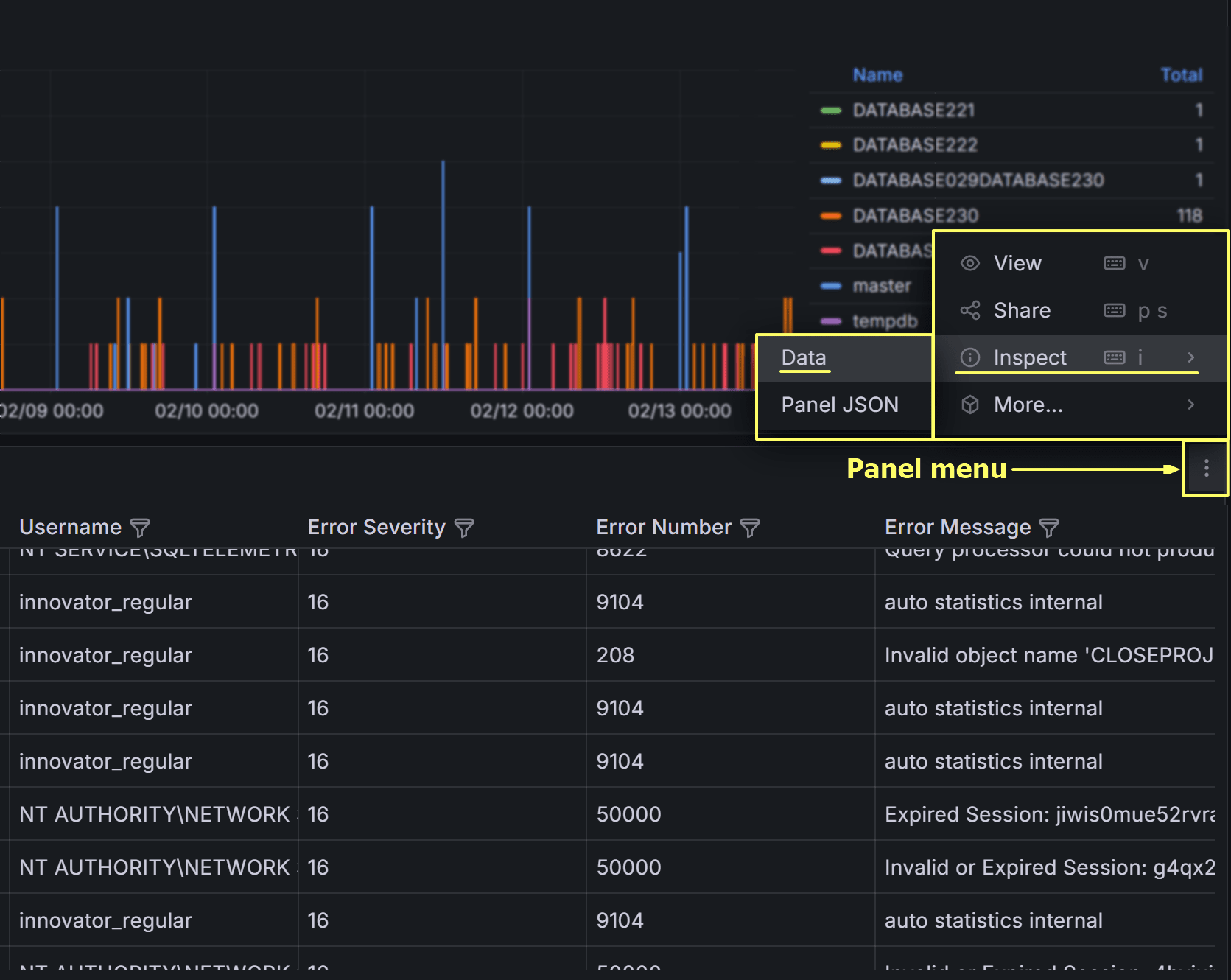

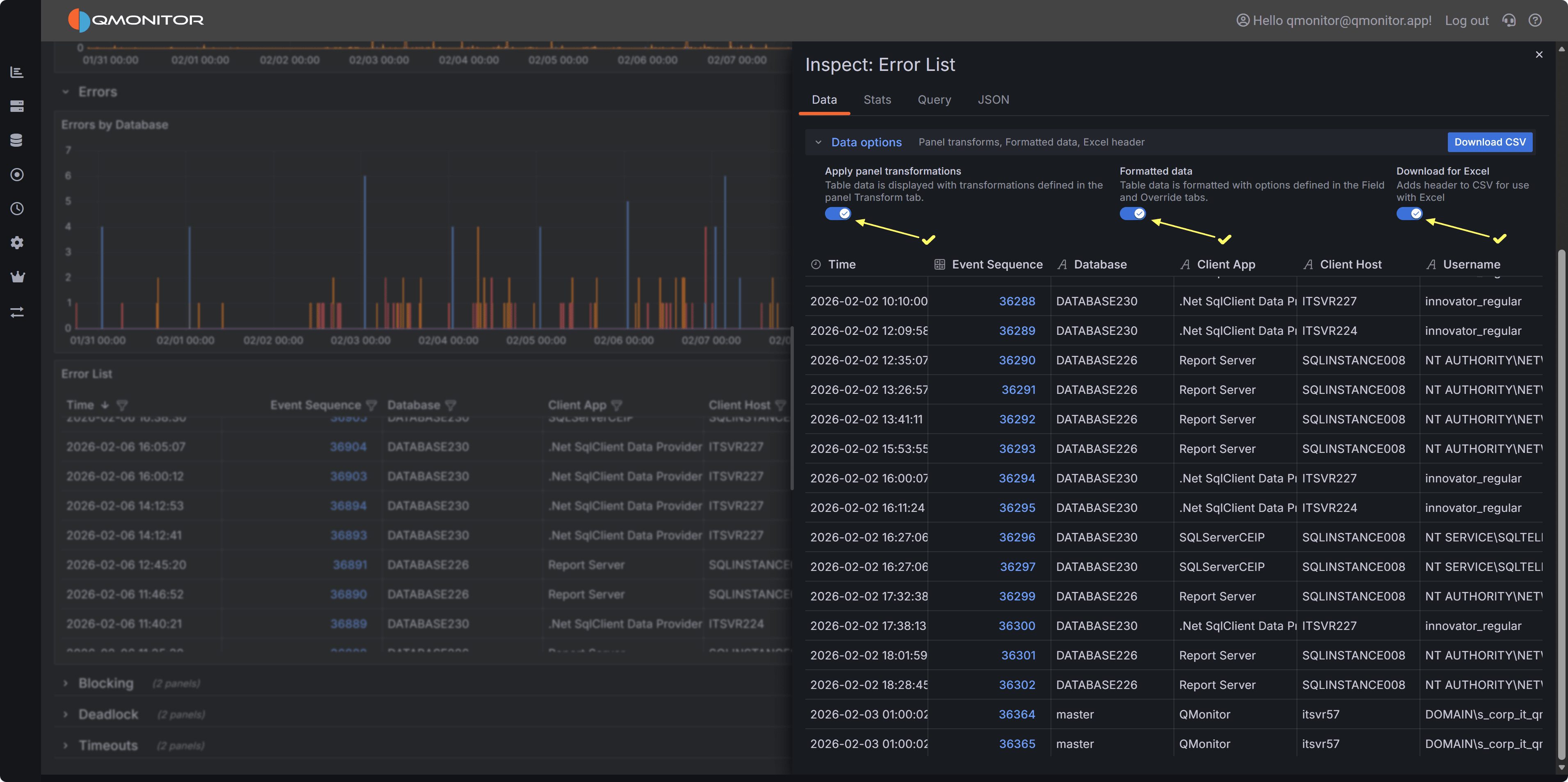

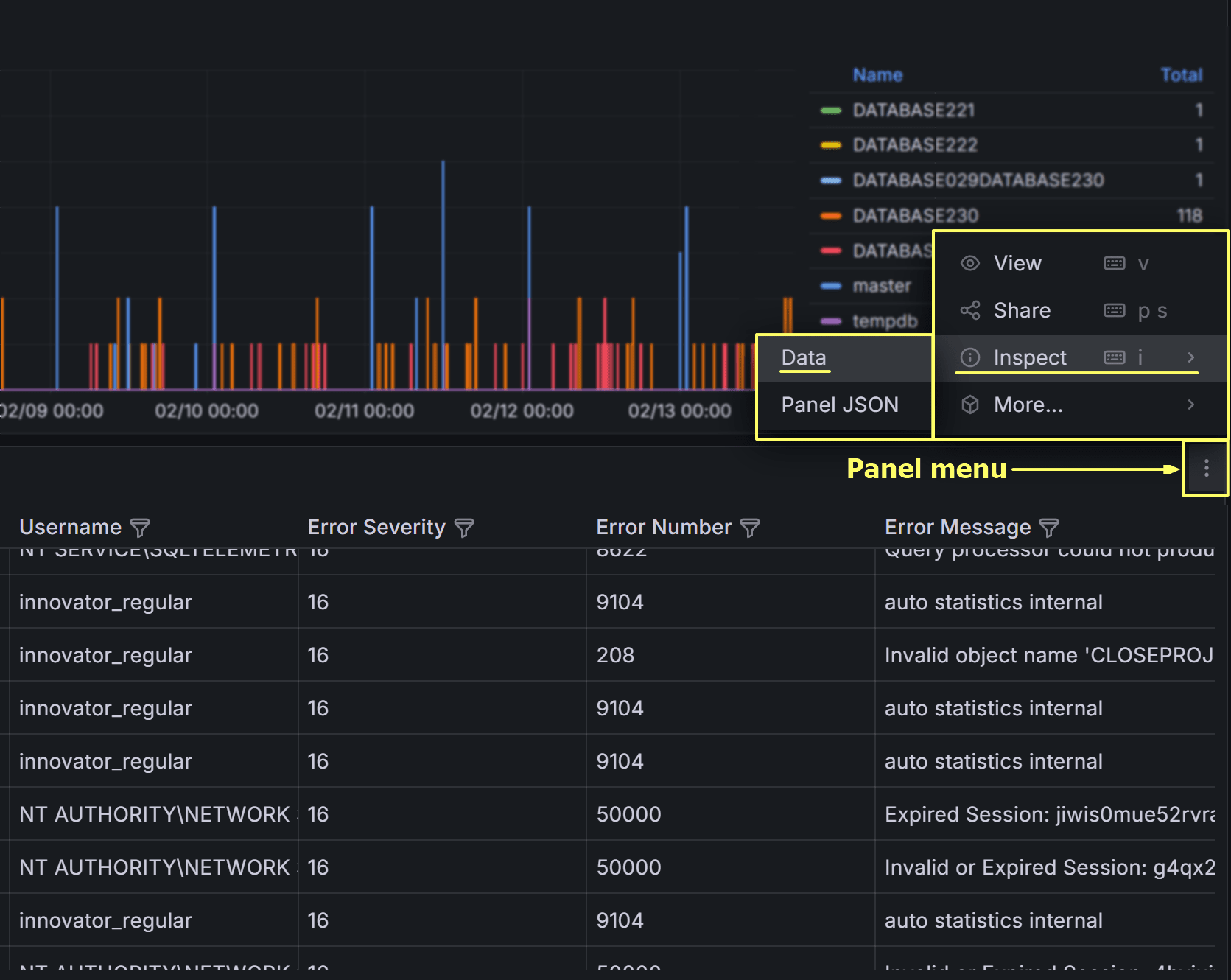

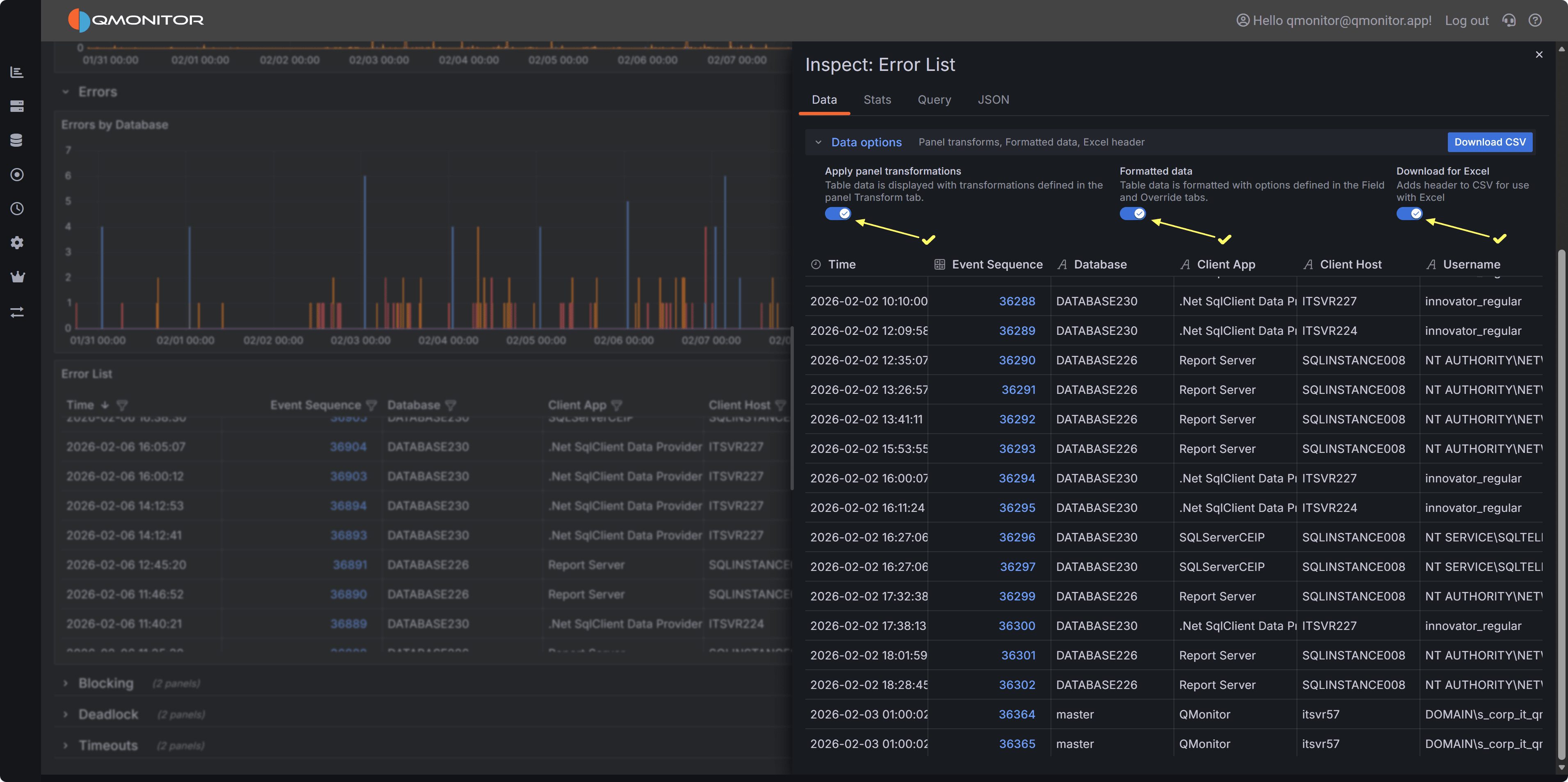

Exporting Error Events

You can export the error events table to CSV for offline analysis or sharing with development teams.

Click on the three-dot menu in the table header and select Inspect –> Data to download the data in CSV format.

On the next dialog, make sure to check all three switches to download a result set that resembles the

table view in the dashboard as closely as possible, including all columns and filters.

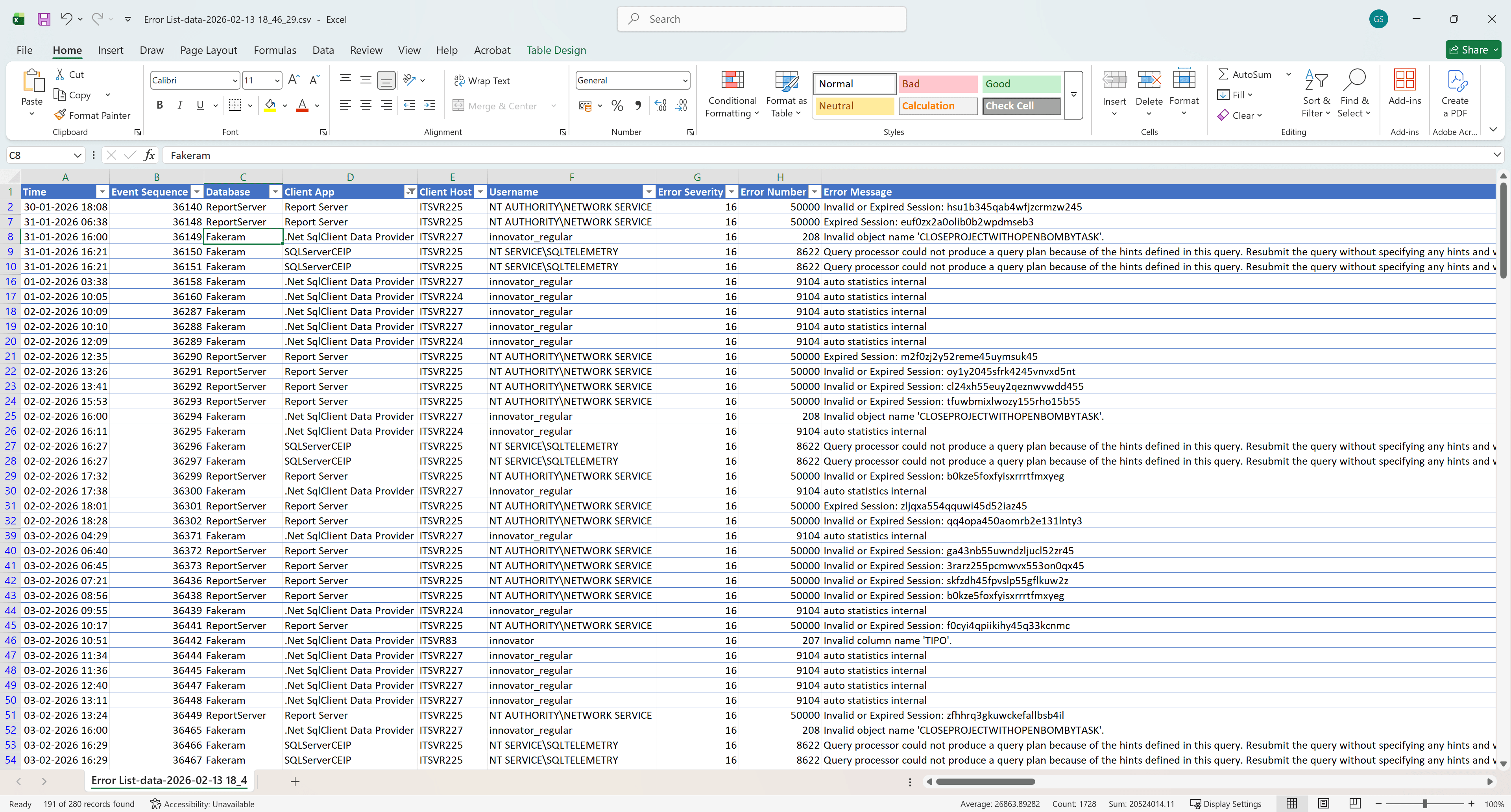

Click Download CSV to export the data. You can then open the CSV file in Excel or other tools for further analysis,

such as pivoting by error number or database to identify common issues.

This is what the exported CSV file looks like when opened in Excel, with all columns and filters applied:

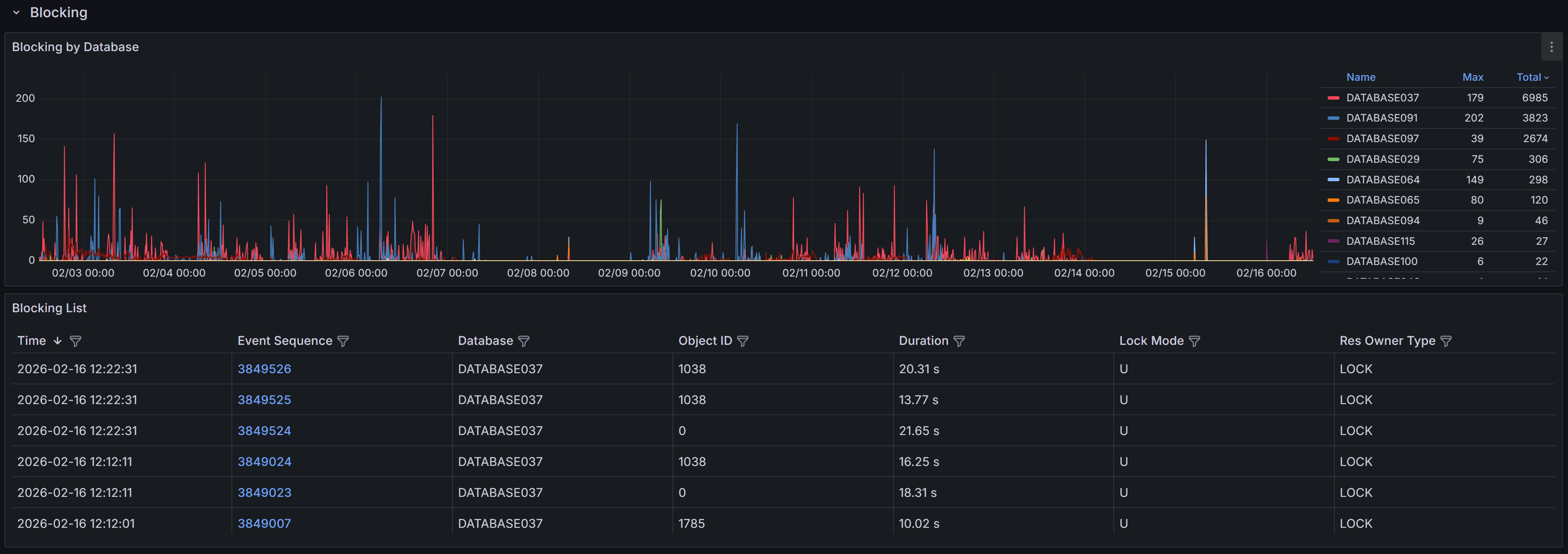

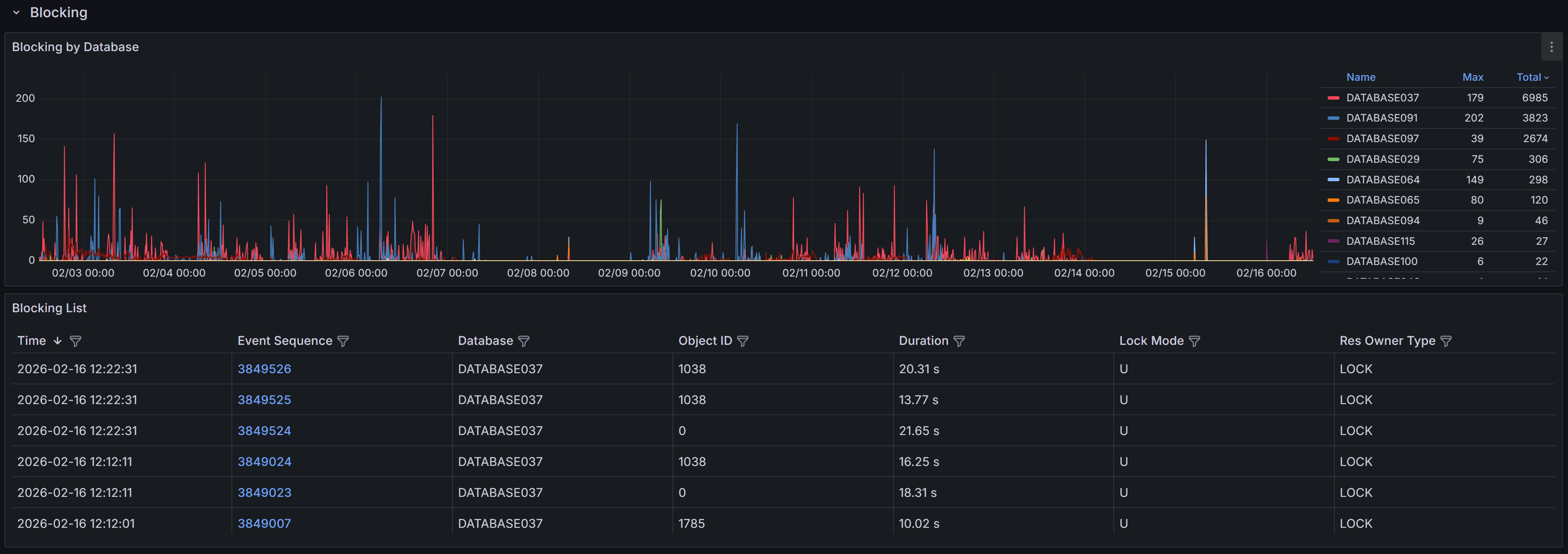

4.1.4.2 - Blocking

Blocking Events

The Blocking dashboard helps you identify and diagnose sessions that are waiting for locks held by

other sessions.

Important

Blocking occurs when one transaction holds a lock on a resource that another transaction needs to access,

causing the second transaction to wait. While some blocking is normal in multi-user database systems,

excessive or prolonged blocking can severely impact application performance and user experience.Understanding blocking patterns helps you identify problematic

queries, optimize transaction design, and improve concurrency.

Expand the “Blocking” row to view a chart that shows the number of blocking events

for each database.

SQL Server generates blocked process events only when a session waits on a lock longer than the configured

blocked process threshold. By default, this threshold is set to 0 seconds, which means SQL Server

does not generate blocking events at all.

Tip

The QMonitor setup script configures the blocked process threshold to 10 seconds as a recommended starting

point. If you see too many blocking events for brief waits that don’t cause problems, increase the threshold

to 15-20 seconds. After resolving most major blocking issues, lower it to 5 seconds to catch emerging problems

early.The blocking events table below the chart provides detailed information about each blocking occurrence:

- Time shows when the blocking event was captured, helping you correlate blocking with other

activities like batch jobs or peak usage periods.

- Event Sequence provides a unique identifier for the blocking event that you can reference when

investigating or communicating with team members.

- Database identifies which database the blocking occurred in, helping you route investigation to

the appropriate database owners.

- Object ID indicates the specific table or index involved in the lock, useful for identifying

which database objects are causing contention.

- Duration displays how long the blocked session waited before the event was captured. Note that

this is the wait time at the moment of capture; if blocking continued, the actual total wait time

may be longer.

- Lock Mode shows the type of lock the blocking session holds (e.g., Exclusive, Shared, Update).

Understanding lock modes helps you identify whether blocking is caused by writes blocking reads,

writes blocking writes, or other lock compatibility issues.

- Resource Owner Type indicates what type of resource is being locked, such as a row, page, table,

or database.

Use the column filters and sort controls to filter and sort the table.

Click a row to open the Blocking detail dashboard.

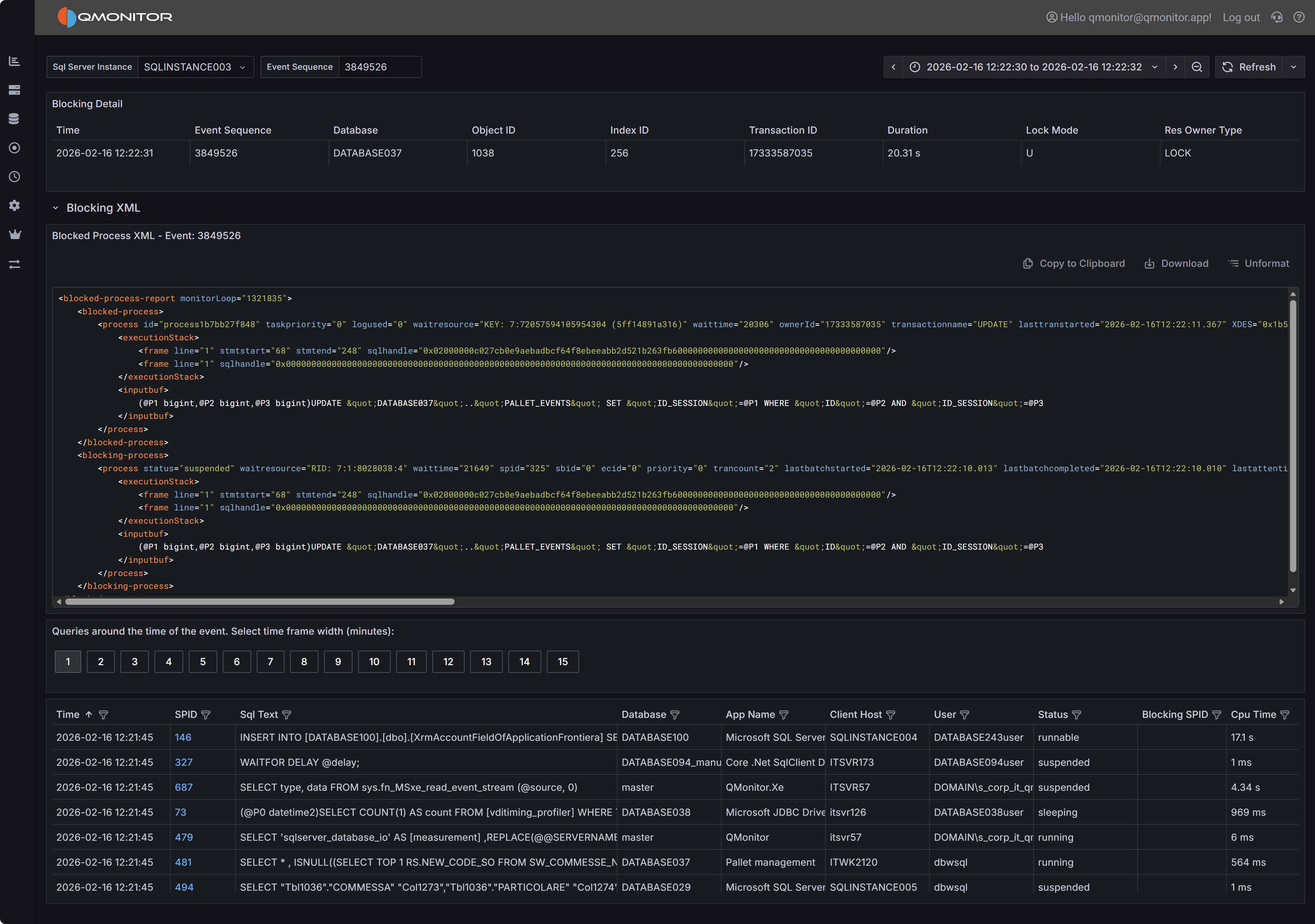

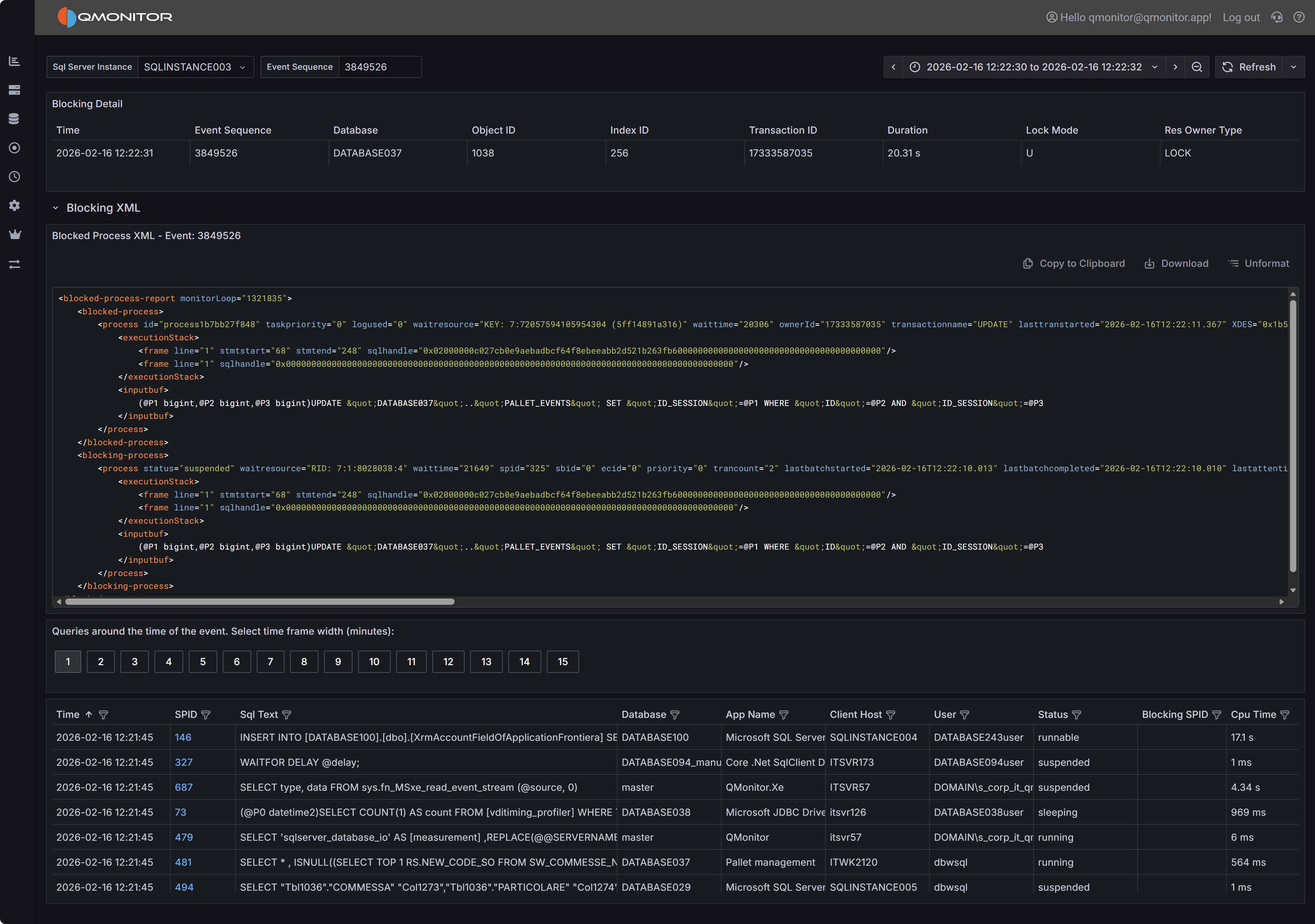

Blocking Event Details

When you click on a blocking event in the main table, the Blocking Detail dashboard opens with

comprehensive information to help you diagnose the root cause.

Blocking event detail showing blocked and blocking processes

Blocking event detail showing blocked and blocking processes

Event Summary

The top table provides key information about both the blocked and blocking processes. You’ll see the

session IDs (SPIDs) of the blocked and blocking sessions, how long the blocking lasted, which database

and object were involved, the lock mode causing the block, and the resource owner type. This summary

gives you immediate context about what was blocked, what was blocking it, and how serious the impact was.

Blocked Process Report XML

The Blocked Process Report XML panel displays the complete XML report generated by SQL Server when the

blocking event occurred. This XML contains detailed information about both the blocked and blocking

sessions, including the SQL statements they were executing, their transaction isolation levels, and

the specific resources they were waiting for or holding.

The XML includes one or more <blocked-process> nodes describing sessions that were waiting, and one

or more <blocking-process> nodes describing sessions that held the locks. Each node contains attributes

and child elements that provide:

- The SQL statement being executed (in the

inputbuf element). This might be truncated if the statement is very long. - The transaction isolation level

- Lock resource details (database ID, object ID, index ID, and the specific row or page being locked)

- The login name and host name of the session

- The current wait type and wait time

While the complete XML schema is documented in Microsoft’s SQL Server documentation, the most immediately

useful information is typically the SQL text from both the blocked and blocking processes. Documenting

the complete XML structure is beyond the scope of this documentation.

Active Sessions Grid

The bottom grid lists all sessions that were active around the time the blocking event occurred. This

context is valuable because blocking chains often involve multiple sessions, and understanding the

overall session activity helps you identify patterns and root causes.

Use the time window buttons above the grid to adjust how far before and after the blocking event you

want to see session data. Options range from 1 minute to 15 minutes. A wider window provides more

context but may include unrelated sessions.

Tip

Filter the grid by the “Blocking or Blocked” column set to “1” to see only sessions that were

either blocking another session or being blocked. This focused view helps you quickly identify

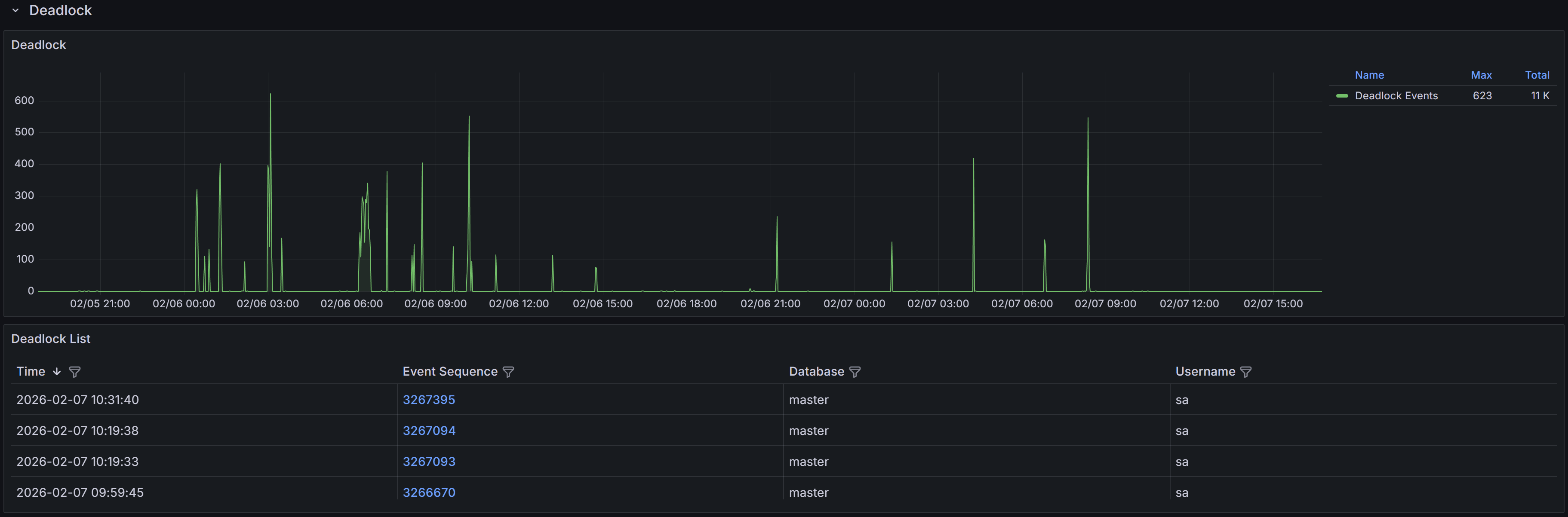

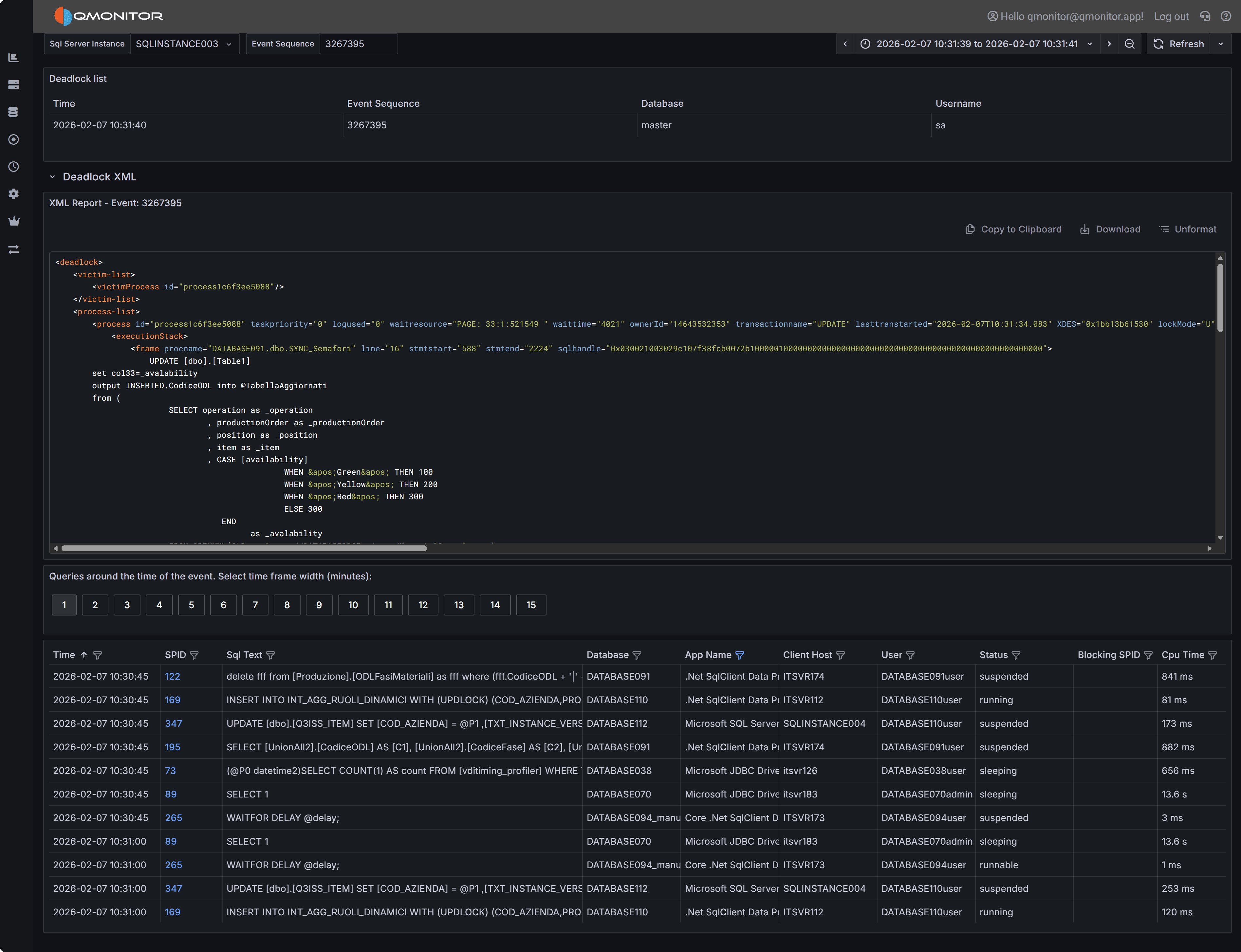

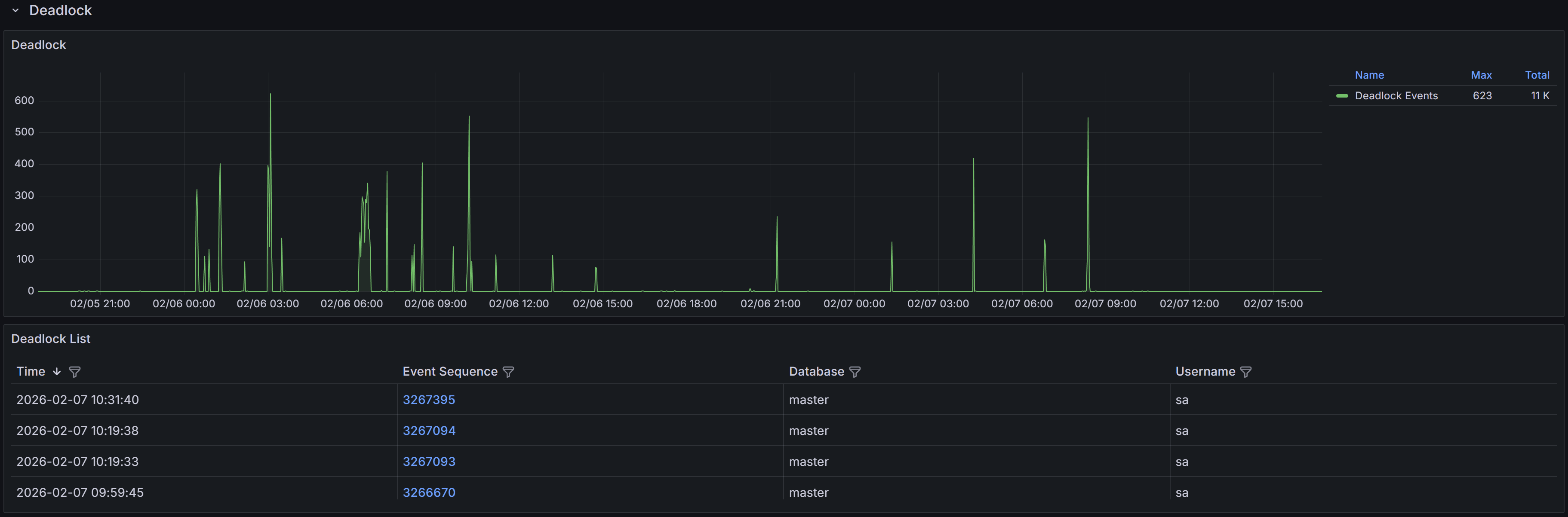

all participants in blocking chains without displaying unrelated active sessions.4.1.4.3 - Deadlocks

Information on deadlocks

The Deadlocks dashboard helps you identify and diagnose deadlock situations.

Expand the “Deadlocks” row to view a chart that shows the number of deadlocks

for each database.

Understanding Deadlocks

A deadlock occurs when two or more sessions create a circular dependency on locks.

Example

Session 1 updates Table A and then tries to update Table B, while Session 2 updates Table B and then

tries to update Table A. If both sessions start at nearly the same time, Session 1 will hold a lock on

Table A while waiting for Table B, and Session 2 will hold a lock on Table B while waiting for Table A.

Neither can proceed, creating a deadlock.SQL Server’s deadlock detector runs every few seconds to identify these circular lock dependencies. When

a deadlock is detected, SQL Server analyzes the sessions involved and chooses one as the “deadlock victim”

based on factors like transaction cost and deadlock priority. The victim’s current statement is rolled

back with error 1205, while other sessions proceed normally. The application that receives error 1205

should catch this error and retry the transaction, as the same operation will typically succeed on retry

once the competing transaction completes.

QMonitor captures deadlock events through SQL Server extended events and stores the complete deadlock

graph as XML. This graph contains detailed information about all sessions involved in the deadlock, the

resources they were competing for, and the SQL statements they were executing at the time.

The deadlock events table below the chart lists all captured deadlocks with the following information:

- Time shows when the deadlock occurred, helping you identify patterns such as deadlocks during batch

processing or peak usage periods.

- Event Sequence provides a unique identifier for the deadlock event that you can use when

communicating with team members or referencing in tickets.

- Database identifies which database the deadlock occurred in, helping you route investigation to the

appropriate database owners and application teams. This is often reported as “master”,

- but the graph itself contains the actual database context for each session.

- User Name shows the SQL Server login involved in the deadlock, useful for identifying which

applications or users are experiencing deadlock issues.

Use the column filters to narrow the list to specific databases or time periods, and sort by Time to see

the most recent deadlocks first or to identify clusters of deadlocks occurring in quick succession.

Click a row to open the Deadlock detail dashboard.

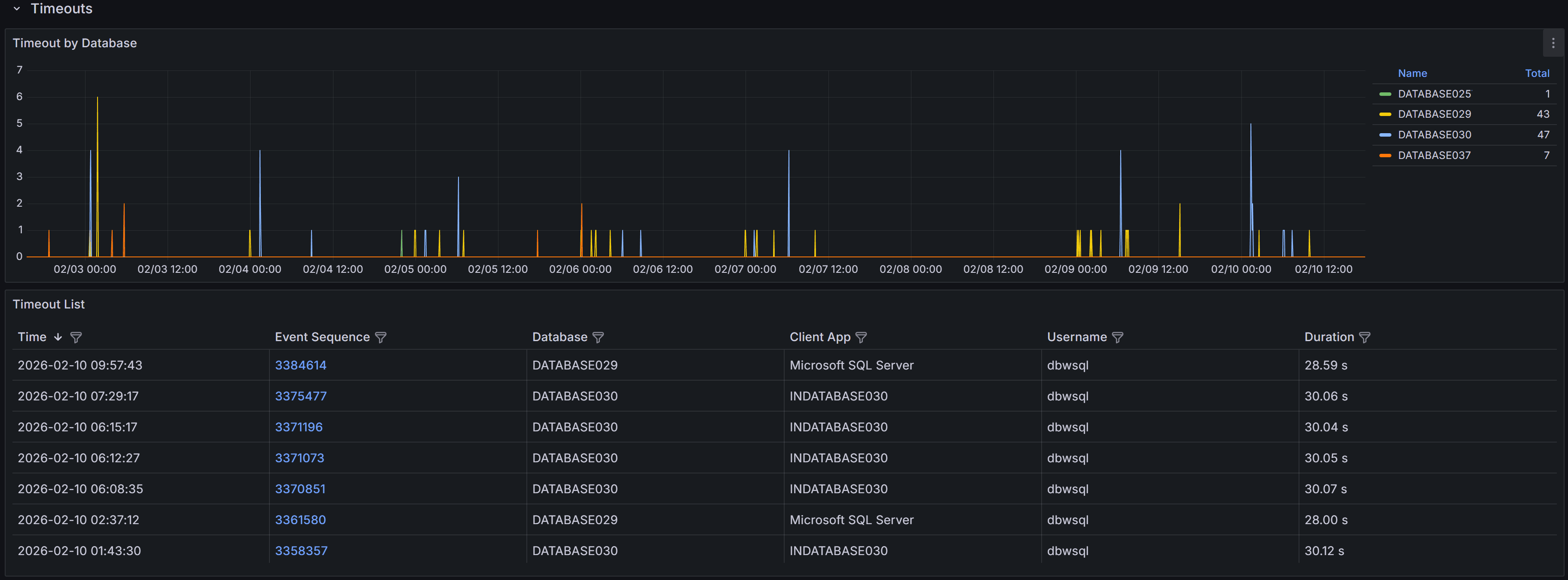

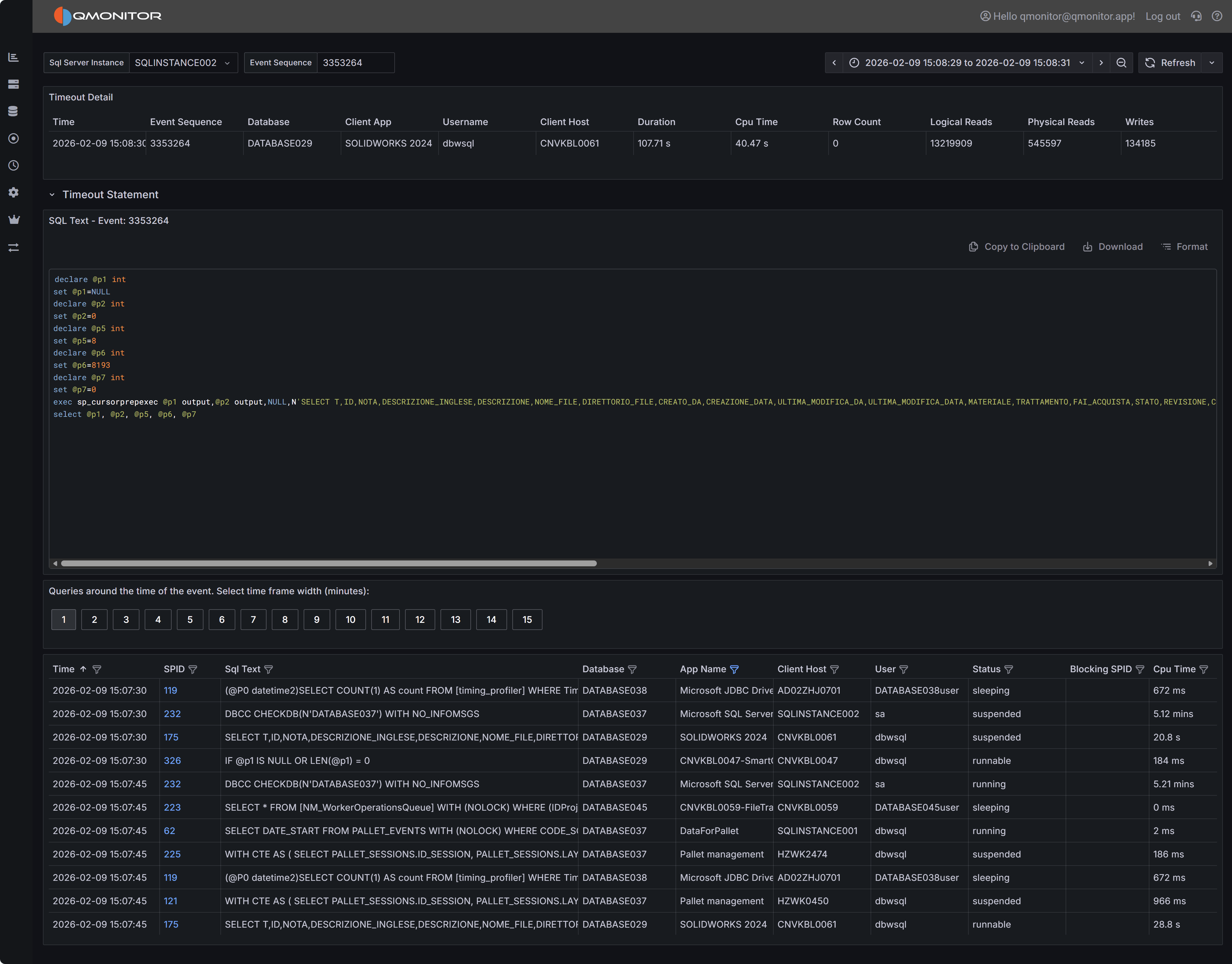

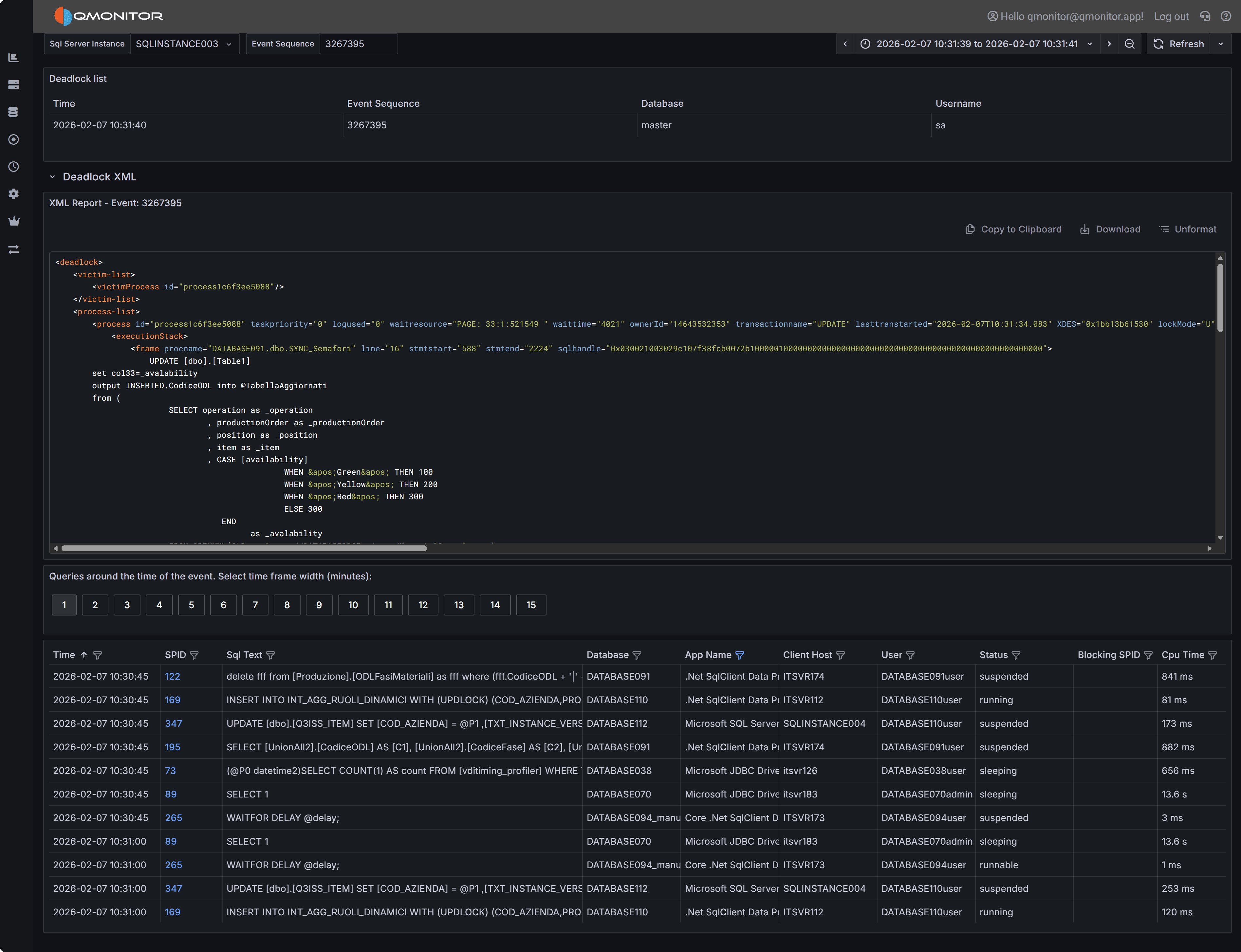

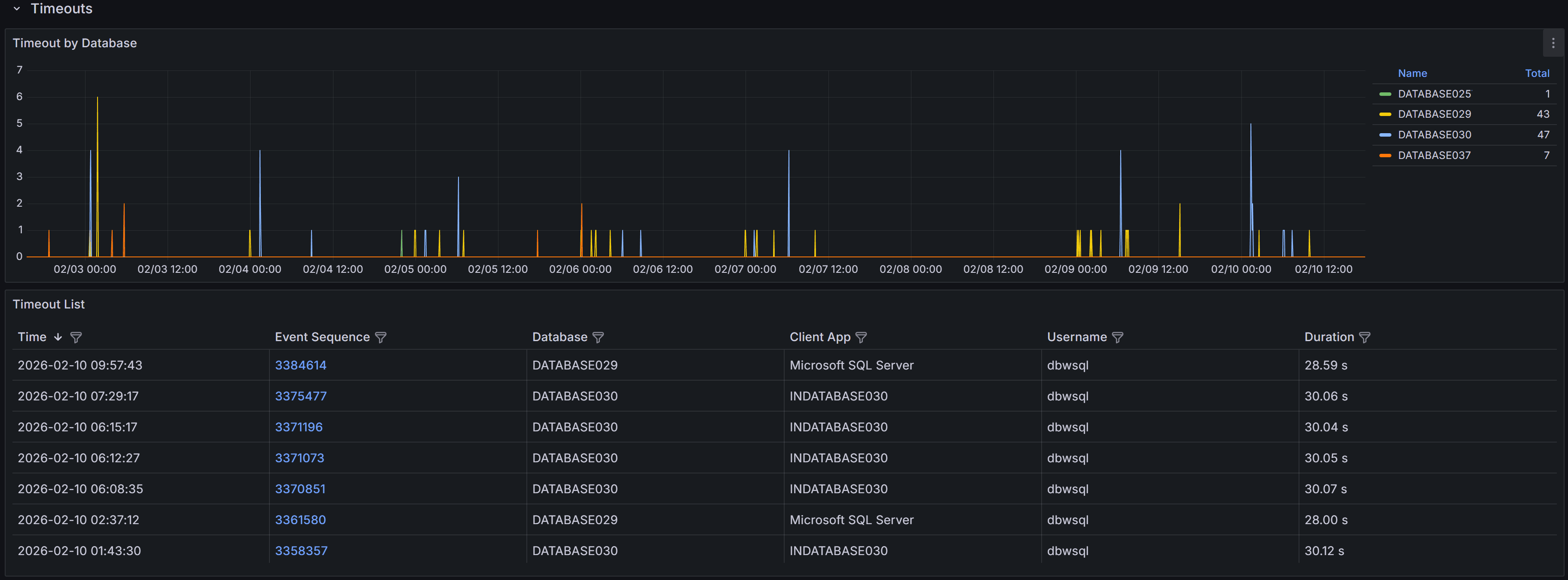

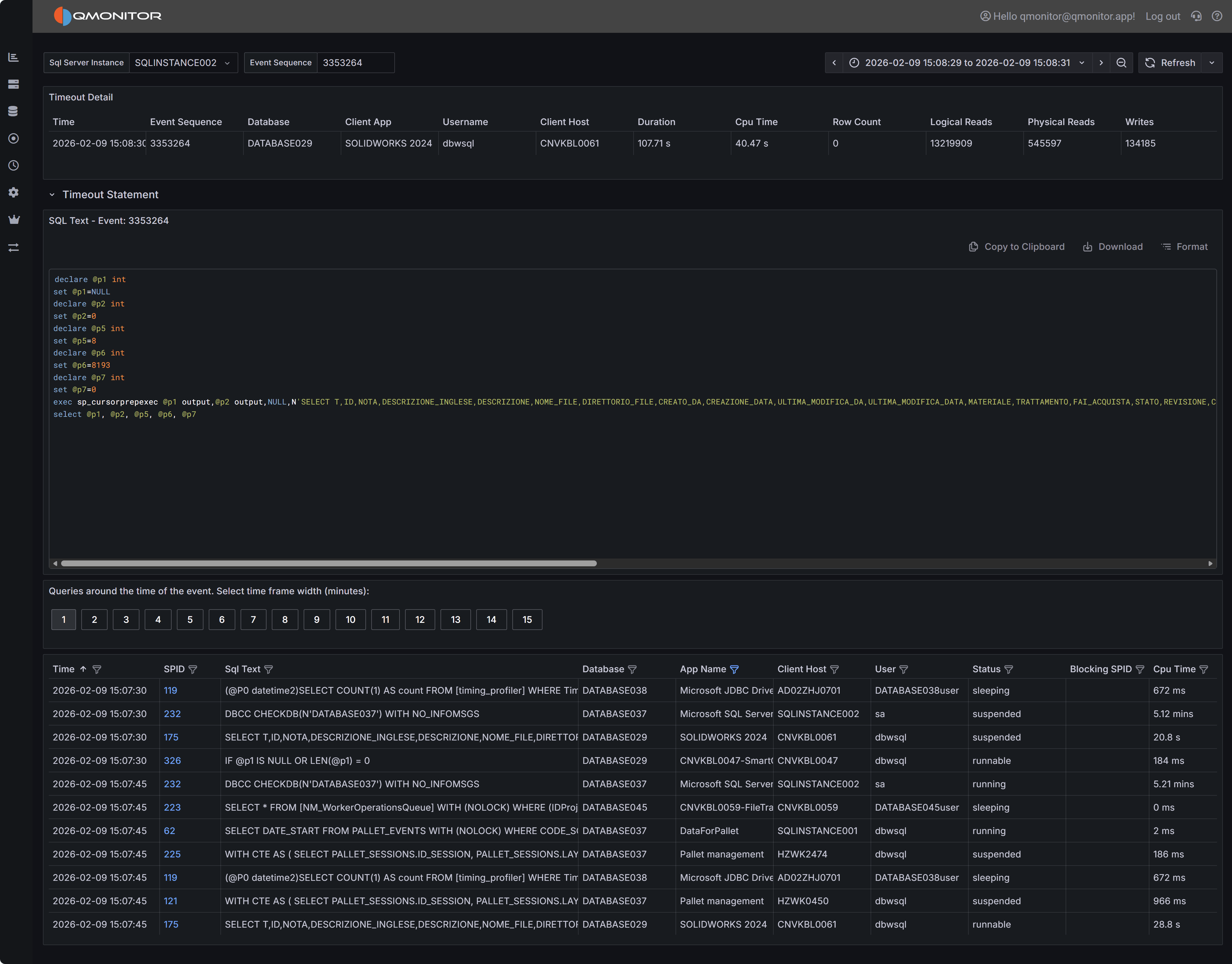

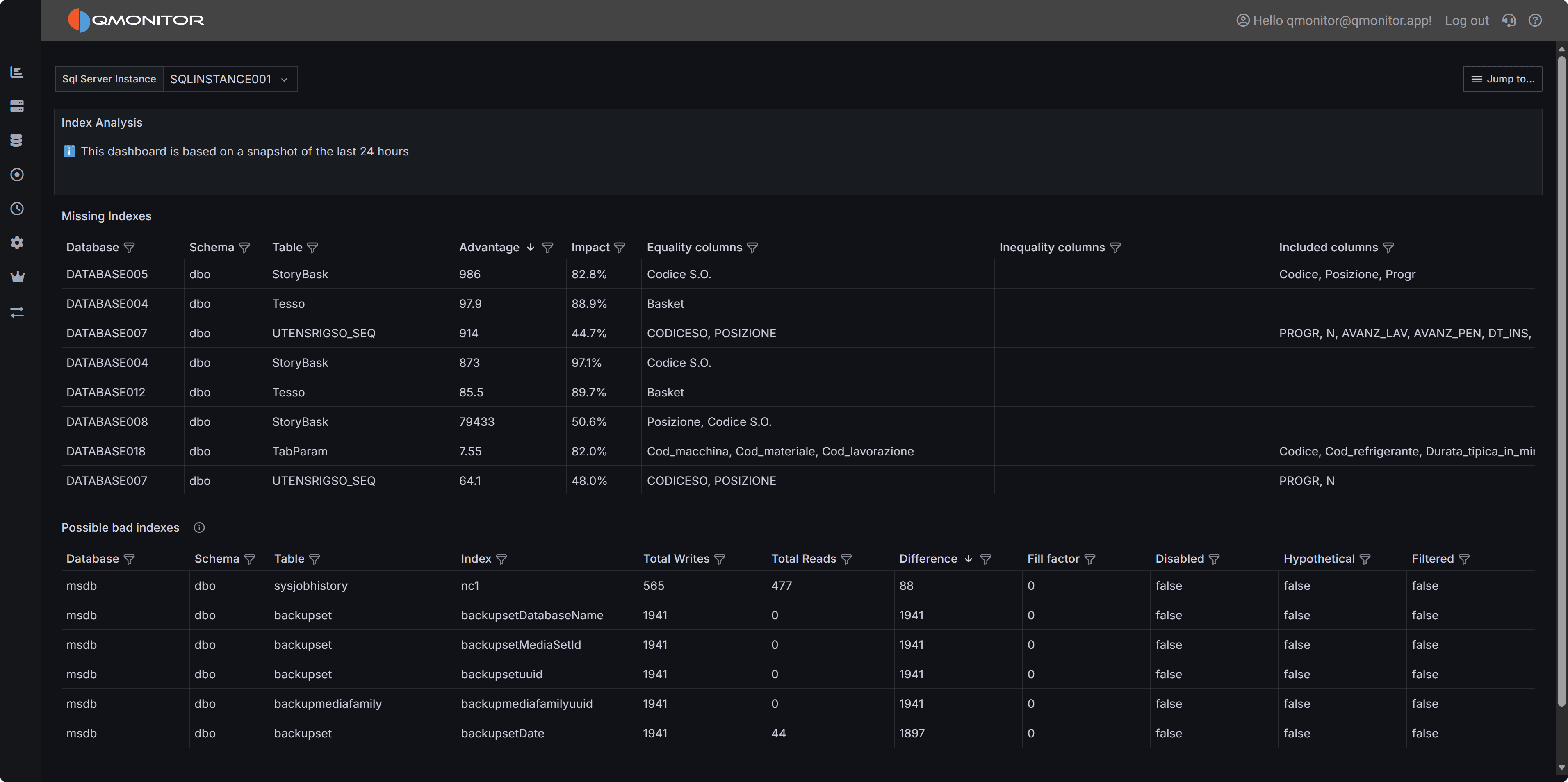

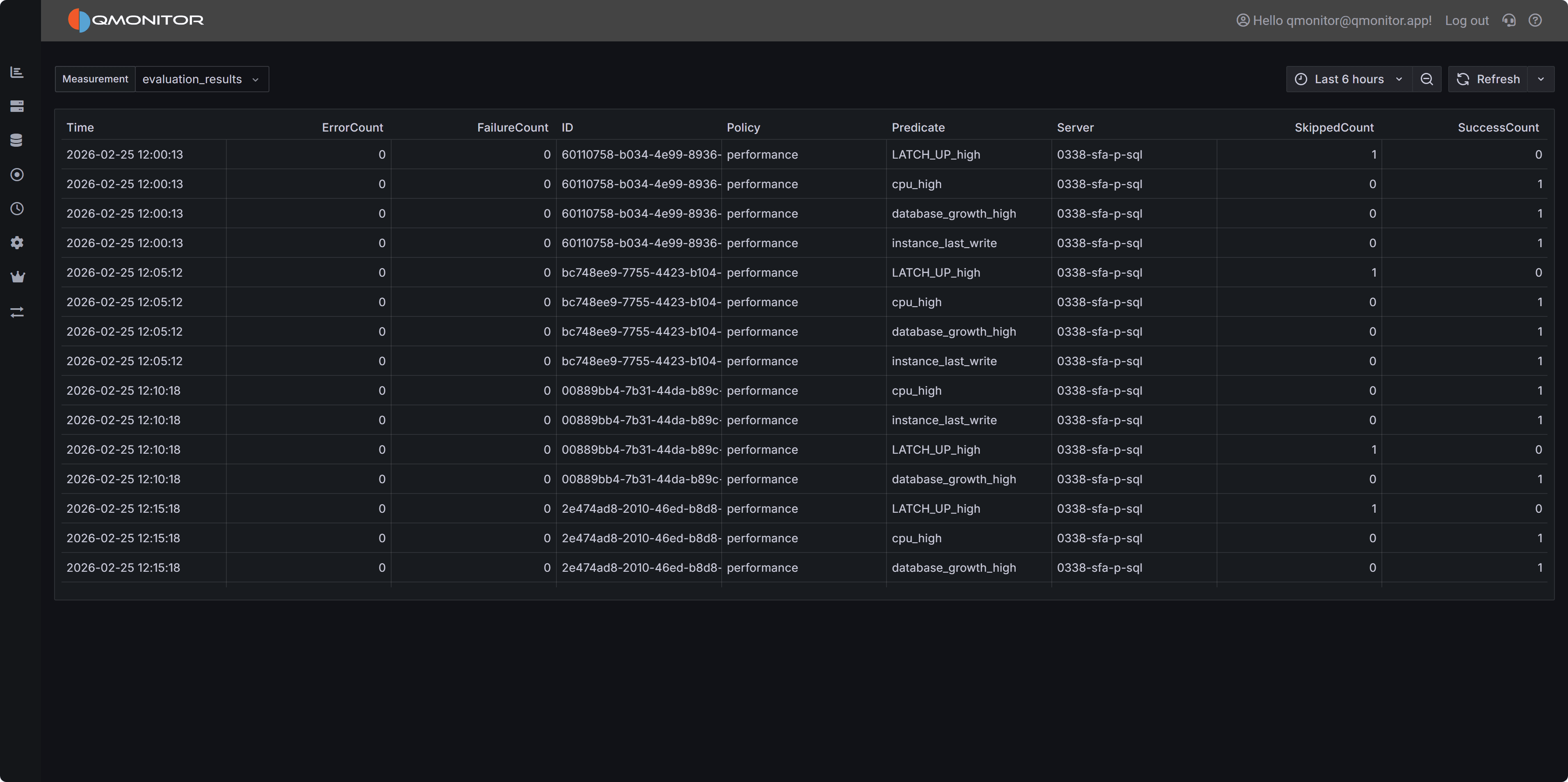

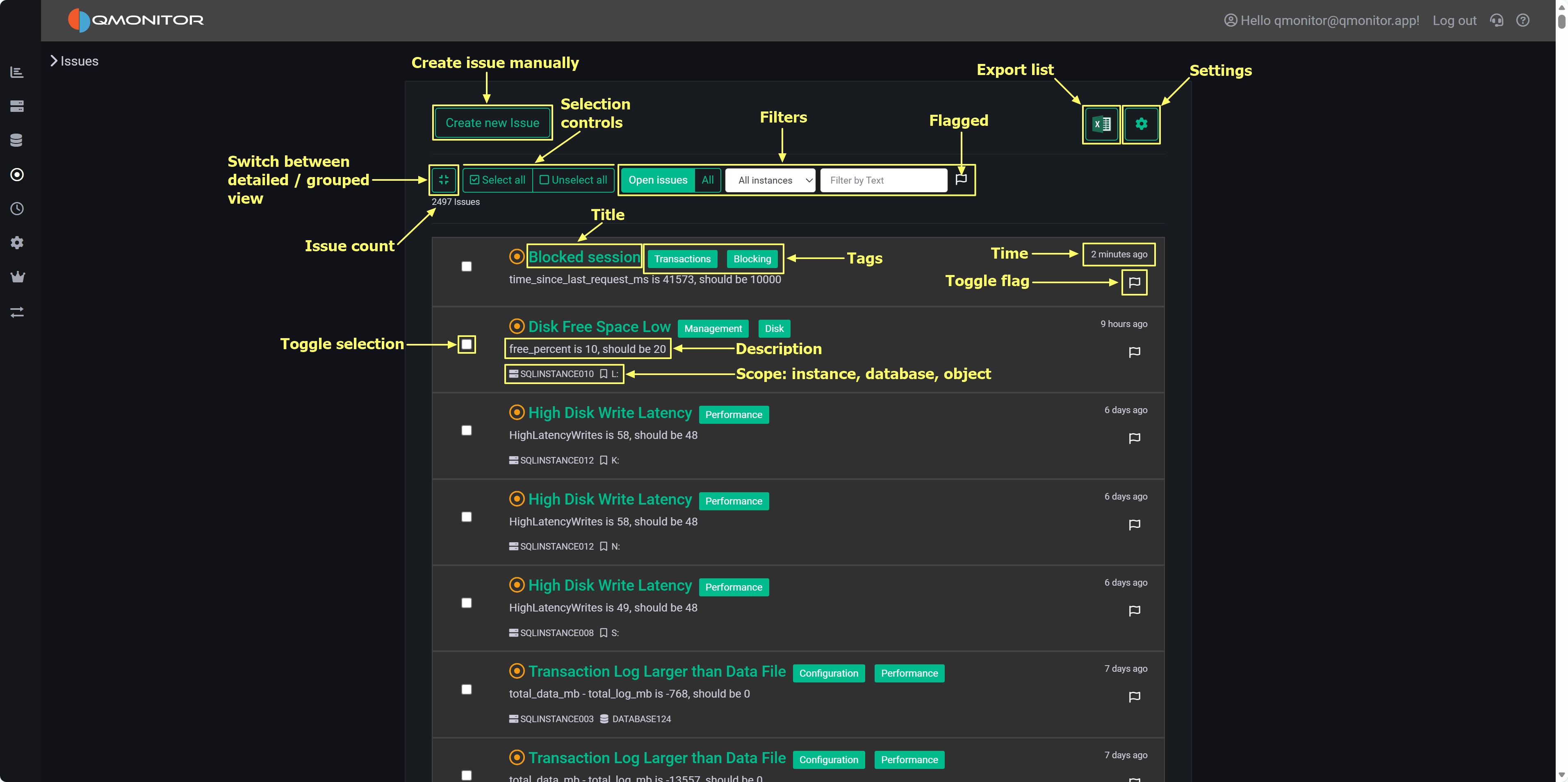

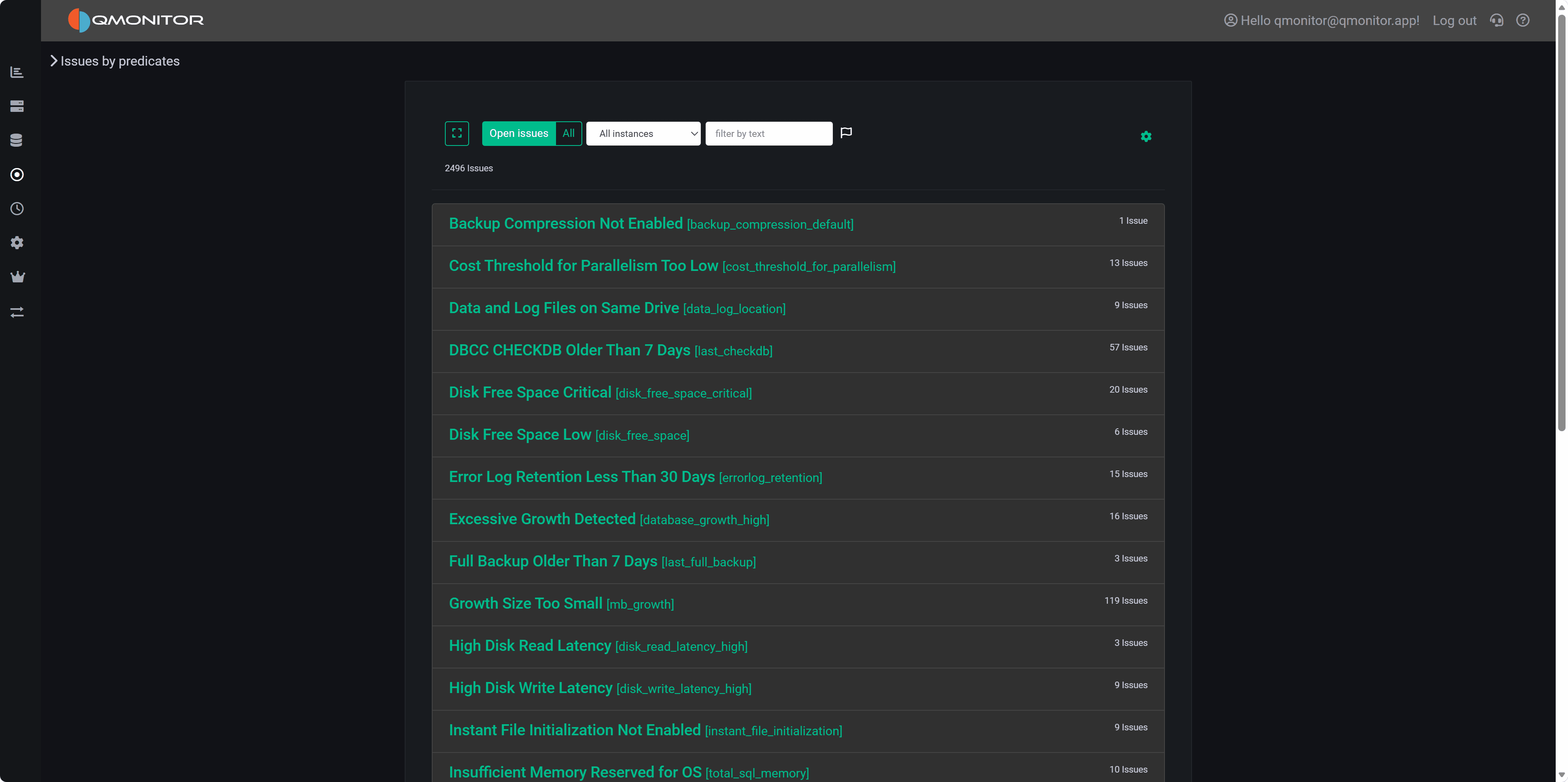

Deadlock Event Details